Fairness in machine learning is no longer a niche concern—it’s the heartbeat of ethical AI. As algorithms influence hiring decisions, loan approvals, healthcare predictions, and even criminal sentencing, the question isn’t just whether models perform accurately. It’s whether they perform fairly. But what does fairness really mean in this context, and how can it be measured, designed, and enforced?

Let’s unpack this complex but critical issue, from identifying bias to embedding fairness into every layer of the machine learning pipeline.

Understanding Fairness in Machine Learning

At its core, fairness in machine learning refers to the absence of unjust bias in algorithmic outcomes. A fair system ensures that predictions or decisions do not systematically favor or disadvantage specific individuals or groups, especially based on attributes like gender, race, age, or socioeconomic background.

Machine learning models learn from data—and data reflects the world as it is, not necessarily as it should be. Historical data may encode societal biases, which, when learned by algorithms, can perpetuate discrimination. Fairness efforts aim to break this cycle.

For example, imagine a loan approval algorithm trained on historical applications. If the dataset contains fewer approvals for a particular ethnic group due to past discrimination, the model may unintentionally reproduce that bias. This is how machine learning can reinforce inequality, even when developers have no malicious intent.

The Dimensions of Algorithmic Fairness

Fairness in machine learning isn’t one-size-fits-all. Researchers have proposed several definitions—each addressing a different ethical perspective. Let’s explore a few major ones.

1. Demographic Parity

This principle states that positive outcomes should occur at the same rate in every group. For instance, if 50% of all applicants get approved for a loan, roughly 50% of each demographic subgroup should also be approved. However, this approach ignores differences in qualification rates between groups; it looks only at whether results are equal.
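
To make the idea concrete, here is a minimal sketch of how approval rates per group could be compared. The function names and the toy data are illustrative assumptions, not part of any particular library.

```python
from collections import defaultdict

def selection_rates(predictions, groups):
    """Share of positive predictions (1 = approved) within each group."""
    positives = defaultdict(int)
    totals = defaultdict(int)
    for pred, group in zip(predictions, groups):
        totals[group] += 1
        positives[group] += pred
    return {g: positives[g] / totals[g] for g in totals}

def demographic_parity_difference(predictions, groups):
    """Largest gap in selection rate between any two groups (0 means parity)."""
    rates = selection_rates(predictions, groups)
    return max(rates.values()) - min(rates.values())

# Hypothetical toy data: 1 = approved, 0 = denied.
preds = [1, 1, 0, 1, 0, 0, 1, 0]
groups = ["A", "A", "A", "A", "B", "B", "B", "B"]

print(selection_rates(preds, groups))                # {'A': 0.75, 'B': 0.25}
print(demographic_parity_difference(preds, groups))  # 0.5
```

A difference of 0 would mean every group is approved at the same rate; how large a gap is acceptable is a policy decision rather than something the code can settle.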

2. Equal Opportunity

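Equal opportunity fairness requires that individuals who are truly qualified have an equal chance of receiving a positive outcome, regardless of group membership. In metric terms, it asks for equal true positive rates across demographics. Because it only compares people who actually qualify, approval rates may still differ between groups when qualification rates differ, which usually makes it easier to reconcile with model accuracy than demographic parity.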
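
As a rough sketch, the true positive rate per group can be computed from the true labels and the predictions. The names and data below are hypothetical and chosen only for illustration.

```python
def true_positive_rates(y_true, y_pred, groups):
    """Per group: among truly qualified cases (label 1), the share predicted 1."""
    hits, qualified = {}, {}
    for truth, pred, group in zip(y_true, y_pred, groups):
        if truth == 1:
            qualified[group] = qualified.get(group, 0) + 1
            hits[group] = hits.get(group, 0) + pred
    return {g: hits[g] / qualified[g] for g in qualified}

def equal_opportunity_difference(y_true, y_pred, groups):
    """Largest gap in true positive rate between any two groups."""
    rates = true_positive_rates(y_true, y_pred, groups)
    return max(rates.values()) - min(rates.values())

# Hypothetical toy data: 1 = qualified / approved, 0 = not.
y_true = [1, 1, 1, 0, 1, 1, 0, 0]
y_pred = [1, 1, 0, 0, 1, 0, 0, 1]
groups = ["A", "A", "A", "A", "B", "B", "B", "B"]

tpr = true_positive_rates(y_true, y_pred, groups)
print({g: round(r, 3) for g, r in tpr.items()})
print(round(equal_opportunity_difference(y_true, y_pred, groups), 3))
```

In this toy data, qualified applicants in group A are approved two times out of three and those in group B one time out of two, so the model falls short of equal opportunity.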

3. Predictive Parity

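This form of fairness ensures that predictions are equally reliable across groups. In other words, if an algorithm predicts “likely to repay” for two people from different demographics, both predictions should be equally trustworthy. In metric terms, the share of positive predictions that turn out to be correct (the positive predictive value, or precision) should be the same for every group.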
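
One way to check this, sketched here with hypothetical names and data, is to compute for each group how often a positive prediction turns out to be correct.

```python
def positive_predictive_values(y_true, y_pred, groups):
    """Per group: among cases predicted 1, the share whose true label is 1."""
    correct, predicted = {}, {}
    for truth, pred, group in zip(y_true, y_pred, groups):
        if pred == 1:
            predicted[group] = predicted.get(group, 0) + 1
            correct[group] = correct.get(group, 0) + truth
    return {g: correct[g] / predicted[g] for g in predicted}

# Hypothetical toy data: prediction 1 = "likely to repay", label 1 = repaid.
y_true = [1, 1, 0, 0, 1, 0, 0, 1]
y_pred = [1, 1, 1, 0, 1, 1, 1, 0]
groups = ["A", "A", "A", "A", "B", "B", "B", "B"]

ppv = positive_predictive_values(y_true, y_pred, groups)
print({g: round(v, 3) for g, v in ppv.items()})
```

Here a "likely to repay" prediction is right two times out of three for group A but only one time out of three for group B, so the same prediction is less trustworthy for B.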

4. Individual Fairness

Instead of focusing on groups, individual fairness demands that similar individuals be treated similarly. If two applicants have equivalent profiles, their predicted outcomes should also align.
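
A common way to formalize "similar individuals, similar outcomes" is a Lipschitz-style condition: the gap between two scores should not exceed some constant times the distance between the two profiles. The sketch below assumes Euclidean distance on already-normalized features and a constant of 1.0; both are illustrative choices, and picking a meaningful distance is the hard part in practice.

```python
import math
from itertools import combinations

def euclidean(a, b):
    """Straight-line distance between two feature tuples."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))

def individual_fairness_violations(features, scores, lipschitz=1.0, distance=euclidean):
    """Index pairs whose score gap exceeds lipschitz * feature distance."""
    violations = []
    for i, j in combinations(range(len(features)), 2):
        allowed_gap = lipschitz * distance(features[i], features[j])
        if abs(scores[i] - scores[j]) > allowed_gap:
            violations.append((i, j))
    return violations

# Hypothetical applicants as (normalized income, normalized debt ratio).
applicants = [(0.80, 0.30), (0.80, 0.31), (0.20, 0.90)]
scores = [0.90, 0.40, 0.35]

print(individual_fairness_violations(applicants, scores))  # [(0, 1)]
```

Applicants 0 and 1 have nearly identical profiles but very different scores, so that pair is flagged for review.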

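Each definition has trade-offs. Improving one kind of fairness can reduce another. In particular, when the underlying rate of the outcome differs between groups, an imperfect model generally cannot equalize both the reliability of its predictions and its error rates across groups at the same time. That makes it essential for organizations to decide which type best aligns with their ethical goals.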

Sources of Bias in Machine Learning

Bias is the enemy of fairness, and it can enter a model at multiple stages—often subtly. Recognizing where it arises is the first step toward correction.

1. Data Collection Bias

Data mirrors human behavior, which can be flawed. Skewed samples, missing groups, or underrepresented demographics can create biased datasets that lead to unequal predictions.

2. Label Bias

Labels—the “ground truth” used for training—can carry human subjectivity. For instance, crime prediction datasets often rely on historical arrest records, which may already be influenced by biased policing practices.

3. Feature Selection Bias

Choosing which data features to include in a model can inadvertently introduce bias. Some variables might act as proxies for protected attributes. For example, ZIP codes can indirectly reveal race or income.
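
A quick, admittedly crude way to screen for proxies is to ask how much better one can guess the protected attribute once the feature is known. The sketch below compares a "majority guess" baseline with a guess made per feature value; the function name and data are hypothetical.

```python
from collections import Counter, defaultdict

def proxy_strength(feature_values, protected_values):
    """Return (baseline accuracy, accuracy when guessing per feature value).

    A large jump from the first number to the second suggests the feature
    carries information about the protected attribute.
    """
    n = len(protected_values)
    baseline = Counter(protected_values).most_common(1)[0][1] / n
    by_value = defaultdict(Counter)
    for feature, protected in zip(feature_values, protected_values):
        by_value[feature][protected] += 1
    # Within each feature value, guess the most common protected group.
    correct = sum(counts.most_common(1)[0][1] for counts in by_value.values())
    return baseline, correct / n

# Hypothetical toy data: ZIP code and a protected group label.
zips = ["10001", "10001", "10001", "20002", "20002", "20002"]
group = ["A", "A", "B", "B", "B", "B"]

baseline, informed = proxy_strength(zips, group)
print(round(baseline, 3), round(informed, 3))
```

In this toy data, knowing the ZIP code raises guessing accuracy from about two thirds to about five sixths, which would be a reason to look more closely at that feature.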

4. Algorithmic Bias

Even neutral data can be processed unfairly if algorithms amplify correlations that reflect existing inequalities. Optimization for accuracy alone can worsen bias by prioritizing majority patterns over minority ones.

5. Deployment Bias

Fairness doesn’t stop at training. A model may perform well in testing but fail in real-world use if conditions differ. Continuous monitoring after deployment is essential to maintain fairness.
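
As one possible shape for such monitoring, the sketch below keeps a sliding window of recent decisions and raises a flag when the gap in approval rates between groups exceeds a limit. The class name, window size, and limit are assumptions made for illustration.

```python
from collections import deque

class FairnessMonitor:
    """Tracks the selection-rate gap between groups over recent decisions."""

    def __init__(self, window=1000, max_gap=0.1):
        self.records = deque(maxlen=window)  # oldest decisions drop off automatically
        self.max_gap = max_gap

    def record(self, prediction, group):
        self.records.append((prediction, group))

    def selection_gap(self):
        totals, positives = {}, {}
        for pred, group in self.records:
            totals[group] = totals.get(group, 0) + 1
            positives[group] = positives.get(group, 0) + pred
        rates = [positives[g] / totals[g] for g in totals]
        # With fewer than two groups in the window there is nothing to compare.
        return max(rates) - min(rates) if len(rates) > 1 else 0.0

    def alert(self):
        return self.selection_gap() > self.max_gap

# Hypothetical stream of live decisions: (prediction, group).
monitor = FairnessMonitor(window=4, max_gap=0.2)
for pred, group in [(1, "A"), (1, "B"), (1, "A"), (1, "B")]:
    monitor.record(pred, group)
print(monitor.alert())  # False: both groups approved at the same rate

for pred, group in [(0, "B"), (0, "B")]:
    monitor.record(pred, group)
print(monitor.alert())  # True: recent decisions now favor group A
```

The same pattern could track other metrics, such as true positive rates, once ground-truth outcomes become available.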

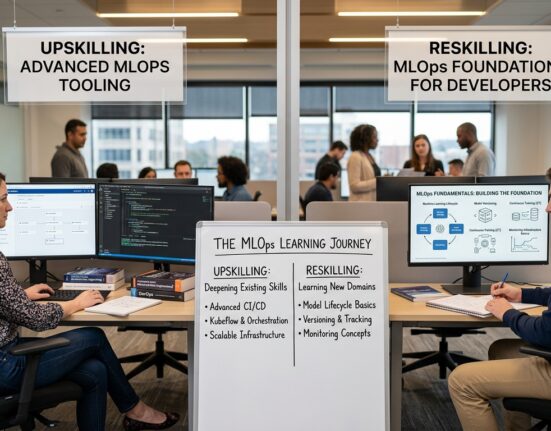

Strategies to Ensure Fairness in Machine Learning

Building fairness into machine learning isn’t a single action—it’s a continuous commitment involving design, training, testing, and monitoring. Here’s how developers and data scientists can make fairness a tangible goal.

1. Collect Representative and Inclusive Data

Fairness begins with the dataset. Strive for balanced representation across all relevant groups. If certain demographics are underrepresented, use data augmentation or targeted sampling to fill gaps. Diverse data helps models learn equitable patterns.

2. Apply Preprocessing Techniques

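Before training, preprocessing can detect and correct imbalances. Reweighing assigns a weight to each training sample so that, in the weighted data, group membership and outcome label are no longer correlated, while resampling over- or undersamples records so that groups and outcomes are more evenly represented. These methods bring the dataset closer to the chosen fairness criteria.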
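
A common formulation of reweighing gives each sample the weight P(group) × P(label) / P(group, label), so that rare group and label combinations count for more. The sketch below is a minimal version of that idea with hypothetical data, not a drop-in replacement for a library implementation.

```python
from collections import Counter

def reweighing_weights(groups, labels):
    """Weight each sample by P(group) * P(label) / P(group, label)."""
    n = len(labels)
    group_counts = Counter(groups)
    label_counts = Counter(labels)
    joint_counts = Counter(zip(groups, labels))
    return [
        (group_counts[g] * label_counts[y]) / (n * joint_counts[(g, y)])
        for g, y in zip(groups, labels)
    ]

# Hypothetical training data: group A has mostly positive labels, B mostly negative.
groups = ["A", "A", "A", "A", "B", "B", "B", "B"]
labels = [1, 1, 1, 0, 1, 0, 0, 0]

weights = reweighing_weights(groups, labels)
print([round(w, 3) for w in weights])

def weighted_positive_rate(group):
    """Share of a group's total weight that sits on positive labels."""
    total = sum(w for w, g in zip(weights, groups) if g == group)
    positive = sum(w for w, g, y in zip(weights, groups, labels) if g == group and y == 1)
    return positive / total

print(round(weighted_positive_rate("A"), 3), round(weighted_positive_rate("B"), 3))
```

After weighting, both groups have the same weighted share of positive labels, which is what a learner that accepts sample weights would then see during training.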

3. Use Fairness-Aware Algorithms

Researchers have developed algorithms designed with fairness constraints. Examples include:

- Adversarial Debiasing: Trains a model to make accurate predictions while a secondary model (the adversary) tries to infer protected attributes from its outputs; the main model is penalized whenever the adversary succeeds.

- Fair Representation Learning: Encodes data so that sensitive information is minimized during decision-making.

These algorithms blend accuracy and fairness through optimization techniques that penalize discriminatory outcomes.
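
The idea behind adversarial debiasing is often written as a two-player objective. The notation here is an illustrative assumption rather than the formulation of any specific library: θ denotes the predictor's parameters, φ the adversary's parameters, and λ ≥ 0 controls how strongly fairness is weighted against accuracy.

```latex
\min_{\theta} \; \max_{\phi} \;\;
\mathcal{L}_{\text{pred}}(\theta) \;-\; \lambda \, \mathcal{L}_{\text{adv}}(\theta, \phi)
```

The adversary chooses φ to make its own loss small, meaning it gets better at recovering the protected attribute. The predictor chooses θ to keep its prediction loss small while making the adversary's loss large. Setting λ to 0 recovers ordinary training.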

4. Monitor Metrics for Fairness

Just as accuracy has metrics like precision and recall, fairness also has quantitative measures. Developers should evaluate models using:

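- Demographic parity difference (also called statistical parity difference): the gap in positive-outcome rates between groups

- Equal opportunity difference: the gap in true positive rates between groups

- Disparate impact ratio: the ratio of positive-outcome rates between groups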

Tracking these metrics ensures that fairness is measurable, not just theoretical.
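
As an example of one such measure, the sketch below computes a disparate impact ratio: the positive-outcome rate of one group divided by that of a reference group. A widely used rule of thumb, the four-fifths rule from US employment guidelines, treats ratios below 0.8 as a warning sign. The function names, group labels, and data are hypothetical.

```python
def selection_rate(y_pred, groups, group):
    """Share of positive predictions within one group."""
    members = [p for p, g in zip(y_pred, groups) if g == group]
    if not members:
        raise ValueError(f"no records for group {group!r}")
    return sum(members) / len(members)

def disparate_impact_ratio(y_pred, groups, unprivileged, privileged):
    """Selection rate of the unprivileged group divided by the privileged group's."""
    privileged_rate = selection_rate(y_pred, groups, privileged)
    if privileged_rate == 0:
        raise ValueError("privileged group has no positive outcomes")
    return selection_rate(y_pred, groups, unprivileged) / privileged_rate

# Hypothetical toy data: group A approved 4 of 5 times, group B 2 of 5 times.
y_pred = [1, 1, 1, 0, 1, 1, 0, 0, 1, 0]
groups = ["A"] * 5 + ["B"] * 5

ratio = disparate_impact_ratio(y_pred, groups, unprivileged="B", privileged="A")
print(ratio)        # 0.5
print(ratio < 0.8)  # True: below the four-fifths rule of thumb
```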

5. Post-Processing Adjustments

Sometimes, fairness corrections are applied after model training. Post-processing methods adjust predictions to reduce disparities. For instance, adjusting thresholds separately for different groups can equalize outcomes without retraining the model.
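
The threshold idea can be sketched as follows. The helper names, the scores, and the 50% target rate are assumptions made for illustration; real systems would choose targets based on the fairness criterion they are aiming for.

```python
def threshold_for_rate(scores, target_rate):
    """Score cut-off that selects roughly target_rate of the given scores.

    Ties at the cut-off can select slightly more than the target.
    """
    ranked = sorted(scores, reverse=True)
    k = max(1, round(target_rate * len(ranked)))
    return ranked[k - 1]

def apply_group_thresholds(scores, groups, thresholds):
    """Turn scores into 0/1 decisions using each group's own threshold."""
    return [int(score >= thresholds[group]) for score, group in zip(scores, groups)]

# Hypothetical model scores for two groups of four applicants each.
scores = [0.9, 0.7, 0.6, 0.3, 0.65, 0.55, 0.4, 0.2]
groups = ["A"] * 4 + ["B"] * 4

# One shared threshold approves 3 of 4 in group A but only 1 of 4 in group B.
single = apply_group_thresholds(scores, groups, {"A": 0.6, "B": 0.6})
print(single)      # [1, 1, 1, 0, 1, 0, 0, 0]

# Per-group thresholds chosen so that about half of each group is approved.
thresholds = {
    g: threshold_for_rate([s for s, grp in zip(scores, groups) if grp == g], 0.5)
    for g in sorted(set(groups))
}
adjusted = apply_group_thresholds(scores, groups, thresholds)
print(thresholds)  # {'A': 0.7, 'B': 0.55}
print(adjusted)    # [1, 1, 0, 0, 1, 1, 0, 0]
```

The underlying model and its scores are untouched; only the decision rule applied to those scores changes.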

6. Involve Diverse Human Oversight

Human review helps detect fairness issues that metrics might miss. Including diverse perspectives—especially from affected communities—can reveal blind spots in design and testing.

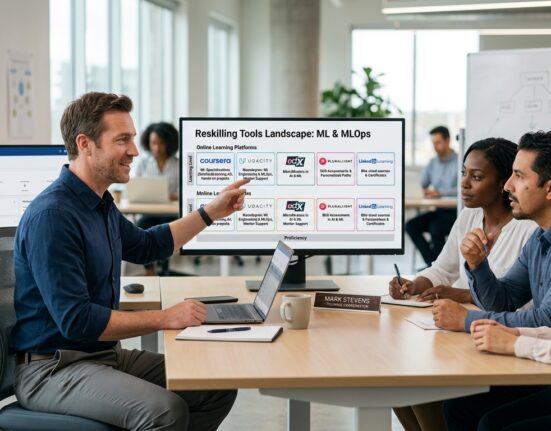

The Role of Explainability in Fairness

Transparency and fairness go hand in hand. If a machine learning model’s decisions can’t be explained, it’s nearly impossible to ensure fairness. Explainable AI (XAI) provides insight into why a model makes certain predictions, allowing developers and users to spot potential bias.

Techniques like SHAP (SHapley Additive exPlanations) or LIME (Local Interpretable Model-agnostic Explanations) help identify which features most influence decisions. For example, if a credit scoring model relies heavily on ZIP code, explainability tools can surface that dependence so it can be reviewed as a possible proxy for a protected attribute.
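
SHAP and LIME are separate libraries with their own APIs. As a dependency-free stand-in, the sketch below uses a simpler and cruder technique: shuffle one feature at a time and measure how much the model's output moves. The toy model, its coefficients, and the data are all hypothetical.

```python
import random

def permutation_sensitivity(model, rows, feature_index, trials=20, seed=0):
    """Mean absolute change in model output when one feature column is shuffled."""
    rng = random.Random(seed)
    baseline = [model(row) for row in rows]
    total = 0.0
    for _ in range(trials):
        column = [row[feature_index] for row in rows]
        rng.shuffle(column)
        for row, new_value, base in zip(rows, column, baseline):
            shuffled = list(row)
            shuffled[feature_index] = new_value
            total += abs(model(shuffled) - base)
    return total / (trials * len(rows))

def toy_credit_model(row):
    """Hypothetical scorer that leans heavily on a ZIP-derived risk value."""
    income, zip_risk, _tenure = row  # tenure is deliberately ignored
    return 0.2 * income - 0.7 * zip_risk

# Hypothetical applicants: (normalized income, ZIP-derived risk, years of tenure).
rows = [(0.9, 0.1, 3), (0.5, 0.8, 1), (0.4, 0.2, 7), (0.7, 0.9, 2)]

for name, index in [("income", 0), ("zip_risk", 1), ("tenure", 2)]:
    print(name, round(permutation_sensitivity(toy_credit_model, rows, index), 3))
```

A feature the model ignores scores exactly zero, while the ZIP-derived value dominates, which is the kind of signal that would prompt a closer fairness review.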

Moreover, explainability builds trust. When stakeholders understand how and why an algorithm behaves, they are more likely to view it as fair and reliable.

Ethical and Regulatory Perspectives

Beyond technical challenges, fairness is deeply rooted in ethics and law. Regulations worldwide are catching up with the speed of AI innovation.

1. Legal Frameworks

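- GDPR (General Data Protection Regulation) emphasizes transparency and data protection, and restricts decisions based solely on automated processing. It is often described as providing a right to explanation, although the scope of that right is debated.

- EU AI Act, adopted in 2024, applies risk-based regulation to ensure fairness and accountability in high-impact AI systems.

- US Blueprint for an AI Bill of Rights, a non-binding White House framework published in 2022, outlines principles for preventing algorithmic discrimination and ensuring human oversight.

Together, these laws and frameworks signal that fairness is increasingly a legal expectation as well as an ethical one.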

2. Ethical AI Principles

Companies are also adopting internal AI ethics policies that prioritize fairness, transparency, and accountability. These frameworks guide model development and deployment in line with human values.

Challenges in Measuring and Enforcing Fairness

Despite progress, ensuring fairness remains complex. Different fairness definitions can conflict—optimizing for one can harm another. Moreover, real-world trade-offs between accuracy, interpretability, and fairness must be managed carefully.

Another challenge is context. Fairness depends on societal norms, and what’s fair in one domain may not be in another. A medical diagnosis model may justify stricter criteria than a marketing recommendation engine.

Ultimately, fairness isn’t just a mathematical property—it’s a moral and social judgment. Technology must adapt to human values, not the other way around.

The Future of Fair Machine Learning

Fairness in machine learning is evolving rapidly. Future systems may incorporate dynamic fairness, adjusting decisions over time as data and social norms change. Collaboration between data scientists, ethicists, and policymakers will shape this future.

Furthermore, AI auditing and bias detection tools are becoming mainstream, empowering organizations to test fairness regularly. Educational programs are also emphasizing responsible AI, so that the next generation of engineers views fairness as foundational rather than optional.

The ultimate goal? Machine learning that doesn’t just reflect society—but improves it.

Conclusion

Fairness in machine learning isn’t a feature; it’s a philosophy. By addressing bias, ensuring transparency, and designing for inclusivity, we can create algorithms that serve everyone justly. The journey toward fair AI is ongoing—but with awareness, accountability, and innovation, it’s a path worth pursuing.

FAQ

1. What does fairness mean in machine learning?

Fairness means ensuring that algorithmic decisions do not unjustly favor or disadvantage individuals or groups, especially based on protected characteristics like gender, race, or age.

2. Why is fairness important in AI systems?

Fairness builds trust, prevents discrimination, and ensures ethical AI adoption across sectors such as healthcare, finance, and employment.

3. How can we measure fairness in algorithms?

Fairness can be measured using metrics like demographic parity difference, equal opportunity difference, or disparate impact ratio.

4. What causes bias in machine learning?

Bias often originates from unbalanced data, subjective labels, or algorithmic design choices that unintentionally amplify societal inequalities.

5. How can fairness be improved in machine learning models?

Developers can improve fairness through inclusive data collection, bias detection tools, fairness-aware algorithms, and continuous auditing during deployment.