Many organizations built early computer vision systems using datasets collected over many years. These datasets often contain images and videos stored in inconsistent formats. As a result, computer vision data standardization has become essential for maintaining accuracy and scalability in legacy AI projects.

Without structured data, machine learning models struggle to produce reliable results. Therefore, companies must organize visual datasets before training or updating computer vision systems.

Moreover, standardized data helps models receive images in a consistent form regardless of which camera, site, or team produced them. This consistency helps teams improve model performance and reduces errors caused by malformed or mislabeled training examples.

Although legacy projects often rely on outdated data structures, organizations can modernize them without replacing entire systems.

In this guide, you will learn how computer vision data standardization helps improve legacy AI workflows, enhances model accuracy, and supports scalable computer vision applications.

Why Legacy Computer Vision Projects Need Standardization

Many early computer vision projects evolved gradually. Teams collected image and video data from different devices, departments, or sources.

Consequently, datasets often contain inconsistent labels, file formats, and metadata structures. These inconsistencies make training machine learning models more difficult.

By implementing computer vision data standardization, organizations create a unified framework for managing visual data.

Standardized datasets improve training efficiency because data loaders receive consistent input structures and no longer need special handling for each source.

Additionally, standardized data improves collaboration between engineering teams. Developers can easily access, understand, and reuse datasets across different projects.

Another advantage involves scalability. Once organizations standardize their datasets, they can expand AI systems more easily.

Therefore, standardization forms the foundation for reliable and scalable computer vision development.

Common Challenges in Legacy Visual Data Systems

While the benefits are clear, organizations often face obstacles when modernizing old computer vision datasets.

Understanding these challenges helps teams implement effective computer vision data standardization strategies.

Inconsistent File Formats

Legacy projects frequently store images and videos in multiple formats.

For example, a single dataset may mix JPEG, PNG, and BMP files with proprietary formats from older imaging systems.

Because machine learning pipelines require consistent input structures, engineers must convert files into standardized formats.

This step ensures compatibility across AI training workflows.
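Before converting anything, it helps to know which formats a dataset actually contains. The following is a minimal sketch, assuming the dataset sits on a local file system. The function names `inventory_formats` and `files_needing_conversion` and the target extension set are hypothetical choices for illustration, not part of any particular tool.

```python
from collections import Counter
from pathlib import Path

# Assumed target formats for illustration; use whatever your pipeline standardizes on.
TARGET_EXTENSIONS = {".jpg", ".jpeg", ".png"}


def inventory_formats(root):
    """Count files under root by lowercase file extension."""
    counts = Counter()
    for path in Path(root).rglob("*"):
        if path.is_file():
            counts[path.suffix.lower()] += 1
    return counts


def files_needing_conversion(root, targets=TARGET_EXTENSIONS):
    """Return sorted paths whose extension is not one of the target formats."""
    return sorted(
        path
        for path in Path(root).rglob("*")
        if path.is_file() and path.suffix.lower() not in targets
    )
```

Note that this sketch trusts file extensions. A legacy archive may contain files whose extension does not match their real content, so a production check would also inspect the file contents.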

Unstructured Metadata

Metadata describes the context of visual data.

Examples include timestamps, camera specifications, and location information.

However, older datasets often store metadata inconsistently or not at all.

Effective computer vision data standardization requires clear metadata structures so AI systems can interpret images correctly.

Labeling and Annotation Issues

Machine learning models rely on labeled datasets to learn patterns.

Unfortunately, legacy datasets often contain inconsistent or incomplete annotations.

Different teams may have labeled objects using different naming conventions.

Consequently, engineers must review and correct these annotations during the standardization process.
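One common way to reconcile naming conventions is an alias table that maps every known variant to a single canonical label. The sketch below assumes labels are plain strings. The alias table and the function names are hypothetical and would normally come from a review of the labels actually present in the dataset.

```python
# Hypothetical alias table for illustration only.
LABEL_ALIASES = {
    "car": "car",
    "automobile": "car",
    "vehicle_car": "car",
    "person": "person",
    "pedestrian": "person",
}


def normalize_label(raw):
    """Map a raw label to its canonical name, or return None if it is unknown."""
    key = raw.strip().lower().replace(" ", "_").replace("-", "_")
    return LABEL_ALIASES.get(key)


def normalize_labels(raw_labels):
    """Split labels into (canonical, unmapped) so unknown names go to human review."""
    canonical, unmapped = [], []
    for raw in raw_labels:
        name = normalize_label(raw)
        if name is None:
            unmapped.append(raw)
        else:
            canonical.append(name)
    return canonical, unmapped
```

Returning unmapped labels separately, rather than guessing, keeps the correction step under human control.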

Storage Fragmentation

Older computer vision systems frequently store datasets across multiple servers or storage devices.

This fragmentation makes data access inefficient.

Standardization initiatives often consolidate datasets into centralized storage systems for easier management.
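When datasets from several servers are consolidated, the same image often turns up more than once. A minimal sketch of duplicate detection by content hash follows, assuming every storage location is reachable as a local or mounted directory. The function names are hypothetical.

```python
import hashlib
from collections import defaultdict
from pathlib import Path


def sha256_of_file(path, chunk_size=1 << 20):
    """Hash a file in chunks so large videos do not need to fit in memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def find_duplicates(roots):
    """Group files from several storage roots by content hash.

    Returns only the groups that contain more than one file.
    """
    by_hash = defaultdict(list)
    for root in roots:
        for path in sorted(Path(root).rglob("*")):
            if path.is_file():
                by_hash[sha256_of_file(path)].append(path)
    return {h: paths for h, paths in by_hash.items() if len(paths) > 1}
```

This only finds byte-identical copies. The same picture saved again in a different format or at a different quality would hash differently and would need a separate, similarity-based check.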

Core Elements of Data Standardization

Organizations must implement several practices to achieve successful computer vision data standardization.

These practices ensure visual datasets remain structured, accessible, and scalable.

Unified Data Formats

Standardizing file formats simplifies machine learning workflows.

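For example, many organizations convert image datasets to JPEG or PNG. JPEG is lossy and produces smaller files, while PNG is lossless, so the choice depends on whether pixel-exact fidelity matters for the task.

Similarly, video datasets often use container formats such as MP4 or AVI. Because a container can hold streams encoded with different codecs, a video standard should specify the codec as well as the container.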

By enforcing consistent formats, engineers simplify data ingestion and model training processes.

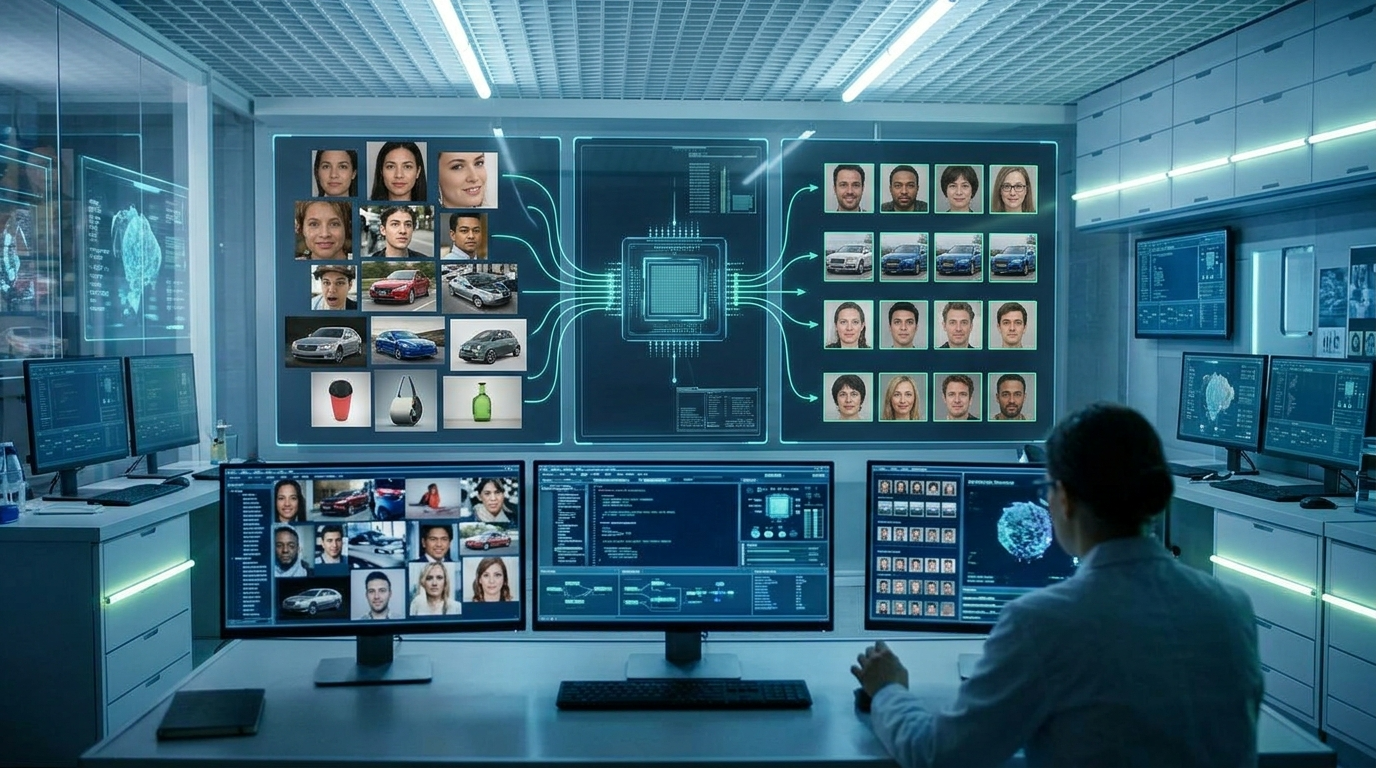

Consistent Annotation Frameworks

Annotation frameworks define how objects are represented in labeled datasets.

Common representations include bounding boxes, segmentation masks, and keypoints.

Clear labeling standards improve model training accuracy.

Therefore, annotation consistency plays a major role in effective computer vision data standardization.
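Bounding boxes are a typical source of inconsistency because different conventions encode the same rectangle differently. Pascal VOC stores pixel corners, COCO stores the top-left corner plus width and height in pixels, and YOLO stores a center point plus size normalized by the image dimensions. The sketch below converts between these three conventions. The function names are hypothetical.

```python
def corners_to_xywh(x_min, y_min, x_max, y_max):
    """Corner box (Pascal VOC style) -> top-left plus size (COCO style), in pixels."""
    if x_max <= x_min or y_max <= y_min:
        raise ValueError("box must have positive width and height")
    return (x_min, y_min, x_max - x_min, y_max - y_min)


def xywh_to_normalized_center(x, y, w, h, image_width, image_height):
    """Top-left plus size in pixels -> normalized center plus size (YOLO style)."""
    return (
        (x + w / 2) / image_width,
        (y + h / 2) / image_height,
        w / image_width,
        h / image_height,
    )
```

Whichever convention a team picks, recording it explicitly alongside the dataset prevents boxes from being silently misread by the next pipeline that consumes them.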

Structured Metadata Systems

Metadata helps AI systems interpret visual information.

For example, metadata may include camera angle, lighting conditions, or environmental variables.

Structured metadata systems ensure every dataset includes consistent contextual information.

This consistency improves the reliability of machine learning models.
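A structured metadata system usually starts with an explicit schema and a validation step. The following is a minimal sketch using only the standard library. The field names, the allowed lighting values, and the rule that timestamps without an offset are treated as UTC are all assumptions made for illustration.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Optional

# Assumed controlled vocabulary for illustration.
ALLOWED_LIGHTING = {"daylight", "low_light", "artificial"}


@dataclass(frozen=True)
class ImageMetadata:
    image_id: str
    captured_at: datetime  # always stored as timezone-aware UTC
    camera_model: Optional[str] = None
    lighting: Optional[str] = None


def parse_metadata(record):
    """Validate a raw dict and return ImageMetadata, raising ValueError on bad input."""
    if not record.get("image_id"):
        raise ValueError("image_id is required")
    try:
        captured = datetime.fromisoformat(record["captured_at"])
    except (KeyError, TypeError, ValueError) as exc:
        raise ValueError("captured_at must be an ISO 8601 timestamp") from exc
    if captured.tzinfo is None:
        # Assumption for this sketch: legacy timestamps without an offset are UTC.
        captured = captured.replace(tzinfo=timezone.utc)
    captured = captured.astimezone(timezone.utc)
    lighting = record.get("lighting")
    if lighting is not None and lighting not in ALLOWED_LIGHTING:
        raise ValueError(f"unknown lighting value: {lighting!r}")
    return ImageMetadata(record["image_id"], captured, record.get("camera_model"), lighting)
```

Rejecting unknown values at ingestion time, instead of storing them as free text, is what keeps the metadata consistent as new sources are added.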

Version Control for Datasets

Version control systems track changes in datasets over time.

These systems allow teams to update datasets without losing historical records.

Consequently, version control improves collaboration and data management in long-term projects.
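Dedicated dataset versioning tools exist, but the underlying idea can be illustrated with a manifest that maps each file path to a hash of its contents. The sketch below derives a single version identifier from such a manifest and reports what changed between two versions. The manifest layout and the function names are hypothetical.

```python
import hashlib
import json


def dataset_fingerprint(manifest):
    """Hash a manifest (relative file path -> content hash) into one version ID."""
    # Sorting keys makes the fingerprint independent of insertion order.
    canonical = json.dumps(manifest, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def diff_manifests(old, new):
    """Report which paths were added, removed, or changed between two manifests."""
    return {
        "added": sorted(set(new) - set(old)),
        "removed": sorted(set(old) - set(new)),
        "changed": sorted(p for p in set(old) & set(new) if old[p] != new[p]),
    }
```

Storing the fingerprint with each trained model makes it possible to tell later exactly which version of the dataset the model was trained on.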

Tools for Managing Visual Data Standardization

Several tools support the process of organizing and managing large visual datasets.

These tools simplify computer vision data standardization workflows.

Data Annotation Platforms

Annotation platforms help teams label images and videos consistently.

Many tools include collaboration features that allow multiple contributors to review annotations.

Both open-source and enterprise labeling software are available.

Because these platforms can enforce annotation standards, such as a fixed label vocabulary, they improve dataset quality.

Data Pipeline Automation

Automation tools manage the movement and transformation of data within AI pipelines.

These systems convert file formats, validate metadata, and organize datasets automatically.

Automation significantly reduces manual work in computer vision data standardization projects.
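The validation part of such a pipeline can be sketched as a list of small check functions that run over every record and produce a report. This is a minimal illustration, assuming each record is a dictionary with `file`, `label`, and `captured_at` keys. Those keys, the check functions, and the allowed formats are hypothetical.

```python
import os

# Assumed target formats for illustration.
ALLOWED_FORMATS = {".jpg", ".jpeg", ".png"}


def check_has_label(record):
    return None if record.get("label") else "missing label"


def check_known_format(record):
    suffix = os.path.splitext(record.get("file", ""))[1].lower()
    return None if suffix in ALLOWED_FORMATS else f"unexpected format: {suffix or 'none'}"


def check_has_timestamp(record):
    return None if record.get("captured_at") else "missing captured_at"


CHECKS = [check_has_label, check_known_format, check_has_timestamp]


def validate_records(records, checks=CHECKS):
    """Run every check on every record.

    Returns {record index: [problem, ...]} containing only records that failed.
    """
    report = {}
    for index, record in enumerate(records):
        problems = [msg for msg in (check(record) for check in checks) if msg]
        if problems:
            report[index] = problems
    return report
```

Keeping each rule in its own function makes it straightforward to add checks as the data standard evolves without touching the loop that runs them.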

Cloud Storage Systems

Cloud platforms offer scalable storage solutions for large datasets.

These systems provide centralized repositories that support data sharing and collaboration.

Additionally, cloud environments allow teams to process large video datasets efficiently.

Data Governance Platforms

Data governance tools enforce policies related to data usage, quality, and security.

These systems help organizations maintain consistent data standards across departments.

As a result, governance platforms support long-term computer vision data standardization initiatives.

Benefits of Standardizing Computer Vision Data

Organizations that implement computer vision data standardization gain several strategic advantages.

These benefits improve both machine learning performance and operational efficiency.

Improved Model Accuracy

Machine learning models perform best when training data follows consistent patterns.

Standardized datasets reduce the label noise and format inconsistencies that degrade what models learn.

Consequently, models produce more reliable predictions.

Faster Training Workflows

Clean datasets shorten the overall model training workflow.

Engineers spend less time correcting data issues and more time optimizing algorithms.

Therefore, teams can develop computer vision solutions more quickly.

Enhanced Collaboration

Standardized data structures allow teams to share datasets easily.

Developers, data scientists, and analysts can work together more efficiently.

Clear data standards improve communication across departments.

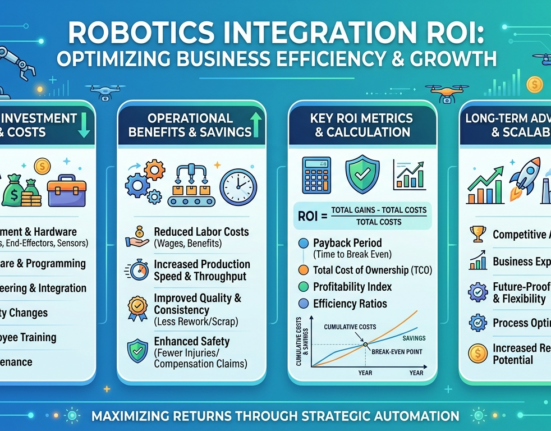

Scalable AI Infrastructure

Standardization supports scalable machine learning infrastructure.

Organizations can expand datasets and integrate new data sources without disrupting existing workflows.

As a result, AI systems become easier to maintain and upgrade.

Future Trends in Visual Data Management

Computer vision technology continues to evolve rapidly. Consequently, new approaches to computer vision data standardization are emerging.

One major trend involves automated data labeling powered by artificial intelligence.

AI-assisted annotation tools can label objects within images automatically. These tools reduce manual labeling effort and can improve dataset consistency, although their output typically still needs human review.

Another development involves synthetic data generation.

Organizations create artificial images using simulation software. These datasets help train models when real-world data is limited.

Additionally, edge computing is becoming more common in computer vision systems.

Instead of sending data to centralized servers, devices process images locally. This approach reduces latency and the amount of data sent over the network.

Cloud-based data management platforms are also evolving.

These platforms integrate dataset storage, labeling, and model training within a single environment.

As these technologies mature, visual data management will become more efficient and scalable.

Conclusion

Legacy computer vision systems often rely on large datasets collected over many years. While these datasets contain valuable information, they frequently suffer from inconsistent formats and labeling structures.

By implementing computer vision data standardization, organizations can transform outdated datasets into reliable AI resources.

Standardized visual data improves machine learning accuracy, simplifies training workflows, and enhances collaboration between teams.

Furthermore, structured datasets support scalable AI systems that can adapt to new technologies and applications.

Although standardization requires careful planning, the long-term benefits are substantial.

Organizations that invest in data quality today will build stronger computer vision systems for the future.

FAQ

1. Why do computer vision systems require standardized datasets?

Standardized datasets ensure machine learning models receive consistent input data, which improves accuracy and training efficiency.

2. What types of metadata are useful for visual AI datasets?

Metadata may include camera settings, timestamps, location details, lighting conditions, and environmental information.

3. Can automation help organize large image datasets?

Yes. Automated data pipelines can convert file formats, validate metadata, and organize datasets for machine learning workflows.

4. What tools help label images for machine learning?

Annotation platforms allow teams to create bounding boxes, segmentation masks, and labels for training computer vision models.

5. How does structured data improve computer vision performance?

Structured datasets reduce inconsistencies that confuse machine learning algorithms, leading to more reliable predictions.