Artificial intelligence now supports decisions in many industries. Organizations use automated systems to analyze data, predict outcomes, and guide complex processes. However, relying entirely on algorithms creates risks. Human oversight of AI decisions helps ensure that technology operates responsibly and fairly.

AI models can process large datasets quickly. Nevertheless, machines lack human judgment and ethical awareness. Therefore, organizations must combine automation with careful supervision.

Human oversight ensures that AI systems remain transparent, accountable, and aligned with ethical standards. Furthermore, oversight helps detect bias, prevent errors, and maintain trust in automated decision-making systems.

This article explains why human supervision remains essential in modern AI environments. It also explores how organizations integrate oversight into responsible artificial intelligence strategies.

Why AI Systems Still Need Human Supervision

Artificial intelligence has improved significantly in recent years. Algorithms now support tasks such as medical diagnostics, financial forecasting, and fraud detection. Despite these advancements, automated systems still face limitations.

First, AI models rely on historical data. If that data contains bias or inaccuracies, the system may produce flawed outcomes.

Second, algorithms cannot always understand context. Human situations often involve ethical or social factors that machines cannot fully interpret.

Third, unexpected edge cases may appear during real-world deployment. Without supervision, systems may respond incorrectly.

Because of these risks, human oversight of AI decisions remains essential. Human experts review results, verify conclusions, and intervene when necessary.

Moreover, oversight ensures that organizations maintain control over critical decisions rather than delegating authority entirely to machines.

Understanding the Limits of Automated Decision Making

Artificial intelligence excels at pattern recognition and statistical analysis. However, complex decision environments often require judgment beyond data patterns.

Machines cannot interpret values such as fairness, empathy, or social responsibility.

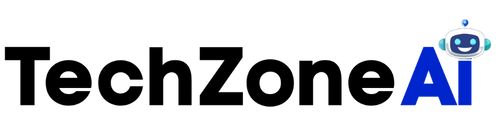

For example, an automated hiring system might prioritize efficiency. Yet hiring decisions also involve cultural fit, personal experience, and ethical considerations.

Similarly, healthcare algorithms can analyze medical images quickly. However, doctors must still evaluate patient history and treatment implications.

Human oversight of AI decisions bridges this gap between automated analysis and responsible decision making.

Human experts evaluate whether AI recommendations align with ethical guidelines and real-world context.

Consequently, organizations achieve better outcomes when they combine machine efficiency with human insight.

Key Roles of Human Oversight in AI Systems

Human supervision plays several important roles in artificial intelligence environments.

Oversight ensures reliability, fairness, and accountability across automated processes.

Monitoring Algorithm Performance

A model's performance can degrade over time as the data it sees in production drifts away from the data it was trained on.

Human reviewers monitor system outputs and detect unusual patterns.

If the system begins producing inaccurate predictions, experts can investigate the cause.

This monitoring process supports reliable human oversight of AI decisions across long-term deployments.
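One way to make this kind of monitoring concrete is to track accuracy over a rolling window of human-verified predictions and flag the model when it falls too far below a baseline. The sketch below is a minimal illustration, not a production monitor. The class name, the baseline value, the window size, and the allowed drop are all assumptions chosen for the example.

```python
from collections import deque


class AccuracyMonitor:
    """Tracks accuracy over the most recent human-verified predictions."""

    def __init__(self, baseline_accuracy, window_size=100, max_drop=0.05):
        self.baseline_accuracy = baseline_accuracy
        self.max_drop = max_drop
        # Only the newest `window_size` outcomes are kept.
        self.outcomes = deque(maxlen=window_size)

    def record(self, predicted, actual):
        """Store whether a prediction matched the human-verified label."""
        self.outcomes.append(predicted == actual)

    def rolling_accuracy(self):
        """Accuracy over the current window, or None if nothing is recorded."""
        if not self.outcomes:
            return None
        return sum(self.outcomes) / len(self.outcomes)

    def needs_review(self):
        """True when accuracy has fallen more than `max_drop` below baseline."""
        accuracy = self.rolling_accuracy()
        if accuracy is None:
            return False
        return accuracy < self.baseline_accuracy - self.max_drop


# Hypothetical values: 7 correct predictions followed by 3 incorrect ones.
monitor = AccuracyMonitor(baseline_accuracy=0.90, window_size=10, max_drop=0.05)
for predicted, actual in [(1, 1)] * 7 + [(1, 0)] * 3:
    monitor.record(predicted, actual)

print(monitor.rolling_accuracy())  # 0.7
print(monitor.needs_review())      # True
```

When `needs_review()` returns True, the monitor only raises the question. Investigating the cause is still the reviewers' job.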

Correcting Model Errors

Even well-trained models occasionally produce incorrect outputs.

Human reviewers identify these mistakes and adjust the system accordingly.

Sometimes corrections involve retraining models with updated data.

Other times teams refine model parameters or decision thresholds.

Through this process, oversight improves both accuracy and reliability.
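As a rough illustration of refining a decision threshold, the sketch below picks the lowest threshold that reaches a target precision on a sample of human-verified cases. The function names, the scores, the labels, and the candidate thresholds are hypothetical. A real team would choose its metric and candidates to fit its own system.

```python
def precision_at(threshold, scores, labels):
    """Precision of 'score >= threshold' against human-verified labels."""
    flagged = [label for score, label in zip(scores, labels) if score >= threshold]
    if not flagged:
        return None
    return sum(flagged) / len(flagged)


def lowest_threshold_meeting(target_precision, scores, labels, candidates):
    """Return the smallest candidate threshold that reaches the target precision."""
    for threshold in sorted(candidates):
        precision = precision_at(threshold, scores, labels)
        if precision is not None and precision >= target_precision:
            return threshold
    return None


# Hypothetical reviewed sample: model scores with labels confirmed by reviewers.
scores = [0.95, 0.90, 0.80, 0.70, 0.60, 0.40]
labels = [1, 1, 1, 0, 1, 0]

threshold = lowest_threshold_meeting(0.9, scores, labels, [0.5, 0.65, 0.75, 0.85])
print(threshold)  # 0.75
```

Choosing the lowest qualifying threshold keeps as many true cases as possible while still meeting the precision target. If no candidate qualifies, the function returns None, which may mean retraining is needed rather than a threshold change.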

Ensuring Ethical Compliance

Ethical concerns remain central to AI deployment.

Algorithms may unintentionally reinforce social bias or discrimination.

Human oversight ensures that automated outcomes comply with ethical standards.

Organizations can review decisions for fairness and transparency before implementation.

As a result, human oversight of AI decisions helps protect individuals and communities from unfair automated outcomes.

How Oversight Reduces Bias in AI Systems

Bias in artificial intelligence remains one of the most serious concerns in modern technology.

Machine learning models learn patterns from historical data. If the dataset contains biased information, the algorithm may reproduce those patterns.

Human supervision plays a critical role in identifying and correcting these issues.

Auditing Training Data

Experts review training datasets to identify potential imbalances.

For example, hiring datasets might contain gender or racial disparities.

Data audits help teams adjust training data before models learn problematic patterns.

Therefore, human oversight of AI decisions helps reduce bias at the earliest stages of development.
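A data audit can start with something as simple as counting how each group is represented and how often it receives a positive label. The sketch below assumes records are plain dictionaries. The field names `gender` and `hired` and the sample rows are invented for illustration.

```python
from collections import Counter


def audit_groups(records, group_key, label_key):
    """Summarize each group's share of the data and its positive-label rate."""
    counts = Counter(record[group_key] for record in records)
    positives = Counter(
        record[group_key] for record in records if record[label_key] == 1
    )
    total = len(records)
    return {
        group: {
            "share": counts[group] / total,
            "positive_rate": positives[group] / counts[group],
        }
        for group in counts
    }


# Hypothetical hiring records; field names and values are illustrative only.
records = [
    {"gender": "F", "hired": 1},
    {"gender": "F", "hired": 0},
    {"gender": "M", "hired": 1},
    {"gender": "M", "hired": 1},
    {"gender": "M", "hired": 1},
    {"gender": "M", "hired": 1},
    {"gender": "M", "hired": 0},
    {"gender": "M", "hired": 0},
]

summary = audit_groups(records, "gender", "hired")
for group, stats in summary.items():
    print(group, stats)
```

A summary like this does not prove bias on its own. It gives reviewers a starting point for deciding whether the data should be rebalanced or examined more closely.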

Evaluating Model Outputs

Even balanced datasets may produce biased outcomes due to algorithm design.

Human analysts review predictions and examine patterns across demographic groups.

If disparities appear, teams can investigate the cause.

This evaluation ensures models operate fairly across diverse populations.
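One simple way to examine predictions across groups is to compare selection rates, meaning the share of positive predictions each group receives. The sketch below is a minimal example with made-up predictions and group labels. The 0.8 cutoff is an assumption that echoes the four-fifths rule of thumb sometimes used in employment contexts, and it should not be read as a universal standard.

```python
def selection_rates(predictions, groups):
    """Fraction of positive predictions for each group."""
    totals, positives = {}, {}
    for prediction, group in zip(predictions, groups):
        totals[group] = totals.get(group, 0) + 1
        positives[group] = positives.get(group, 0) + (1 if prediction == 1 else 0)
    return {group: positives[group] / totals[group] for group in totals}


def disparity_ratio(rates):
    """Lowest selection rate divided by the highest (1.0 means parity)."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # no group received a positive prediction
    return min(rates.values()) / highest


# Hypothetical model outputs for two demographic groups.
predictions = [1, 1, 1, 0, 1, 0, 0, 0]
groups = ["A", "A", "A", "A", "B", "B", "B", "B"]

rates = selection_rates(predictions, groups)
ratio = disparity_ratio(rates)
print(rates)        # {'A': 0.75, 'B': 0.25}
print(ratio < 0.8)  # True: flag for human investigation
```

A low ratio is a signal to investigate, not a verdict. Analysts still need to determine whether the gap reflects the data, the model design, or a legitimate difference.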

Implementing Fairness Controls

Developers can integrate fairness constraints into machine learning models.

Human oversight ensures these controls remain effective.

Continuous evaluation helps maintain balanced decision outcomes as new data emerges.

Consequently, oversight strengthens ethical AI deployment.

Industries Where Human Oversight Is Critical

Human supervision becomes especially important in high-impact industries.

These sectors involve decisions that directly affect people’s lives.

Healthcare and Medical AI

Medical AI systems assist doctors in diagnosing diseases and recommending treatments.

Although algorithms analyze medical data efficiently, healthcare decisions remain complex.

Doctors must evaluate patient history, symptoms, and potential risks.

Human oversight of AI decisions ensures that medical recommendations remain safe and personalized.

Healthcare professionals confirm that AI suggestions align with clinical knowledge.

Financial Services

Banks use AI to evaluate loan applications and detect fraud.

These systems analyze credit history, financial behavior, and transaction patterns.

However, financial decisions affect individuals and businesses significantly.

Human reviewers verify whether automated conclusions remain fair and accurate.

Oversight also helps financial institutions meet regulatory requirements.

Legal and Government Systems

Governments increasingly use AI for policy analysis and risk assessment.

Legal systems may use predictive tools to support case evaluations.

However, legal decisions require careful consideration of rights and fairness.

Human oversight of AI decisions ensures that automated tools support rather than replace legal judgment.

Methods for Implementing Effective Oversight

Organizations must design structured oversight processes.

Simply reviewing occasional results is not enough.

Instead, oversight should integrate directly into AI workflows.

Human-in-the-Loop Systems

Human-in-the-loop models require human approval before final decisions occur.

For example, AI may generate recommendations while humans confirm the outcome.

This approach balances efficiency with responsibility.

Human oversight of AI decisions becomes part of the system design rather than an afterthought.
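The approval step in a human-in-the-loop design can be sketched as a function that refuses to produce a final decision until a reviewer has responded. Everything below is hypothetical: the `Recommendation` and `Decision` types, the `decide` function, and the stand-in reviewer are assumptions made for illustration, and a real system would collect the reviewer's choice through an interface rather than a function.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Recommendation:
    case_id: str
    action: str
    confidence: float


@dataclass
class Decision:
    case_id: str
    action: str
    approved_by: str


def decide(
    recommendation: Recommendation,
    reviewer_name: str,
    review: Callable[[Recommendation], Optional[str]],
) -> Optional[Decision]:
    """Finalize a decision only after a human reviewer responds.

    `review` returns the action the reviewer accepts: the recommended one,
    a different one, or None to reject the recommendation outright.
    """
    chosen_action = review(recommendation)
    if chosen_action is None:
        return None
    return Decision(recommendation.case_id, chosen_action, reviewer_name)


def cautious_reviewer(rec: Recommendation) -> Optional[str]:
    # Stand-in for a real review step: accept confident suggestions,
    # otherwise replace the action with an escalation.
    return rec.action if rec.confidence >= 0.9 else "escalate"


rec = Recommendation("case-17", "approve_loan", 0.72)
decision = decide(rec, "reviewer_a", cautious_reviewer)
print(decision)
```

Recording `approved_by` on every decision ties each outcome to a named person, which supports accountability when decisions are reviewed later.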

Human-on-the-Loop Monitoring

In some systems, humans supervise automated processes continuously.

They monitor system behavior and intervene when anomalies appear.

This method works well for high-speed systems such as financial trading or cybersecurity monitoring.

Human operators maintain control even when automation performs most tasks.
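A human-on-the-loop arrangement can be sketched as a pipeline that acts on its own but alerts an operator on anomalies and pauses itself after too many of them. The class below is a simplified illustration. Its name, the anomaly rule, and the limit of two anomalies are assumptions, and the alert is just a list append standing in for a real notification.

```python
class SupervisedPipeline:
    """Runs automatically but alerts a human and pauses on repeated anomalies."""

    def __init__(self, act, is_anomalous, alert, max_anomalies=3):
        self.act = act
        self.is_anomalous = is_anomalous
        self.alert = alert
        self.max_anomalies = max_anomalies
        self.anomaly_count = 0
        self.paused = False

    def process(self, event):
        """Handle one event; returns True if the automated action ran."""
        if self.paused:
            return False
        if self.is_anomalous(event):
            self.anomaly_count += 1
            self.alert(event)
            if self.anomaly_count >= self.max_anomalies:
                self.paused = True  # wait for a human to resume
            return False
        self.act(event)
        return True

    def resume(self):
        """Called by the human operator after investigating."""
        self.anomaly_count = 0
        self.paused = False


executed, alerts = [], []
pipeline = SupervisedPipeline(
    act=executed.append,
    is_anomalous=lambda amount: amount > 10_000,  # hypothetical anomaly rule
    alert=alerts.append,
    max_anomalies=2,
)
for amount in [120, 50_000, 300, 75_000, 90]:
    pipeline.process(amount)

print(executed)         # [120, 300]
print(alerts)           # [50000, 75000]
print(pipeline.paused)  # True
```

The last event, 90, is not processed because the pipeline is already paused. Only an explicit `resume()` from the operator restarts automation.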

Regular Model Audits

Periodic audits evaluate algorithm behavior over time.

Auditors review datasets, model performance, and decision outcomes.

Regular evaluation ensures models remain aligned with ethical and regulatory standards.

Audits strengthen long-term accountability in automated decision environments.

Challenges in Maintaining Effective Oversight

While oversight provides many benefits, implementing it effectively can be challenging.

Organizations must balance efficiency with supervision.

Scalability Issues

AI systems often operate at large scale.

Reviewing every automated decision may not be practical.

Organizations therefore design selective review processes.

For instance, humans may review high-risk decisions while automation handles routine cases.

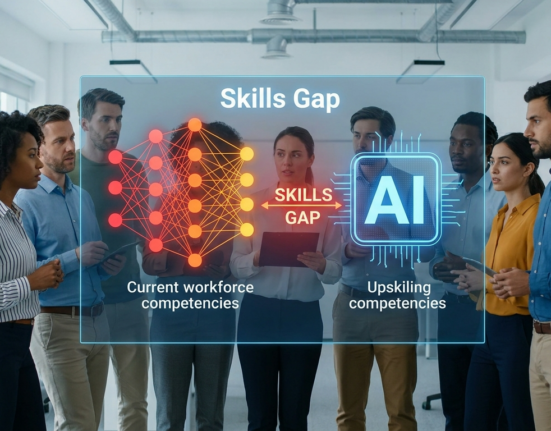
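The selective review described above can be sketched as a routing rule that sends risky or uncertain cases to a person and lets the rest proceed automatically. The field names `risk_score` and `confidence` and both cutoff values are assumptions for the example. In practice an organization would set them according to its own risk policy.

```python
def route(decision, risk_threshold=0.7, confidence_floor=0.8):
    """Return 'human_review' for risky or uncertain cases, else 'automated'."""
    if decision["risk_score"] >= risk_threshold:
        return "human_review"
    if decision["confidence"] < confidence_floor:
        return "human_review"
    return "automated"


# Hypothetical decisions produced by an automated system.
decisions = [
    {"id": 1, "risk_score": 0.2, "confidence": 0.95},  # routine case
    {"id": 2, "risk_score": 0.9, "confidence": 0.99},  # high risk
    {"id": 3, "risk_score": 0.3, "confidence": 0.55},  # model is unsure
]

for decision in decisions:
    print(decision["id"], route(decision))
```

Routing on both risk and confidence means a case reaches a reviewer either because the stakes are high or because the model itself is unsure.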

Skill Requirements

Effective oversight requires skilled professionals.

Reviewers must understand both technology and ethical considerations.

Training programs help employees develop these capabilities.

As AI adoption increases, demand for AI governance experts continues to grow.

Operational Costs

Oversight processes require time and resources.

However, the cost of ignoring oversight can be far greater.

Incorrect decisions may lead to legal issues, reputational damage, or financial loss.

Therefore, investing in human oversight of AI decisions protects organizations from serious risks.

Best Practices for Responsible AI Governance

Organizations can follow several strategies to strengthen oversight systems.

These practices ensure responsible use of artificial intelligence technologies.

Create Clear Accountability Structures

Define who is responsible for reviewing AI decisions.

Clear accountability improves governance and prevents oversight gaps.

Organizations should assign roles for monitoring, auditing, and policy enforcement.

Develop Transparent Documentation

Documentation explains how AI systems operate.

Teams should record data sources, training processes, and decision logic.

Transparent documentation helps reviewers understand model behavior quickly.
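Documentation is easier to keep complete when it has a fixed structure. As a loose sketch, the record below captures the three items mentioned above and reports which ones are still empty. The `ModelRecord` class, the model name, and the file name are hypothetical and do not follow any particular documentation standard.

```python
import json
from dataclasses import dataclass, field, asdict


@dataclass
class ModelRecord:
    name: str
    data_sources: list = field(default_factory=list)
    training_process: str = ""
    decision_logic: str = ""

    def missing_fields(self):
        """Names of documentation fields that are still empty."""
        return [key for key, value in asdict(self).items() if not value]


record = ModelRecord(
    name="loan-screening-v2",                # hypothetical model name
    data_sources=["applications_2023.csv"],  # hypothetical data file
    training_process="Gradient-boosted trees, retrained quarterly.",
)

print(record.missing_fields())  # ['decision_logic']
print(json.dumps(asdict(record), indent=2))
```

A check like `missing_fields()` could run before deployment so that undocumented models are caught early.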

Encourage Interdisciplinary Collaboration

AI governance requires collaboration between engineers, ethicists, and domain experts.

Each group contributes unique insights.

Together they ensure systems operate responsibly.

Through collaboration, human oversight of AI decisions remains balanced and effective.

Implement Continuous Monitoring

Model behavior can shift over time as the data it receives changes.

Continuous monitoring helps detect shifts in behavior.

Teams can respond quickly if systems begin producing unexpected results.

Regular monitoring ensures automated systems remain reliable.
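Shifts in behavior can sometimes be spotted even before verified outcomes are available, for example by comparing how often the system predicts a positive result now versus during a reference period. The sketch below is deliberately simple. The function names, the sample predictions, and the 0.15 alert level are assumptions chosen for illustration.

```python
def positive_rate(predictions):
    """Share of predictions equal to 1."""
    return sum(predictions) / len(predictions)


def rate_shift(reference, recent):
    """Absolute change in the share of positive predictions."""
    return abs(positive_rate(recent) - positive_rate(reference))


# Hypothetical binary predictions from two time periods.
reference = [1, 0, 0, 0, 1, 0, 0, 0, 0, 0]  # 20% positive
recent = [1, 1, 0, 1, 0, 1, 0, 1, 0, 0]     # 50% positive

shift = rate_shift(reference, recent)
print(shift > 0.15)  # True: worth a closer look by the team
```

A change in the prediction rate does not say whether the model or the world has changed. It only tells the team where to look first.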

The Future of Human-AI Collaboration

Artificial intelligence will continue transforming industries.

Automation will handle more tasks, analyze more data, and support complex decisions.

However, human judgment will remain essential.

Human oversight of AI decisions ensures that technology supports human values rather than replacing them.

Future systems will likely emphasize collaboration between humans and machines.

AI will provide insights and predictions, while humans interpret results and make final judgments.

This partnership creates more reliable and ethical decision systems.

By combining computational power with human understanding, organizations achieve better outcomes and maintain public trust.

Conclusion

Artificial intelligence has become a powerful tool for decision support across industries. However, automation alone cannot guarantee fairness, accuracy, or accountability.

Human oversight of AI decisions provides the critical balance between machine efficiency and human judgment. Oversight helps detect bias, correct errors, and ensure ethical outcomes.

Organizations benefit from stronger governance, improved transparency, and greater public trust. Moreover, supervised AI systems reduce risks associated with fully automated decision making.

As artificial intelligence continues to evolve, human supervision will remain a cornerstone of responsible technology deployment. When organizations combine advanced algorithms with thoughtful oversight, they create decision systems that are both powerful and trustworthy.

FAQ

1. Why do AI systems require human supervision?

AI systems rely on data patterns and statistical models. Humans provide judgment, context, and ethical evaluation that algorithms cannot fully understand.

2. What is a human-in-the-loop AI system?

A human-in-the-loop system requires human approval before final decisions occur. The AI generates recommendations, but humans confirm or modify the outcome.

3. Can artificial intelligence operate without human monitoring?

Some automated systems run independently for short periods. However, long-term deployment requires monitoring to detect errors, bias, or unexpected behavior.

4. Which industries rely most on supervised AI systems?

Healthcare, finance, legal services, and government agencies rely heavily on supervised AI because decisions in these fields affect people’s lives directly.

5. How can organizations improve AI governance?

Organizations can improve governance by monitoring models continuously, auditing datasets, documenting systems clearly, and involving experts from multiple disciplines.