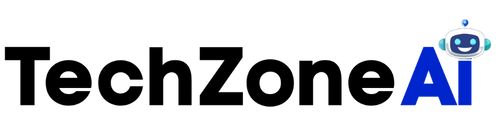

Artificial intelligence now shapes decisions in finance, healthcare, hiring, and security. However, many algorithms still operate as black boxes. As a result, organizations struggle to understand how automated systems reach conclusions. Explainable AI (XAI) helps address this challenge by making machine learning models transparent and interpretable, which in turn supports unbiased decisions.

Transparency in AI improves fairness and accountability. When developers understand how algorithms operate, they can detect bias and prevent discrimination. Furthermore, organizations gain stronger public trust when they can explain automated outcomes clearly.

This article explores how explainable artificial intelligence improves decision fairness. It also explains the methods, benefits, and practical applications that help ensure responsible AI systems.

Why Transparency Matters in Artificial Intelligence

Artificial intelligence models process large datasets to identify patterns. These systems often outperform humans in speed and efficiency. However, complex models sometimes produce decisions that are difficult to interpret.

When organizations cannot explain AI outputs, several problems arise.

First, hidden bias may affect outcomes. Historical datasets sometimes contain discriminatory patterns. If models learn from these datasets, they may reproduce unfair decisions.

Second, regulators increasingly require transparency in automated systems. Industries such as healthcare and finance must justify algorithmic decisions.

Third, a lack of transparency reduces trust among users and stakeholders.

Because of these issues, developers increasingly prioritize explainability as a route to unbiased decisions in modern machine learning systems. Clear explanations allow organizations to detect unfair outcomes and correct them early.

Furthermore, transparent models improve collaboration between data scientists, regulators, and decision makers.

Understanding Explainable Artificial Intelligence

Explainable artificial intelligence focuses on making algorithms easier to interpret. Instead of producing opaque results, explainable models provide insight into how they reach their predictions.

In practice, explainability techniques reveal which features influence decisions.

For example, a loan approval model may highlight factors such as income level, credit history, and debt ratio. These explanations help analysts verify whether the decision was reasonable.
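A minimal sketch of that idea, assuming a simple linear scoring model: each factor's contribution is its weight multiplied by how far the applicant's value sits from an average applicant. The weights, feature names, and averages are hypothetical values chosen for illustration, not figures from any real lender.

```python
# Hypothetical linear loan-scoring model: all weights and averages are illustrative.
WEIGHTS = {"income_k": 0.03, "credit_history_years": 0.10, "debt_ratio": -2.0}
BIAS = -1.0
# Hypothetical "average applicant", used as the reference point for explanations.
AVERAGES = {"income_k": 50.0, "credit_history_years": 8.0, "debt_ratio": 0.35}


def score(applicant: dict[str, float]) -> float:
    """Linear score: higher means more likely to be approved."""
    return BIAS + sum(WEIGHTS[name] * applicant[name] for name in WEIGHTS)


def explain(applicant: dict[str, float]) -> list[tuple[str, float]]:
    """Each feature's contribution relative to the average applicant,
    sorted so the most influential factors come first."""
    contributions = [
        (name, WEIGHTS[name] * (applicant[name] - AVERAGES[name])) for name in WEIGHTS
    ]
    return sorted(contributions, key=lambda item: abs(item[1]), reverse=True)


applicant = {"income_k": 42.0, "credit_history_years": 2.0, "debt_ratio": 0.55}
for name, contribution in explain(applicant):
    print(f"{name:>22}: {contribution:+.2f}")
```

For this hypothetical applicant, the short credit history contributes most to the below-average score, followed by the high debt ratio. Because the model is linear, the contributions add up to the gap between this applicant's score and the average applicant's score, which makes them easy for an analyst to check.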

Using explainable AI to support unbiased decisions depends on two key components: interpretability and transparency.

Interpretability allows humans to understand the model’s reasoning. Transparency ensures that decision logic remains visible during evaluation.

Together, these features allow organizations to detect unfair patterns in algorithmic predictions.

Additionally, explainable systems enable continuous monitoring. Teams can track model behavior over time and adjust when bias appears.

Sources of Bias in Machine Learning Models

Bias in artificial intelligence often originates from data or design choices.

Understanding these sources helps developers build fair systems.

Historical Data Bias

Many machine learning systems train on historical records. Unfortunately, historical data sometimes reflects social inequality.

For instance, hiring datasets may contain gender imbalances. If models learn from these datasets, they may favor certain candidates.
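One simple way to surface this kind of imbalance before training is to compare selection rates across groups in the historical labels. The sketch below uses a tiny invented dataset. The ratio of the lowest to the highest selection rate is often compared against the "four-fifths" (0.8) rule of thumb from US employment guidelines; treat that threshold as a screening heuristic, not a legal determination.

```python
from collections import defaultdict

# Invented historical hiring records: (group, was_hired). Illustrative only.
records = [
    ("A", True), ("A", True), ("A", False), ("A", True), ("A", False),
    ("B", False), ("B", True), ("B", False), ("B", False), ("B", False),
]


def selection_rates(rows):
    """Fraction of positive outcomes (hires) per group."""
    hired = defaultdict(int)
    total = defaultdict(int)
    for group, outcome in rows:
        total[group] += 1
        hired[group] += int(outcome)
    return {group: hired[group] / total[group] for group in total}


def disparate_impact_ratio(rates):
    """Lowest group selection rate divided by the highest."""
    return min(rates.values()) / max(rates.values())


rates = selection_rates(records)
ratio = disparate_impact_ratio(rates)
print(rates)            # {'A': 0.6, 'B': 0.2}
print(round(ratio, 2))  # 0.33, well below the 0.8 screening threshold
```

A model trained to reproduce these labels would likely inherit the same gap, which is why checking the data comes before checking the model.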

Explainability tools help reveal how such training data influences predictions.

Feature Selection Bias

Developers choose which variables to include in models. Sometimes these features correlate with protected attributes such as race or gender.

Even indirect correlations can lead to unfair outcomes.

Interpretability tools help analysts identify whether certain variables drive biased results.
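A basic check for proxy variables is to measure how strongly each candidate feature tracks a protected attribute. The sketch below computes the Pearson correlation between each feature and a binary protected attribute on invented data; the feature names and the 0.5 review threshold are hypothetical, and simple correlation only catches linear, single-feature proxies.

```python
from math import sqrt


def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / sqrt(var_x * var_y)


# Invented data: protected attribute encoded as 0/1, plus candidate features.
protected = [0, 0, 0, 0, 1, 1, 1, 1]
features = {
    "zip_code_region": [1, 1, 1, 2, 3, 3, 3, 3],  # hypothetical proxy
    "years_experience": [2, 5, 7, 3, 4, 6, 2, 5],
}

THRESHOLD = 0.5  # hypothetical cut-off for manual review
for name, values in features.items():
    r = pearson(values, protected)
    flag = "REVIEW" if abs(r) >= THRESHOLD else "ok"
    print(f"{name}: r = {r:+.2f} ({flag})")
```

Here the region feature is flagged because it almost perfectly separates the two groups, even though the protected attribute itself is never given to the model.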

Algorithmic Bias

Some algorithms amplify patterns within data. If datasets contain imbalances, the model may exaggerate them.

Explainability techniques enable teams to detect such amplification quickly.

Once identified, developers can adjust model parameters or retrain with balanced data.
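Rebalancing does not always require collecting new data. One established preprocessing technique is reweighing: each training example receives the weight P(group) × P(label) / P(group, label), so that group membership and label look statistically independent in the weighted data. A minimal sketch on invented rows:

```python
from collections import Counter

# Invented training rows: (group, label). Group B has fewer positive labels.
rows = [("A", 1), ("A", 1), ("A", 1), ("A", 0),
        ("B", 1), ("B", 0), ("B", 0), ("B", 0)]


def reweigh(data):
    """Weight = P(group) * P(label) / P(group, label), estimated from counts."""
    n = len(data)
    group_counts = Counter(g for g, _ in data)
    label_counts = Counter(y for _, y in data)
    joint_counts = Counter(data)
    return [
        (group_counts[g] / n) * (label_counts[y] / n) / (joint_counts[(g, y)] / n)
        for g, y in data
    ]


weights = reweigh(rows)
for (group, label), w in zip(rows, weights):
    print(group, label, round(w, 3))
```

Under-represented combinations (positive labels in group B, negative labels in group A) get weight 2.0, and over-represented ones get 2/3, so both groups end up with a weighted positive rate of 0.5. Many training APIs accept such per-example weights, for example the `sample_weight` argument of `fit` in many scikit-learn estimators.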

Techniques Used to Explain AI Decisions

Modern explainability tools help analysts understand model behavior.

Several methods provide insight into how models arrive at their predictions.

Feature Importance Analysis

Feature importance techniques reveal which variables influence predictions.

For example, a model predicting loan risk may rely heavily on credit history and income.

By examining feature importance scores, analysts can determine whether decisions rely on fair attributes.

This analysis supports unbiased decisions by identifying potential sources of bias.
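Feature importance can be estimated in several ways. One model-agnostic approach is permutation importance: shuffle a single feature's column and measure how much the model's error grows. The sketch below implements it from scratch on a hypothetical model and invented data; scikit-learn offers a production version as `sklearn.inspection.permutation_importance`.

```python
import random


def model(row):
    """Hypothetical risk model: strong on feature 0, weak on 1, ignores 2."""
    return 3.0 * row[0] + 0.5 * row[1]


def mse(rows, targets):
    """Mean squared error of the model on the given rows."""
    return sum((model(r) - t) ** 2 for r, t in zip(rows, targets)) / len(rows)


def permutation_importance(rows, targets, n_repeats=20, seed=0):
    """Average increase in MSE when each feature column is shuffled."""
    rng = random.Random(seed)
    baseline = mse(rows, targets)
    importances = []
    for j in range(len(rows[0])):
        increases = []
        for _ in range(n_repeats):
            column = [r[j] for r in rows]
            rng.shuffle(column)
            shuffled = [r[:j] + [v] + r[j + 1:] for r, v in zip(rows, column)]
            increases.append(mse(shuffled, targets) - baseline)
        importances.append(sum(increases) / n_repeats)
    return importances


data_rng = random.Random(42)
X = [[data_rng.random() for _ in range(3)] for _ in range(200)]
y = [model(r) for r in X]  # targets come from the model itself, so baseline MSE is 0
print([round(v, 3) for v in permutation_importance(X, y)])
```

The ignored feature scores exactly zero and the heavily weighted feature scores highest. In a fairness review, a high score for a feature suspected of being a proxy is a signal to investigate further.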

SHAP Values

SHAP (SHapley Additive exPlanations) measures how each feature contributes to an individual prediction, using Shapley values from cooperative game theory.

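The method assigns a numerical value to each input variable for a given prediction. Each value shows how strongly, and in which direction, that feature pushes the prediction away from a baseline, typically the model's average output. Together, the values add up to the difference between the prediction and that baseline.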
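To show what these values mean, here is a minimal sketch that computes exact Shapley values for one prediction by enumerating every subset of features. It assumes that "removing" a feature means replacing it with a fixed baseline value. This is only one of several conventions; the SHAP library typically averages over background data instead. The toy model and inputs are invented.

```python
from itertools import combinations
from math import factorial


def shapley_values(predict, x, baseline):
    """Exact Shapley values for one instance x.

    Features outside a coalition are replaced by their baseline value.
    Cost grows as 2**n model evaluations, so this only suits small n.
    """
    n = len(x)

    def value(coalition):
        # Model output when only features in `coalition` take their real values.
        point = [x[i] if i in coalition else baseline[i] for i in range(n)]
        return predict(point)

    phis = []
    for i in range(n):
        others = [j for j in range(n) if j != i]
        phi = 0.0
        for size in range(n):
            # Shapley weight for coalitions of this size.
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                s = set(subset)
                phi += weight * (value(s | {i}) - value(s))
        phis.append(phi)
    return phis


def toy_model(features):
    """Hypothetical model with an interaction between features 0 and 1."""
    a, b, c = features
    return 2.0 * a + b + a * b - 0.5 * c


x = [1.0, 2.0, 3.0]
baseline = [0.0, 0.0, 0.0]
phis = shapley_values(toy_model, x, baseline)
print([round(p, 3) for p in phis])  # [3.0, 3.0, -1.5]
print(round(sum(phis), 6), toy_model(x) - toy_model(baseline))  # 4.5 4.5
```

Feature 2 enters the model additively, so its value is simply its own effect (−1.5), while the interaction term a·b is split evenly between features 0 and 1. The last line confirms that the three values add up to the prediction minus the baseline output.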

SHAP implementations also provide visual explanations, such as force and waterfall plots, that both data scientists and business leaders can interpret.

Because of this clarity, SHAP plays a major role in using explainable AI to support unbiased decisions.

LIME Interpretability

LIME stands for Local Interpretable Model-Agnostic Explanations.

This technique approximates a complex model locally with a simpler, interpretable model, such as a linear model fitted to perturbed samples around one input.

It focuses on explaining individual predictions rather than the entire system.

As a result, analysts can examine why a particular decision occurred.

These localized insights support fairness audits and improve accountability.
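The core LIME idea can be sketched in a few lines: sample perturbed points around the instance, weight each sample by its proximity, and fit a weighted linear model whose coefficients serve as the local explanation. This from-scratch version is a simplification (the `lime` package adds feature selection, discretization, and other details). The black-box function, noise scale, kernel width, and sample count are all assumptions.

```python
import math
import random


def black_box(x):
    """Hypothetical opaque model: nonlinear in both features."""
    return math.sin(x[0]) + x[1] ** 2


def solve(a, b):
    """Solve a @ w = b by Gauss-Jordan elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(n):
            if r != col:
                factor = m[r][col] / m[col][col]
                m[r] = [v - factor * p for v, p in zip(m[r], m[col])]
    return [m[i][n] / m[i][i] for i in range(n)]


def lime_explain(predict, x, n_samples=500, scale=0.1, kernel_width=0.25, seed=0):
    """Local linear coefficients (one per feature) around instance x."""
    rng = random.Random(seed)
    d = len(x)
    # Accumulate normal equations for weighted least squares with an intercept.
    xtwx = [[0.0] * (d + 1) for _ in range(d + 1)]
    xtwy = [0.0] * (d + 1)
    for _ in range(n_samples):
        z = [xi + rng.gauss(0.0, scale) for xi in x]  # perturbed sample
        dist2 = sum((zi - xi) ** 2 for zi, xi in zip(z, x))
        w = math.exp(-dist2 / kernel_width ** 2)  # closer samples count more
        row = [1.0] + z
        y = predict(z)
        for i in range(d + 1):
            xtwy[i] += w * row[i] * y
            for j in range(d + 1):
                xtwx[i][j] += w * row[i] * row[j]
    coef = solve(xtwx, xtwy)
    return coef[1:]  # drop the intercept


print([round(c, 2) for c in lime_explain(black_box, [0.0, 1.0])])
```

Because sin(x₀) has slope 1 at x₀ = 0 and x₁² has slope 2 at x₁ = 1, the coefficients should come out close to 1 and 2: the surrogate captures the model's behaviour near this one input even though the model itself is nonlinear.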

Benefits of Explainability in AI Systems

Explainability delivers several advantages for organizations using artificial intelligence.

Transparency improves fairness, trust, and regulatory compliance.

Improved Fairness

Explainability helps teams identify unfair patterns in predictions.

Once detected, developers can modify training data or model parameters.

Consequently, explainability reduces the risk of discriminatory outcomes.

Greater Trust from Users

Users often hesitate to trust automated decisions.

However, clear explanations increase confidence in AI systems.

When organizations explain how models reach conclusions, stakeholders feel more comfortable using these technologies.

Trust becomes especially important in healthcare, finance, and government services.

Regulatory Compliance

Many countries now regulate automated decision-making systems.

Regulations often require transparency in algorithmic processes.

Explainable AI helps organizations meet these requirements.

Companies can demonstrate how models operate and verify fairness during audits.

More Effective Model Refinement

Explainability tools also help data scientists refine models.

Understanding how models behave reveals weaknesses and performance gaps.

Teams can adjust feature selection, retrain models, or update algorithms.

These improvements can increase both accuracy and fairness.

Real-World Applications of Explainable AI

Explainability now plays a critical role across multiple industries.

Organizations use transparent AI systems to ensure responsible automation.

Healthcare Diagnostics

Healthcare systems increasingly rely on AI for disease detection.

Doctors must understand why an algorithm recommends a diagnosis.

Explainable AI allows clinicians to review the factors that contributed to a recommendation.

For example, medical imaging systems may highlight regions of interest within scans.

This transparency helps doctors validate results and maintain patient safety.
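Imaging tools produce such highlights in different ways. One simple, model-agnostic method is occlusion sensitivity: blank out one patch of the image at a time and record how much the model's score drops. The sketch below applies it to a tiny invented "scan" and a hypothetical scoring function. Real systems work on full-resolution images and trained networks, and often use gradient-based saliency methods instead.

```python
def lesion_score(image):
    """Hypothetical detector: total brightness of pixels above 0.5."""
    return sum(v for row in image for v in row if v > 0.5)


def occlusion_map(predict, image, patch=1, fill=0.0):
    """Score drop when each patch x patch block is replaced with `fill`."""
    base = predict(image)
    rows, cols = len(image), len(image[0])
    heat = [[0.0] * cols for _ in range(rows)]
    for r0 in range(0, rows, patch):
        for c0 in range(0, cols, patch):
            occluded = [row[:] for row in image]
            block = [(r, c) for r in range(r0, min(r0 + patch, rows))
                     for c in range(c0, min(c0 + patch, cols))]
            for r, c in block:
                occluded[r][c] = fill
            drop = base - predict(occluded)
            for r, c in block:
                heat[r][c] = drop
    return heat


# Invented 4x4 "scan" with a bright region in the centre.
scan = [
    [0.1, 0.1, 0.1, 0.1],
    [0.1, 0.9, 0.8, 0.1],
    [0.1, 0.7, 0.9, 0.1],
    [0.1, 0.1, 0.1, 0.1],
]
for row in occlusion_map(lesion_score, scan):
    print(" ".join(f"{v:.2f}" for v in row))
```

Only the four bright pixels produce a score drop when hidden, so they form the highlighted region a clinician would be shown.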

Financial Lending

Banks often use machine learning models to evaluate loan applications.

Regulators in many jurisdictions require financial institutions to justify lending decisions.

Explainability tools reveal which factors influenced approval or rejection.

Therefore, explainable AI helps keep lending practices fair and transparent.

Hiring and Recruitment

Companies sometimes use AI to screen job applicants.

However, biased hiring algorithms can harm workplace diversity.

Explainability helps HR teams verify whether models rely on fair evaluation criteria.

Organizations can adjust models if they detect discriminatory patterns.

Fraud Detection

Financial institutions also use AI to detect suspicious transactions.

Explainable systems help investigators understand why certain transactions trigger alerts.

Consequently, analysts can quickly confirm whether alerts represent genuine threats.

This process improves both efficiency and fairness in automated monitoring systems.
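For a simple statistical alert rule, the explanation can be as direct as listing which attributes of a transaction deviate most from the customer's usual behaviour. The sketch below raises an alert when any feature's z-score exceeds a threshold and reports the features responsible. The feature names, transaction history, and threshold of 3 are hypothetical, and production fraud models are usually far more complex.

```python
from statistics import mean, stdev

# Hypothetical history of one customer's past transactions.
history = {
    "amount": [42.0, 55.0, 38.0, 60.0, 47.0, 51.0],
    "hour_of_day": [12, 13, 18, 19, 12, 14],
    "km_from_home": [2.0, 5.0, 3.0, 4.0, 2.5, 3.5],
}


def explain_alert(transaction, history, threshold=3.0):
    """Return (is_alert, reasons); reasons lists features with |z| > threshold."""
    reasons = []
    for name, past in history.items():
        z = (transaction[name] - mean(past)) / stdev(past)
        if abs(z) > threshold:
            reasons.append((name, round(z, 1)))
    # Most extreme deviation first, so investigators see the main driver.
    reasons.sort(key=lambda item: abs(item[1]), reverse=True)
    return bool(reasons), reasons


alert, reasons = explain_alert(
    {"amount": 900.0, "hour_of_day": 3, "km_from_home": 3.0}, history
)
print(alert, reasons)
```

The alert comes with its reasons: an unusually large amount and an unusual hour, while the location is normal. An investigator can check those two facts directly instead of guessing why the transaction was flagged.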

Challenges in Implementing Explainable AI

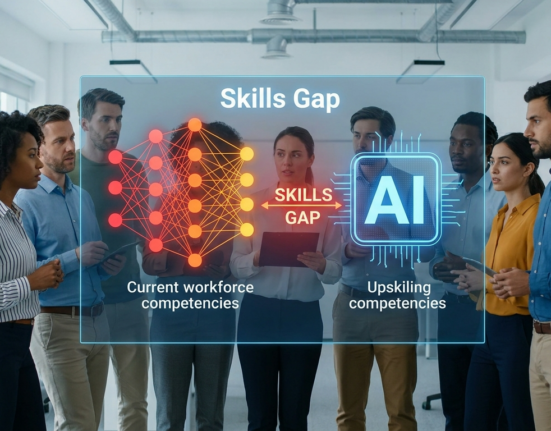

Despite its benefits, explainable AI still faces several challenges.

Complex models sometimes resist easy interpretation.

Accuracy Versus Interpretability

Highly accurate models often rely on deep neural networks.

These networks can contain millions or even billions of parameters.

Unfortunately, interpreting such systems can be difficult.

Organizations must balance model performance with transparency.

Sometimes slightly simpler models provide better explainability.

Computational Overhead

Explainability tools sometimes increase computational requirements.

Techniques such as SHAP can require additional processing time.
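The overhead follows from how Shapley values are defined. For a model with feature set F containing n features, the attribution for feature i averages its marginal contribution over every subset S of the remaining features, where v(S) is the model's output when only the features in S are known:

$$
\phi_i = \sum_{S \subseteq F \setminus \{i\}} \frac{|S|!\,(n - |S| - 1)!}{n!}\,\bigl[v(S \cup \{i\}) - v(S)\bigr]
$$

Computing this exactly means evaluating v on all 2^n feature subsets for each explained prediction, so a model with 20 features already needs 2^20 = 1,048,576 evaluations per prediction. Practical tools therefore approximate the sum, for example by sampling subsets (KernelSHAP) or by exploiting the structure of tree ensembles (TreeSHAP).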

However, advances in software optimization continue to reduce these limitations.

User Understanding

Even when explanations exist, stakeholders must interpret them correctly.

Clear visualizations and documentation help non-technical audiences understand model outputs.

Proper training ensures teams can apply explainability tools effectively.

Despite these challenges, explainability remains essential for responsible AI adoption.

Best Practices for Building Transparent AI Systems

Organizations can follow several best practices when implementing explainable AI.

These strategies help ensure fairness and accountability.

Use Diverse Training Data

Balanced datasets reduce the risk of biased outcomes.

Data should represent diverse populations and scenarios.

Regular dataset reviews help maintain fairness over time.

Monitor Models Continuously

AI systems evolve as data changes.

Therefore, teams should monitor model behavior regularly.

Explainability tools help detect shifts that could introduce bias.
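A common way to quantify such shifts is the population stability index (PSI), which compares how a feature, or the model's scores, is distributed across bins in a reference period versus a recent period. The sketch below is a minimal version. The bin edges, the small epsilon for empty bins, and the frequently quoted rule of thumb that a PSI above roughly 0.25 signals a major shift are conventions, not fixed standards.

```python
import math


def bin_fractions(values, edges):
    """Fraction of values in each bin defined by sorted interior edges."""
    counts = [0] * (len(edges) + 1)
    for v in values:
        index = sum(v >= e for e in edges)  # number of edges at or below v
        counts[index] += 1
    return [c / len(values) for c in counts]


def psi(expected, actual, edges, eps=1e-6):
    """Population stability index between a reference and a recent sample."""
    e_frac = bin_fractions(expected, edges)
    a_frac = bin_fractions(actual, edges)
    total = 0.0
    for e, a in zip(e_frac, a_frac):
        e, a = max(e, eps), max(a, eps)  # avoid log(0) for empty bins
        total += (a - e) * math.log(a / e)
    return total


edges = [0.25, 0.5, 0.75]  # hypothetical score bins
reference = [0.1, 0.2, 0.3, 0.4, 0.6, 0.7, 0.8, 0.9]
recent_same = [0.15, 0.2, 0.35, 0.45, 0.55, 0.7, 0.85, 0.95]
recent_shifted = [0.6, 0.7, 0.8, 0.8, 0.85, 0.9, 0.9, 0.95]
print(round(psi(reference, recent_same, edges), 4))
print(round(psi(reference, recent_shifted, edges), 4))
```

The first recent sample fills the bins in the same proportions as the reference, so its PSI is zero; the second has moved almost entirely into the upper bins and scores far above the 0.25 rule of thumb. A drift alert like this is a cue to rerun fairness checks, since a shifted population can change which features drive decisions.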

Document Model Decisions

Clear documentation improves accountability.

Organizations should record model design choices, training data sources, and evaluation metrics.

Documentation supports compliance and internal audits.
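Such a record can be kept in a structured, machine-readable form alongside the model. The sketch below uses a Python dataclass serialized to JSON. The field names loosely follow the idea of "model cards", and every value shown is a hypothetical placeholder, not documentation of a real model.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class ModelRecord:
    """Minimal documentation record for one trained model version."""
    name: str
    version: str
    design_choices: list[str]
    training_data_sources: list[str]
    evaluation_metrics: dict[str, float]
    known_limitations: list[str] = field(default_factory=list)

    def to_json(self) -> str:
        """Serialize the record so it can be versioned next to the model."""
        return json.dumps(asdict(self), indent=2, sort_keys=True)


# All values below are hypothetical placeholders.
record = ModelRecord(
    name="loan-approval-model",
    version="1.3.0",
    design_choices=["gradient-boosted trees", "protected attributes excluded as inputs"],
    training_data_sources=["applications_2019_2023 (internal, hypothetical)"],
    evaluation_metrics={"auc": 0.81, "disparate_impact_ratio": 0.86},
    known_limitations=["few applicants under 21 in training data"],
)
print(record.to_json())
```

Storing fairness metrics in the same record as accuracy metrics means an auditor sees both side by side for every model version.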

Combine Human Oversight with AI

Human supervision remains essential for responsible automation.

Experts should review critical decisions produced by AI systems.

Collaboration between humans and machines improves reliability and fairness.

Through these practices, organizations can keep their AI systems explainable and their decisions fair across their AI infrastructure.

Conclusion

Artificial intelligence continues to influence critical decisions across industries. However, opaque algorithms can create serious fairness concerns. Without transparency, organizations cannot detect or correct biased outcomes.

Explainable AI provides a powerful solution. By revealing how algorithms reach conclusions, explainability tools improve accountability and trust.

Organizations benefit from improved fairness, stronger regulatory compliance, and better model performance. Furthermore, transparent systems allow developers to monitor and refine models over time.

Although challenges remain, explainable AI continues to evolve rapidly. As technology improves, transparency will become a standard requirement for responsible machine learning systems.

Ultimately, explainability helps ensure that artificial intelligence supports fair and ethical decision-making in the modern digital world.

FAQ

1. What is explainable artificial intelligence?

Explainable artificial intelligence refers to methods that make machine learning decisions understandable. These methods reveal how models evaluate data and generate predictions.

2. Why is transparency important in AI systems?

Transparency helps detect bias and improves accountability. When decision logic becomes visible, organizations can verify fairness and maintain public trust.

3. How can companies reduce bias in machine learning models?

Companies can reduce bias by using diverse training data, monitoring model performance, and applying interpretability tools that reveal how models make predictions.

4. Which industries benefit most from explainable AI?

Healthcare, finance, hiring, and cybersecurity benefit significantly. These sectors require transparent systems because decisions often affect people directly.

5. Are interpretable models always less accurate?

Not necessarily. Some simpler models achieve competitive performance while remaining easier to interpret. In many cases, organizations balance accuracy with transparency.