AI decision-making fairness has become one of the most critical challenges in modern technology. Artificial intelligence now influences who gets hired, approved for loans, flagged for fraud, prioritized for healthcare, or targeted by marketing. These decisions shape lives, often quietly, and at massive scale.

When AI systems are unfair, the harm multiplies quickly. Small biases become systemic. Minor data flaws become widespread discrimination. As a result, fairness is no longer only a philosophical concern. It is an operational requirement.

Ensuring AI decision-making fairness means building systems that treat individuals equitably, explain outcomes clearly, and remain accountable over time. This article explores why fairness matters, how bias emerges, and what organizations must do to create AI systems that deserve trust.

Why AI Decision-Making Fairness Matters Today

Automation amplifies impact. A single model can affect millions of decisions in seconds.

AI decision-making fairness matters because automated systems increasingly replace or guide human judgment. When those systems are biased, harm spreads faster and further than any individual decision-maker could spread it.

Fairness is essential because:

- AI decisions affect real lives and opportunities

- Bias can scale invisibly

- Trust determines adoption and legitimacy

- Regulation increasingly demands accountability

Without fairness, AI systems risk reinforcing inequality rather than reducing it.

Understanding Bias in AI Decision-Making

Bias in AI rarely begins with bad intent. It usually starts with data.

AI models learn from historical information. That information reflects human behavior, social structures, and past decisions. If those patterns include inequality, models reproduce it faithfully.

Common sources of bias include:

- Imbalanced or unrepresentative datasets

- Proxy variables that encode sensitive traits

- Historical decisions shaped by discrimination

- Feedback loops that reinforce outcomes

AI decision-making fairness begins with recognizing bias as a systemic risk, not an edge case.
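
Proxy variables are the least visible item in the list above, so a small check may help. The sketch below is one simple way to test whether a categorical feature reveals a sensitive attribute: it compares how often the attribute can be guessed without the feature against how often it can be guessed with it. The function name `proxy_strength` and the sample ZIP codes and group labels are hypothetical, chosen only for illustration.

```python
from collections import Counter, defaultdict


def proxy_strength(feature_values, sensitive_values):
    """Return (baseline, informed) accuracy of guessing the sensitive attribute.

    baseline: always guess the most common sensitive value overall.
    informed: guess the most common sensitive value within each feature value.
    A large jump from baseline to informed suggests the feature acts as a proxy.
    """
    if not sensitive_values or len(feature_values) != len(sensitive_values):
        raise ValueError("inputs must be non-empty and of equal length")

    n = len(sensitive_values)
    baseline = Counter(sensitive_values).most_common(1)[0][1] / n

    # Count sensitive values separately for each feature value.
    by_value = defaultdict(Counter)
    for feature, sensitive in zip(feature_values, sensitive_values):
        by_value[feature][sensitive] += 1

    correct = sum(c.most_common(1)[0][1] for c in by_value.values())
    return baseline, correct / n


# Hypothetical data: ZIP code as a candidate proxy for group membership.
zip_codes = ["10001", "10001", "10001", "20002", "20002", "20002"]
group = ["x", "x", "y", "y", "y", "y"]

baseline, informed = proxy_strength(zip_codes, group)
print(f"baseline={baseline:.2f} informed={informed:.2f}")
```

This check only covers one categorical feature at a time. Combinations of features can act as proxies even when no single feature does, so it is a starting point rather than a complete audit.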

Problem Framing and Fairness

Fairness enters the system before the first line of code is written.

How a problem is framed determines what the model optimizes. If success is defined only by efficiency or accuracy, fairness is sidelined by default.

Ensuring AI decision-making fairness requires asking early questions such as:

- Who is affected by this decision?

- What harm could result from errors?

- Which outcomes matter beyond accuracy?

- When should human review be required?

Thoughtful framing can prevent much of the bias that would otherwise surface downstream.

Data Practices That Support AI Decision-Making Fairness

Fair systems require fair data.

Data collection, preparation, and documentation shape model behavior. Without careful practices, bias becomes baked in.

Strong data practices include:

- Collecting representative samples

- Auditing datasets for imbalance

- Documenting data sources and limitations

- Managing sensitive attributes carefully

Transparency in data enables meaningful fairness evaluation.
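
Auditing a dataset for imbalance can start with a simple comparison of group shares. The following sketch assumes each record carries a group label and that reference population shares are available from a trusted source. The function name `representation_gaps` and the example numbers are hypothetical.

```python
from collections import Counter


def representation_gaps(sample_groups, reference_shares):
    """Return each group's share in the sample minus its reference share.

    Positive values mean the group is over-represented in the sample,
    negative values mean it is under-represented.
    """
    if not sample_groups:
        raise ValueError("sample_groups must not be empty")

    counts = Counter(sample_groups)
    total = len(sample_groups)
    return {
        group: counts.get(group, 0) / total - share
        for group, share in reference_shares.items()
    }


# Hypothetical example: the sample is 80% group "a", the population is 50/50.
sample = ["a"] * 8 + ["b"] * 2
reference = {"a": 0.5, "b": 0.5}

gaps = representation_gaps(sample, reference)
for name, gap in gaps.items():
    print(f"{name}: {gap:+.2f}")
```

How large a gap is acceptable depends on the use case. Recording the result alongside the dataset documentation keeps the limitation visible to later users.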

Measuring Fairness in AI Decision-Making

Fairness must be measured to be managed.

Accuracy alone does not reveal whether outcomes differ across groups. AI decision-making fairness requires context-specific metrics.

Common approaches include:

- Comparing error rates across demographics

- Measuring disparate impact

- Testing outcomes under simulated scenarios

- Reviewing edge cases manually

Fairness evaluation must continue throughout the system lifecycle.
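
Two of the approaches above, disparate impact and per-group error rates, can be sketched in a few lines. The example assumes binary predictions (1 for a favorable outcome) and a group label per record. All function names and the sample data are illustrative. A ratio below 0.8 is sometimes treated as a warning sign (the "four-fifths" rule of thumb from US employment guidance), though the right threshold depends on context.

```python
from collections import defaultdict


def selection_rates(groups, predictions):
    """Share of favorable predictions (1) within each group."""
    totals = defaultdict(int)
    positives = defaultdict(int)
    for group, pred in zip(groups, predictions):
        totals[group] += 1
        positives[group] += pred
    return {g: positives[g] / totals[g] for g in totals}


def disparate_impact_ratio(groups, predictions):
    """Lowest group selection rate divided by the highest."""
    rates = selection_rates(groups, predictions)
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # no group received a favorable outcome
    return min(rates.values()) / highest


def false_positive_rates(groups, labels, predictions):
    """False positive rate per group: FP / (FP + TN)."""
    false_pos = defaultdict(int)
    negatives = defaultdict(int)
    for group, label, pred in zip(groups, labels, predictions):
        if label == 0:
            negatives[group] += 1
            false_pos[group] += pred
    return {g: false_pos[g] / negatives[g] for g in negatives}


# Hypothetical evaluation data.
groups      = ["a", "a", "a", "a", "b", "b", "b", "b"]
labels      = [1, 0, 1, 0, 1, 0, 0, 0]
predictions = [1, 1, 1, 0, 1, 0, 0, 0]

rates = selection_rates(groups, predictions)            # a: 0.75, b: 0.25
ratio = disparate_impact_ratio(groups, predictions)     # 0.25 / 0.75
fpr = false_positive_rates(groups, labels, predictions) # a: 0.5, b: 0.0
print(rates, round(ratio, 3), fpr)
```

These metrics can disagree with each other, so which one to prioritize is a decision to make for each use case rather than a purely technical choice.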

Transparency and Explainability as Fairness Enablers

Opaque systems undermine fairness.

People affected by AI decisions deserve explanations. Regulators increasingly require them. Explainability supports accountability and correction.

AI decision-making fairness improves when systems provide:

- Interpretable outputs

- Clear decision logic documentation

- User-friendly explanations

- Accessible appeal mechanisms

Transparency allows unfair outcomes to be identified and addressed.

Human Oversight in Fair AI Systems

Automation should not eliminate accountability.

Human oversight plays a critical role in ensuring AI decision-making fairness, especially in high-impact or ambiguous cases.

Human-in-the-loop designs support:

- Review of uncertain decisions

- Escalation of ethical concerns

- Continuous learning from outcomes

- Preservation of responsibility

Shared control reduces blind reliance on automation.
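
A human-in-the-loop design often comes down to a routing rule. The sketch below assumes the model outputs a score between 0 and 1 and sends borderline or high-impact cases to a person. The thresholds, the `high_impact` flag, and the function name `route_decision` are assumptions for illustration, not recommended values.

```python
def route_decision(score, lower=0.4, upper=0.6, high_impact=False):
    """Decide whether a case is automated or escalated to human review.

    Scores inside [lower, upper] are treated as uncertain. High-impact
    cases always go to a person, whatever the score.
    """
    if not 0.0 <= score <= 1.0:
        raise ValueError("score must be between 0 and 1")
    if high_impact or lower <= score <= upper:
        return "human_review"
    return "approve" if score > upper else "decline"


# Hypothetical cases.
print(route_decision(0.92))                    # confident, automated
print(route_decision(0.55))                    # uncertain, escalated
print(route_decision(0.92, high_impact=True))  # always escalated
```

Logging which cases were escalated, and what the reviewer decided, gives the team the outcome data needed for the continuous learning described above.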

Model Design Choices and Fairness Trade-Offs

Model selection affects fairness.

Highly complex models may improve accuracy while reducing interpretability. Simpler models may be easier to audit but less accurate.

Ensuring AI decision-making fairness involves:

- Matching model complexity to risk level

- Stress-testing models for bias

- Avoiding unnecessary complexity

- Validating assumptions regularly

Design choices reflect ethical priorities.

Monitoring Fairness in Production Systems

Fairness is not static.

Data changes. User behavior evolves. Models drift. Bias can reappear even after careful development.

Ongoing fairness monitoring includes:

- Tracking performance across groups

- Detecting data and concept drift

- Reviewing outcomes regularly

- Updating models responsibly

AI decision-making fairness is a continuous commitment.
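
Tracking performance across groups can be automated with a periodic check. This sketch assumes production decisions are logged as `(window, group, outcome)` tuples, where `window` is a time bucket such as a month and `outcome` is 1 for a favorable decision. The record layout, the `max_gap` threshold, and the function name are hypothetical.

```python
from collections import defaultdict


def windows_exceeding_gap(records, max_gap=0.1):
    """Return (window, gap) pairs where group selection rates diverge.

    The gap is the highest group selection rate minus the lowest
    within the same time window.
    """
    totals = defaultdict(lambda: defaultdict(int))
    positives = defaultdict(lambda: defaultdict(int))
    for window, group, outcome in records:
        totals[window][group] += 1
        positives[window][group] += outcome

    flagged = []
    for window in sorted(totals):
        rates = [
            positives[window][g] / totals[window][g] for g in totals[window]
        ]
        gap = max(rates) - min(rates)
        if gap > max_gap:
            flagged.append((window, round(gap, 3)))
    return flagged


# Hypothetical decision log: rates are equal in January, diverge in February.
log = [
    ("2024-01", "a", 1), ("2024-01", "a", 0),
    ("2024-01", "b", 1), ("2024-01", "b", 0),
    ("2024-02", "a", 1), ("2024-02", "a", 1),
    ("2024-02", "b", 0), ("2024-02", "b", 1),
]

flagged = windows_exceeding_gap(log)
print(flagged)
```

A flagged window is a prompt for review, not proof of unfairness. Small groups produce noisy rates, so a real monitor would likely also require a minimum sample size per group.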

Governance and Accountability Structures

Fairness requires ownership.

Without governance, ethical concerns fade under operational pressure. Clear responsibility ensures issues are addressed.

Effective governance supports AI decision-making fairness through:

- Defined ethical guidelines

- Cross-functional oversight committees

- Documented decision processes

- Clear remediation paths

Governance turns values into practice.

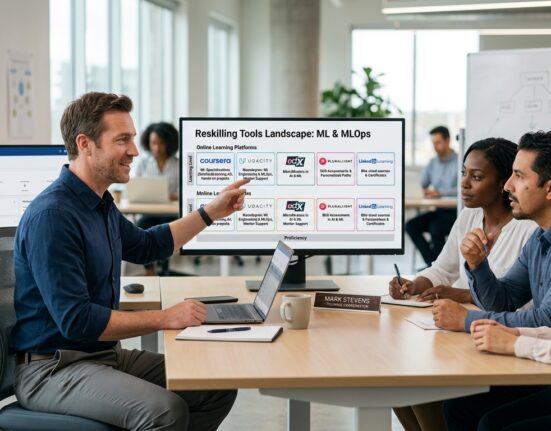

Regulation and Fair AI Decision-Making

Regulation is accelerating globally.

Many AI laws reflect fairness principles directly. Organizations that prioritize fairness are better prepared for compliance.

Aligning with regulation includes:

- Proactive risk assessments

- Transparent documentation

- Ongoing audit readiness

- Consistent monitoring practices

Fairness Across Different AI Use Cases

Healthcare prioritizes safety and equity. Finance emphasizes nondiscrimination. Public sector systems demand accountability. Retail focuses on consent and transparency.

AI decision-making fairness must adapt to industry-specific risks while maintaining core ethical principles.

Context determines how fairness is applied, not whether it matters.

Balancing Innovation and Fairness

Competitive pressure pushes teams to move fast. Fairness asks teams to slow down.

This tension is real but manageable. Ethical safeguards prevent costly rework and reputational damage later.

Balanced approaches include:

- Responsible experimentation

- Gradual deployment

- Early stakeholder engagement

- Continuous evaluation

Fairness enables sustainable innovation rather than blocking it.

The Cost of Ignoring AI Decision-Making Fairness

Ignoring fairness is expensive.

Consequences include legal action, regulatory fines, public backlash, and loss of trust. Recovery can take years.

Organizations that neglect AI decision-making fairness often pay far more than those that invest early in ethical design.

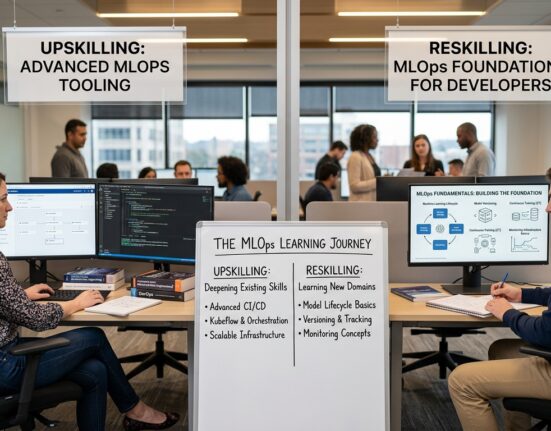

Building a Culture That Supports Fair AI

Fairness cannot be enforced by policy alone.

Teams must feel empowered to question outcomes and raise concerns. Leadership must model ethical behavior.

Cultural support includes:

- Fairness training and awareness

- Psychological safety

- Transparent communication

- Incentives for responsible behavior

Culture sustains fairness under pressure.

AI Decision-Making Fairness as a Strategic Advantage

Trust differentiates organizations.

Companies known for fair AI attract customers, partners, and talent. Trust compounds over time.

AI decision-making fairness delivers:

- Stronger reputation

- Higher adoption rates

- Lower regulatory risk

- Long-term resilience

Fairness becomes a competitive asset.

Conclusion

AI decision-making fairness is essential for building ethical, trustworthy, and sustainable AI systems. By addressing bias early, prioritizing transparency, maintaining human oversight, and embedding accountability, organizations protect both people and value.

As AI continues to influence critical decisions, fairness must remain central. Systems that treat people equitably earn trust, adapt responsibly, and endure. In the future of AI, fairness is not optional. It is foundational.

FAQ

1. What is AI decision-making fairness?

It refers to designing AI systems that treat individuals and groups equitably while avoiding unjust bias.

2. Why is fairness important in AI systems?

Fairness is important because AI decisions scale rapidly and can cause widespread harm if biased.

3. Can AI ever be completely fair?

Perfect fairness is unlikely, but bias can be reduced significantly through careful design and monitoring.

4. How is fairness measured in AI decision-making?

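Fairness is measured through metrics such as error rates across demographic groups and disparate impact, together with scenario testing, manual review of edge cases, and ongoing evaluation.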

5. Who is responsible for ensuring AI fairness?

Responsibility is shared across data scientists, engineers, leadership, and governance teams.