Artificial intelligence increasingly influences decisions across industries. From financial approvals to healthcare diagnostics, automated systems analyze data and provide recommendations. However, as AI systems gain authority, organizations must ensure responsible oversight. Therefore, AI decision-making accountability has become a critical requirement for modern technology governance.

Accountability ensures that organizations understand how AI systems reach conclusions. It also clarifies who remains responsible for decisions influenced by machine learning.

Without clear accountability structures, automated systems could introduce bias, errors, or ethical concerns.

Consequently, organizations must design frameworks that monitor AI behavior, track decision logic, and maintain human oversight.

Moreover, responsible governance builds trust among users, regulators, and stakeholders.

As artificial intelligence continues to expand into critical sectors, AI decision-making accountability plays a vital role in keeping the technology safe, transparent, and beneficial.

Understanding Accountability in Artificial Intelligence

Accountability refers to the responsibility for actions and decisions within a system. In traditional organizations, humans make decisions and accept responsibility for outcomes.

However, AI systems complicate this model because algorithms analyze data and generate automated recommendations.

Therefore, AI decision-making accountability requires clear mechanisms that connect automated outputs to responsible individuals or teams.

These mechanisms often include monitoring tools, governance frameworks, and auditing procedures.

Additionally, accountability involves documenting how models function and why they produce specific results.

Organizations must ensure that automated systems remain explainable and traceable.

By implementing accountability practices, companies help ensure that artificial intelligence operates responsibly within business and social environments, which in turn strengthens trust in AI technologies.

Why Accountability Matters in AI Systems

As organizations rely more heavily on automated systems, the impact of AI-driven decisions increases.

Consequently, AI decision-making accountability ensures that these decisions remain fair, reliable, and transparent.

First, accountability helps identify errors in AI systems.

If a model produces inaccurate results, monitoring mechanisms can help detect the issue early, before it affects a large number of decisions.

Second, accountability prevents ethical risks.

Automated systems sometimes reproduce biases present in their training data. Oversight frameworks help detect and correct these problems.

Third, accountability improves regulatory compliance.

Regulated industries such as finance and healthcare must meet strict governance standards when deploying artificial intelligence.

Finally, accountability strengthens public trust.

Users feel more confident when organizations demonstrate responsible AI management.

Therefore, businesses increasingly prioritize governance frameworks that support transparent AI decision processes.
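The bias check described above can be made concrete with a simple group comparison. The following is a minimal sketch, assuming decisions are available as (group, approved) pairs; the function names `approval_rates` and `parity_gap` and the sample data are hypothetical, and a gap in approval rates is only one of several possible fairness signals, not a complete audit.

```python
from collections import defaultdict

def approval_rates(decisions):
    """Return the share of positive decisions per group.

    `decisions` is an iterable of (group, approved) pairs,
    where `approved` is True or False.
    """
    totals = defaultdict(int)
    approved_counts = defaultdict(int)
    for group, approved in decisions:
        totals[group] += 1
        if approved:
            approved_counts[group] += 1
    return {g: approved_counts[g] / totals[g] for g in totals}

def parity_gap(decisions):
    """Largest difference in approval rate between any two groups."""
    rates = approval_rates(decisions)
    return max(rates.values()) - min(rates.values())

# Hypothetical sample: group A is approved 3 times out of 4, group B once out of 4.
sample = [("A", True), ("A", True), ("A", False), ("A", True),
          ("B", True), ("B", False), ("B", False), ("B", False)]
gap = parity_gap(sample)
```

How large a gap should trigger a review is a policy decision for the organization; the code only makes the number visible.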

Key Principles of Responsible AI Accountability

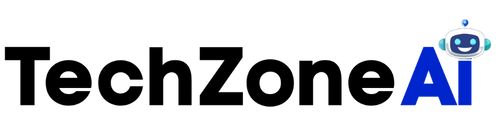

Organizations designing AI decision-making accountability frameworks must follow several important principles.

These principles guide responsible development and deployment of automated systems.

Transparency

Transparency allows stakeholders to understand how AI systems operate.

Developers should document model design, training data sources, and decision logic.

Clear documentation ensures that organizations maintain visibility into automated processes.

Transparency also supports regulatory compliance and internal audits.
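Documentation of this kind is easier to audit when it is stored in a structured form next to the model. Below is a minimal sketch, assuming a small record per model version; the `ModelRecord` class, its fields, and the example values are all hypothetical and would need to match an organization's own documentation policy.

```python
import json
from dataclasses import dataclass, field, asdict

@dataclass
class ModelRecord:
    """Hypothetical documentation record for one model version."""
    name: str
    version: str
    owner: str                      # team accountable for the model
    intended_use: str
    training_data_sources: list = field(default_factory=list)
    decision_logic_summary: str = ""

    def to_json(self) -> str:
        # Sorted keys give a stable text form that is easy to diff in reviews.
        return json.dumps(asdict(self), indent=2, sort_keys=True)

record = ModelRecord(
    name="loan-screening",
    version="1.0.0",
    owner="credit-risk-team",
    intended_use="Rank applications for manual review; not a final decision.",
    training_data_sources=["internal_applications_sample"],
    decision_logic_summary="Scores applications from income and repayment history.",
)
document = record.to_json()
```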

Explainability

Explainability enables organizations to interpret AI outcomes.

Many modern machine learning models are too complex to interpret by inspection. However, explainable AI tools help engineers analyze which inputs drive a model's predictions.

These tools support effective AI decision-making accountability by revealing the reasoning behind automated results.
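One widely used explainability technique is permutation importance: shuffle one input feature at a time and measure how much the model's error grows. The sketch below is a simplified, standard-library version under stated assumptions; `toy_model`, the data, and the function names are hypothetical stand-ins, and production tools typically repeat the shuffle several times and average the result.

```python
import random

def mean_squared_error(model, rows, targets):
    return sum((model(r) - t) ** 2 for r, t in zip(rows, targets)) / len(rows)

def permutation_importance(model, rows, targets, seed=0):
    """Error increase after shuffling each feature column once."""
    rng = random.Random(seed)
    baseline = mean_squared_error(model, rows, targets)
    importances = []
    for j in range(len(rows[0])):
        column = [r[j] for r in rows]
        rng.shuffle(column)
        # Rebuild each row with feature j replaced by its shuffled value.
        shuffled_rows = [r[:j] + (v,) + r[j + 1:] for r, v in zip(rows, column)]
        shuffled_error = mean_squared_error(model, shuffled_rows, targets)
        importances.append(shuffled_error - baseline)
    return importances

def toy_model(row):
    # Hypothetical model: depends on feature 0 only and ignores feature 1.
    return 2.0 * row[0]

rows = [(float(i), float(i % 3)) for i in range(10)]
targets = [2.0 * r[0] for r in rows]
importances = permutation_importance(toy_model, rows, targets)
```

Because `toy_model` never reads feature 1, shuffling that column leaves the error unchanged, while shuffling feature 0 increases it. That contrast is the kind of evidence a reviewer can use to confirm which inputs actually influence a decision.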

Human Oversight

Human supervision remains essential in automated environments.

Although AI systems process data efficiently, humans must evaluate critical decisions.

Organizations often implement human-in-the-loop systems that allow experts to review AI outputs.

This approach ensures responsible decision-making in high-risk applications.
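A human-in-the-loop rule can be as simple as a routing function that decides which outputs a person must review. This is a minimal sketch, assuming each prediction carries a confidence score and a high-risk flag; the `Prediction` class, the `route` function, and the 0.9 threshold are illustrative assumptions rather than a recommended policy.

```python
from dataclasses import dataclass

@dataclass
class Prediction:
    label: str
    confidence: float   # model's own confidence, between 0 and 1
    high_risk: bool     # set by business rules, not by the model

def route(prediction, min_confidence=0.9):
    """Send high-risk or low-confidence outputs to a human reviewer."""
    if prediction.high_risk or prediction.confidence < min_confidence:
        return "human_review"
    return "auto_accept"

routine = route(Prediction("approve", 0.97, high_risk=False))
risky = route(Prediction("approve", 0.97, high_risk=True))
uncertain = route(Prediction("deny", 0.60, high_risk=False))
```

Keeping the high-risk flag outside the model means a confident model cannot bypass review on its own.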

Traceability

Traceability ensures that organizations can track how AI systems generate outcomes.

Logging systems record model inputs, outputs, and operational conditions.

These records enable auditing and investigation when issues arise.

Traceability strengthens accountability by linking decisions to specific system actions.
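The logging described above can be sketched as an append-only record per decision, written as one JSON line each. The example below assumes an in-memory stream in place of a real log store; `log_decision`, `find_decision`, and the field names are hypothetical.

```python
import io
import json
import uuid
from datetime import datetime, timezone

def log_decision(stream, model_version, inputs, output):
    """Append one decision record and return its identifier."""
    record = {
        "decision_id": str(uuid.uuid4()),
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "model_version": model_version,
        "inputs": inputs,
        "output": output,
    }
    stream.write(json.dumps(record) + "\n")
    return record["decision_id"]

def find_decision(stream, decision_id):
    """Scan the log for a decision, as an auditor might during a review."""
    stream.seek(0)
    for line in stream:
        record = json.loads(line)
        if record["decision_id"] == decision_id:
            return record
    return None

log = io.StringIO()  # stand-in for a file or log service
decision_id = log_decision(log, "1.0.0", {"income": 52000}, {"approved": True})
found = find_decision(log, decision_id)
```

Recording the model version alongside inputs and outputs is what lets an investigation tie a disputed outcome to the exact system that produced it.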

Building Organizational Accountability Frameworks

Implementing AI decision-making accountability requires coordinated efforts across technical and management teams.

Organizations must establish policies that define how AI systems operate within governance structures.

First, leadership teams should develop clear AI governance guidelines.

These guidelines outline responsibilities for developers, engineers, and business leaders.

Second, companies must implement monitoring tools that track model performance.

Monitoring systems detect anomalies and performance changes over time.

Third, organizations should conduct regular audits of AI systems.

Audits evaluate data sources, model behavior, and compliance with ethical standards.

Finally, training programs educate employees about responsible AI practices.

These programs help staff understand how automated systems influence decision-making processes.

Through structured governance frameworks, organizations strengthen accountability and reduce operational risks.

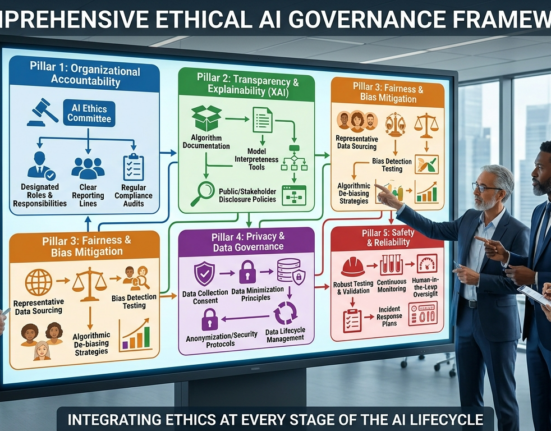

Technologies Supporting AI Accountability

Modern technologies play an important role in implementing AI decision-making accountability across organizations.

These tools help monitor model performance and ensure transparency.

Model Monitoring Platforms

Monitoring platforms track how machine learning models perform in production environments.

These tools analyze prediction accuracy, data drift, and operational metrics.

Continuous monitoring allows engineers to detect issues quickly, which helps organizations keep their AI systems reliable.
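Data drift, mentioned above, is often quantified by comparing the distribution of a feature in production against a reference sample. One common measure is the population stability index (PSI). The sketch below is a simplified version under stated assumptions: the bin edges, the sample values, and the floor applied to empty bins are all illustrative choices.

```python
import math

def bin_shares(values, edges, floor=1e-6):
    """Share of values in each half-open bin [edges[i], edges[i+1]).

    Values outside the edges are not counted. Empty bins are floored
    to avoid taking the logarithm of zero.
    """
    counts = [0] * (len(edges) - 1)
    for v in values:
        for i in range(len(counts)):
            if edges[i] <= v < edges[i + 1]:
                counts[i] += 1
                break
    return [max(c / len(values), floor) for c in counts]

def population_stability_index(reference, current, edges):
    ref = bin_shares(reference, edges)
    cur = bin_shares(current, edges)
    # Each term is non-negative; identical distributions give exactly 0.
    return sum((c - r) * math.log(c / r) for r, c in zip(ref, cur))

edges = [0.0, 0.25, 0.5, 0.75, 1.0]
reference = [0.1, 0.2, 0.3, 0.4, 0.5, 0.6, 0.7, 0.8]
current = [0.6, 0.7, 0.7, 0.8, 0.8, 0.9, 0.9, 0.95]  # shifted toward high values

no_drift = population_stability_index(reference, reference, edges)
drift = population_stability_index(reference, current, edges)
```

The alert threshold for the drift value is a choice each team has to make and document for its own data.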

Explainable AI Tools

Explainable AI technologies analyze model behavior and identify important decision factors.

Visualization tools help engineers understand how models interpret data.

These insights support transparency and strengthen AI decision-making accountability.

Explainability tools also help organizations communicate AI behavior to regulators and stakeholders.

Data Governance Systems

Data governance systems manage the lifecycle of training datasets.

These platforms track data sources, transformations, and quality metrics.

Strong data governance helps ensure that machine learning models are trained on accurate, well-documented data, which in turn supports ethical and accountable AI systems.
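Tracking sources and transformations can be sketched as a lineage list that records a fingerprint of the data before and after each step. This is a minimal illustration, assuming small in-memory datasets that serialize to JSON; `fingerprint`, `apply_step`, and the sample rows are hypothetical.

```python
import hashlib
import json

def fingerprint(rows):
    """SHA-256 of a canonical JSON form of the dataset."""
    canonical = json.dumps(rows, sort_keys=True).encode("utf-8")
    return hashlib.sha256(canonical).hexdigest()

def apply_step(lineage, rows, name, transform):
    """Run one transformation and record what went in and what came out."""
    result = transform(rows)
    lineage.append({
        "step": name,
        "input_sha256": fingerprint(rows),
        "output_sha256": fingerprint(result),
        "rows_in": len(rows),
        "rows_out": len(result),
    })
    return result

raw = [{"age": 34, "income": 52000}, {"age": None, "income": 41000}]
lineage = []
clean = apply_step(
    lineage, raw, "drop_missing_age",
    lambda rows: [r for r in rows if r["age"] is not None],
)
```

With the fingerprints stored, a later audit can confirm that a model was trained on exactly the dataset the lineage describes.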

Audit and Compliance Platforms

Audit platforms document system behavior and maintain records of AI decisions.

These records support regulatory reporting and internal reviews.

Organizations use these tools to verify compliance with governance standards.
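Audit records are more useful when later edits to them can be detected. One way to approach this is a hash chain, where each entry stores a hash that covers the previous entry. The sketch below is an assumption about how such a log could be built with the standard library; it is not a description of any particular audit platform, and the names `append_entry` and `verify_chain` are hypothetical.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder hash that precedes the first entry

def entry_hash(prev_hash, payload):
    body = json.dumps({"prev": prev_hash, "payload": payload}, sort_keys=True)
    return hashlib.sha256(body.encode("utf-8")).hexdigest()

def append_entry(chain, payload):
    prev_hash = chain[-1]["hash"] if chain else GENESIS
    chain.append({
        "prev": prev_hash,
        "payload": payload,
        "hash": entry_hash(prev_hash, payload),
    })

def verify_chain(chain):
    """Recompute every hash; any edited or reordered entry breaks the chain."""
    prev_hash = GENESIS
    for entry in chain:
        expected = entry_hash(prev_hash, entry["payload"])
        if entry["prev"] != prev_hash or entry["hash"] != expected:
            return False
        prev_hash = entry["hash"]
    return True

chain = []
append_entry(chain, {"decision": "approved", "model_version": "1.0.0"})
append_entry(chain, {"decision": "denied", "model_version": "1.0.0"})
intact = verify_chain(chain)

chain[0]["payload"]["decision"] = "denied"  # simulate tampering with a record
tampered_ok = verify_chain(chain)
```

After the simulated edit, verification fails because the stored hash no longer matches the altered payload.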

Challenges in Achieving AI Accountability

Although accountability frameworks provide significant benefits, organizations may face challenges during implementation.

Achieving effective AI decision-making accountability requires technical expertise and organizational commitment.

One major challenge involves model complexity.

Advanced machine learning algorithms often function as black boxes, making explanations difficult.

Another challenge involves data quality.

If training data contains biases or errors, AI systems may produce unfair results.

Organizations must also address operational challenges.

Maintaining monitoring systems and governance processes requires ongoing resources.

Additionally, regulatory requirements continue to evolve.

Companies must adapt governance frameworks to comply with new policies.

Despite these challenges, responsible AI governance remains essential for long-term success.

Industry Applications of AI Accountability

Many industries rely on AI decision-making accountability to ensure responsible automation.

Financial institutions use AI systems to evaluate loan applications and detect fraud.

Accountability frameworks ensure that these decisions remain fair and compliant with regulations.

Healthcare organizations deploy machine learning models to assist medical diagnosis.

Transparent governance helps doctors understand AI recommendations.

Retail companies also use AI systems to personalize customer experiences.

Accountability practices ensure that recommendation algorithms operate ethically.

Additionally, public sector organizations use AI technologies in policy analysis and resource planning.

Governance frameworks maintain transparency in these high-impact applications.

Across industries, responsible AI oversight helps ensure that automation benefits society.

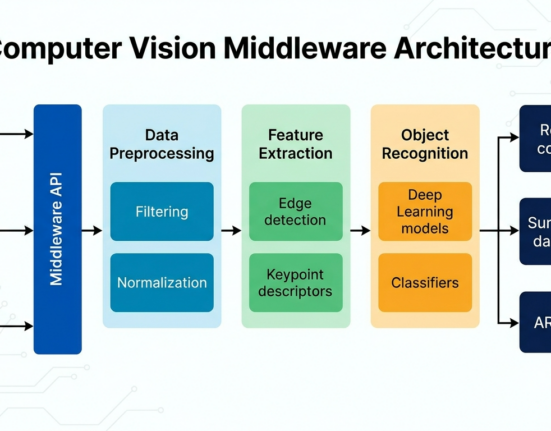

Future Trends in AI Governance

Artificial intelligence governance continues to evolve as technology advances.

Several emerging trends will shape AI decision-making accountability in the coming years.

One important development involves global AI regulations.

Governments worldwide are establishing policies that require transparency and ethical oversight.

Another trend involves automated auditing tools.

These tools analyze AI behavior continuously and identify potential risks.

Additionally, organizations are adopting responsible AI frameworks that integrate governance into development processes.

Under these frameworks, ethical review begins during model creation rather than after deployment.

Finally, interdisciplinary collaboration is increasing.

Experts from technology, law, and ethics work together to design accountable AI systems.

These developments will strengthen governance frameworks for automated decision systems.

Conclusion

Artificial intelligence offers remarkable opportunities to improve efficiency, accuracy, and decision-making across industries. However, as automated systems influence more aspects of society, organizations must ensure responsible oversight.

Through effective AI decision-making accountability, companies maintain transparency, fairness, and trust in automated systems.

Accountability frameworks combine governance policies, monitoring technologies, and human oversight.

These elements ensure that artificial intelligence operates within ethical and regulatory boundaries.

Although implementing accountability requires effort, the benefits are substantial.

Organizations that prioritize responsible AI practices reduce risks and build stronger relationships with customers, regulators, and stakeholders.

As AI technologies continue to evolve, accountability will remain a cornerstone of sustainable and trustworthy artificial intelligence systems.

FAQ

1. Why is accountability important in automated systems?

Accountability ensures that organizations remain responsible for decisions influenced by artificial intelligence technologies.

2. How can companies make AI decisions more transparent?

Organizations use explainable AI tools, documentation, and monitoring systems to clarify how algorithms generate results.

3. What role do humans play in AI governance?

Human oversight ensures that automated recommendations are reviewed before critical decisions are made.

4. Which industries require strong AI governance frameworks?

Finance, healthcare, government, and retail industries often implement strict oversight for automated systems.

5. Can organizations audit artificial intelligence systems?

Yes. Many companies use monitoring and audit platforms to track system behavior and verify compliance with regulations.