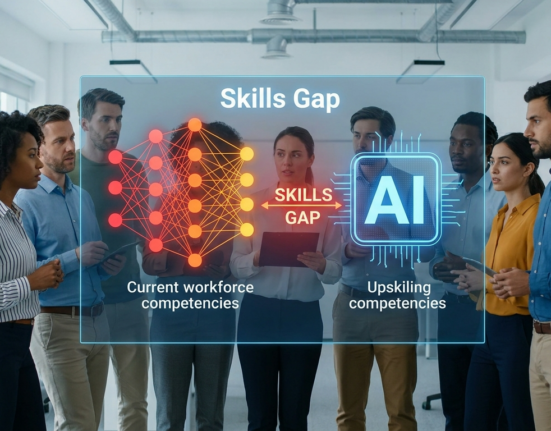

Artificial intelligence increasingly influences decisions in finance, healthcare, hiring, and public services. While these systems promise efficiency and accuracy, they also raise concerns about fairness. Consequently, AI bias detection has become a critical focus for organizations deploying machine learning models.

Algorithms often learn patterns from historical data. However, if that data reflects past discrimination or underrepresents certain groups, models may produce biased outcomes. Therefore, organizations must evaluate their systems carefully before relying on automated decisions.

Moreover, identifying bias helps build trust among users and stakeholders. Transparent evaluation helps ensure that AI systems treat individuals fairly regardless of background.

Although bias can be difficult to detect, organizations can implement structured methods to analyze algorithm performance.

In this guide, you will learn how AI bias detection works, why it matters, and which strategies help teams identify and reduce unfair outcomes in AI systems.

Understanding Bias in Artificial Intelligence

Bias occurs when algorithms produce systematically unfair results for certain groups of people. In many cases, this problem arises from the data used to train machine learning models.

Historical datasets often reflect past inequalities. Consequently, algorithms may replicate those patterns when making predictions.

Through effective AI bias detection, organizations can identify these patterns before they cause harm.

Bias may appear in several forms. For example, a hiring algorithm may favor candidates from specific schools or backgrounds. Similarly, financial risk models may treat demographic groups differently.

These outcomes may not be intentional. Nevertheless, they still require correction.

Additionally, bias can emerge during model design or feature selection. Engineers must therefore analyze each stage of the machine learning lifecycle.

Understanding how bias develops allows organizations to build fairer and more responsible AI systems.

Why Bias Detection Matters in AI Systems

Artificial intelligence now influences decisions that affect people’s lives. Therefore, fairness and accountability have become essential priorities.

Organizations that invest in AI bias detection protect both their users and their reputations.

First, bias detection improves fairness. Identifying unfair patterns allows teams to correct models that systematically disadvantage particular groups.

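Second, it supports regulatory compliance. A growing number of laws require organizations to assess certain automated decision systems for discrimination. For example, New York City's Local Law 144 requires bias audits of automated employment decision tools.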

Third, fairness increases public trust. Transparent systems encourage users to adopt AI technologies confidently.

Finally, addressing bias can improve model performance. A model trained on more representative data often makes more reliable predictions for groups that were previously underrepresented.

Because of these benefits, bias detection should become a standard practice in AI development.

Common Sources of Bias in Machine Learning

Before teams can address bias, they must understand where it originates. Several factors commonly contribute to unfair outcomes.

Training Data Imbalance

Machine learning models rely heavily on training data.

If certain groups appear more frequently in the dataset, the model may prioritize those patterns. As a result, predictions may favor one group over another.

Effective AI bias detection involves evaluating whether training datasets represent diverse populations accurately.

Balanced datasets help ensure that algorithms learn from a broad range of examples.
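As a rough illustration of this check, the Python sketch below compares each group's share of a training set with its share of a reference population. The group labels, the reference shares, and the `tolerance` of 0.5 (flag a group that appears at less than half its expected rate) are hypothetical choices for the example, not a standard threshold.

```python
from collections import Counter


def group_shares(groups):
    """Return each group's share of the dataset as a fraction of all rows."""
    counts = Counter(groups)
    total = sum(counts.values())
    return {group: n / total for group, n in counts.items()}


def underrepresented(groups, reference, tolerance=0.5):
    """Flag groups whose share of the dataset falls below `tolerance` times
    their share of a reference population (e.g. census figures)."""
    shares = group_shares(groups)
    return sorted(
        group for group, ref_share in reference.items()
        if shares.get(group, 0.0) < tolerance * ref_share
    )


# Toy data: one group label per training row.
training_groups = ["A"] * 9 + ["B"] * 1
population = {"A": 0.6, "B": 0.4}  # hypothetical reference shares
print(group_shares(training_groups))                  # {'A': 0.9, 'B': 0.1}
print(underrepresented(training_groups, population))  # ['B']
```

In practice the reference shares would come from whatever population the model is meant to serve, and the same comparison can be repeated for combinations of attributes, such as age band and gender together.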

Feature Selection Issues

Features represent the variables used to train machine learning models.

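Sometimes features indirectly encode sensitive attributes such as gender, ethnicity, or socioeconomic status. For example, zip code can act as a proxy for ethnicity or income.

Even when the sensitive attributes themselves are removed from the dataset, these correlated proxy features may still reintroduce bias.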

Therefore, engineers must carefully evaluate which features influence model predictions.
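One simple way to look for proxies is to measure how strongly each candidate feature correlates with the sensitive attribute, even though that attribute is not used for training. The sketch below assumes a 0/1-encoded sensitive attribute and numeric features; the feature names, toy values, and the 0.5 threshold are hypothetical.

```python
import math


def pearson(xs, ys):
    """Pearson correlation between two equal-length numeric sequences."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sx = math.sqrt(sum((x - mx) ** 2 for x in xs))
    sy = math.sqrt(sum((y - my) ** 2 for y in ys))
    return cov / (sx * sy) if sx and sy else 0.0  # 0.0 if a series is constant


def flag_proxies(features, sensitive, threshold=0.5):
    """Return names of features whose absolute correlation with the
    0/1-encoded sensitive attribute exceeds `threshold`."""
    return sorted(
        name for name, values in features.items()
        if abs(pearson(values, sensitive)) > threshold
    )


sensitive = [1, 1, 1, 0, 0, 0]  # sensitive attribute, held out of training
features = {
    "zip_code_income": [20, 22, 25, 60, 65, 70],  # tracks the attribute closely
    "years_experience": [3, 7, 5, 4, 6, 5],       # unrelated to the attribute
}
print(flag_proxies(features, sensitive))  # ['zip_code_income']
```

Pearson correlation only captures linear relationships between a single feature and the attribute. A stronger check is to train a small classifier to predict the sensitive attribute from all features together; if it performs well above chance, the features jointly encode that attribute.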

Algorithm Design Choices

Different machine learning algorithms behave differently when processing data.

Some algorithms may amplify small biases within datasets.

Consequently, teams must analyze algorithm performance carefully during model evaluation.

Through structured AI bias detection, engineers can compare models and identify fairness concerns.

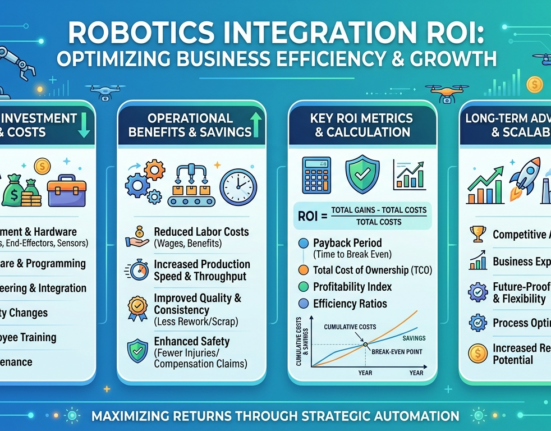

Human Decision Bias

Bias can also originate from human choices during model development.

For example, engineers may unintentionally prioritize certain performance metrics over fairness considerations.

Therefore, organizations should include diverse perspectives during AI development processes.

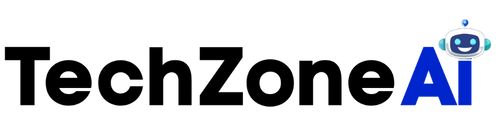

Methods for Detecting Bias in AI Systems

Organizations use several techniques to identify bias in machine learning models.

These methods form the foundation of effective AI bias detection strategies.

Statistical Fairness Metrics

Fairness metrics measure how algorithms treat different groups.

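For instance, statistical parity (also called demographic parity) evaluates whether the model makes positive predictions at the same rate for each group.

Another metric, equal opportunity, asks whether individuals who truly belong to the positive class, such as qualified applicants, are correctly identified at the same rate in every group. In other words, it compares true positive rates across groups.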

These metrics help engineers quantify fairness in algorithm decisions.
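Both metrics reduce to comparing simple rates between groups. The dependency-free Python sketch below computes each one as a difference between two groups, so 0 means parity. The toy labels and predictions are invented for illustration, and some libraries define these quantities as ratios or over more than two groups instead.

```python
def rate(values):
    """Mean of a list of 0/1 values (0.0 for an empty list)."""
    return sum(values) / len(values) if values else 0.0


def statistical_parity_difference(y_pred, groups, a, b):
    """Selection-rate gap: P(pred = 1 | group a) - P(pred = 1 | group b)."""
    def selection_rate(g):
        return rate([p for p, grp in zip(y_pred, groups) if grp == g])
    return selection_rate(a) - selection_rate(b)


def equal_opportunity_difference(y_true, y_pred, groups, a, b):
    """True-positive-rate gap: P(pred = 1 | y = 1, group a) minus the same
    quantity for group b, computed only over truly positive examples."""
    def true_positive_rate(g):
        return rate([p for t, p, grp in zip(y_true, y_pred, groups)
                     if grp == g and t == 1])
    return true_positive_rate(a) - true_positive_rate(b)


groups = ["A", "A", "A", "A", "B", "B", "B", "B"]
y_true = [1, 1, 0, 0, 1, 1, 0, 0]
y_pred = [1, 1, 1, 0, 1, 0, 0, 0]
print(statistical_parity_difference(y_pred, groups, "A", "B"))         # 0.5
print(equal_opportunity_difference(y_true, y_pred, groups, "A", "B"))  # 0.5
```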

Model Performance Comparisons

Comparing model performance across demographic groups can reveal hidden bias.

For example, engineers may analyze error rates for different populations.

If error rates differ significantly, the model may require adjustment.
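A per-group breakdown of error types often reveals more than overall accuracy. The sketch below uses a hypothetical `error_rates_by_group` helper and invented toy data to compute the false positive rate, false negative rate, and overall error for each group.

```python
from collections import defaultdict


def error_rates_by_group(y_true, y_pred, groups):
    """Return {group: {"fpr": ..., "fnr": ..., "error": ...}} for 0/1 labels."""
    counts = defaultdict(lambda: {"tp": 0, "fp": 0, "tn": 0, "fn": 0})
    for t, p, g in zip(y_true, y_pred, groups):
        # "t"/"f" = prediction correct or not; "p"/"n" = predicted 1 or 0.
        key = ("t" if t == p else "f") + ("p" if p == 1 else "n")
        counts[g][key] += 1
    result = {}
    for g, c in counts.items():
        negatives = c["fp"] + c["tn"]  # truly negative examples
        positives = c["tp"] + c["fn"]  # truly positive examples
        result[g] = {
            "fpr": c["fp"] / negatives if negatives else 0.0,
            "fnr": c["fn"] / positives if positives else 0.0,
            "error": (c["fp"] + c["fn"]) / (negatives + positives),
        }
    return result


groups = ["A", "A", "A", "A", "B", "B", "B", "B"]
y_true = [1, 1, 0, 0, 1, 1, 0, 0]
y_pred = [1, 1, 1, 0, 1, 0, 0, 0]
for group, rates in error_rates_by_group(y_true, y_pred, groups).items():
    print(group, rates)
# A {'fpr': 0.5, 'fnr': 0.0, 'error': 0.25}
# B {'fpr': 0.0, 'fnr': 0.5, 'error': 0.25}
```

In this toy example both groups have the same overall error rate of 0.25, yet group A receives all of the false positives and group B all of the false negatives. Comparing only aggregate error would miss that difference.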

Repeating these comparisons at each model release keeps bias detection going throughout the AI lifecycle.

Data Auditing

Data auditing examines datasets before model training begins.

Teams analyze data distributions, labeling practices, and potential imbalances.

By addressing issues early, organizations reduce the chance that bias enters machine learning systems.

Data auditing also improves overall dataset quality.
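Parts of such an audit can be automated with a few per-group summaries. The sketch below assumes each record is a Python dict with a group field and a 0/1 label field (the field names and toy records are hypothetical) and reports, per group, the number of rows, the positive-label rate, and the share of rows with a missing value.

```python
def audit(rows, group_key, label_key):
    """Per group: row count, positive-label rate, and the fraction of rows
    with at least one missing (None) field."""
    summary = {}
    for row in rows:
        stats = summary.setdefault(row[group_key],
                                   {"n": 0, "positive": 0, "missing": 0})
        stats["n"] += 1
        stats["positive"] += int(row[label_key] == 1)
        stats["missing"] += int(any(v is None for v in row.values()))
    return {
        group: {
            "n": s["n"],
            "positive_rate": s["positive"] / s["n"],
            "missing_rate": s["missing"] / s["n"],
        }
        for group, s in summary.items()
    }


records = [
    {"group": "A", "income": 50, "label": 1},
    {"group": "A", "income": 40, "label": 1},
    {"group": "B", "income": None, "label": 0},
    {"group": "B", "income": 45, "label": 1},
]
for group, stats in audit(records, "group", "label").items():
    print(group, stats)
# A {'n': 2, 'positive_rate': 1.0, 'missing_rate': 0.0}
# B {'n': 2, 'positive_rate': 0.5, 'missing_rate': 0.5}
```

Large differences in positive-label rates or missing-value rates between groups do not prove bias on their own, but they point to places where labeling practices or data collection deserve a closer look.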

Algorithm Audits and Testing

Independent audits provide additional oversight for AI systems.

External reviewers examine model behavior and test outcomes across multiple scenarios.

This process helps organizations verify fairness and transparency.

Auditing has become a key component of modern AI bias detection practices.
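One scenario-based test an auditor can run is a counterfactual check: change only the sensitive attribute of each input and see whether the prediction changes. The sketch below assumes the model is any Python callable that takes a dict and returns a label; `biased_model`, its thresholds, and the toy records are hypothetical and deliberately biased so the test has something to find.

```python
def counterfactual_flip_rate(model, rows, attr, swap):
    """Fraction of rows whose prediction changes when only the sensitive
    attribute `attr` is replaced according to the `swap` mapping."""
    changed = 0
    for row in rows:
        flipped = {**row, attr: swap[row[attr]]}  # copy with attribute swapped
        changed += int(model(row) != model(flipped))
    return changed / len(rows)


def biased_model(row):
    """Hypothetical model that (wrongly) uses the attribute directly."""
    threshold = 50 if row["gender"] == "F" else 40
    return int(row["score"] >= threshold)


rows = [
    {"gender": "F", "score": 45},
    {"gender": "M", "score": 45},
    {"gender": "F", "score": 70},
    {"gender": "M", "score": 30},
]
print(counterfactual_flip_rate(biased_model, rows, "gender",
                               {"F": "M", "M": "F"}))  # 0.5
```

A flip rate of 0 does not rule out bias, because a model can still discriminate through proxy features that this test leaves unchanged. It works best as one test among several.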

Tools Supporting Bias Detection in AI

Several tools help organizations evaluate fairness in machine learning systems.

These platforms simplify AI bias detection processes for engineering teams.

Fairness Evaluation Libraries

Open-source libraries, such as Fairlearn and AI Fairness 360 (AIF360), provide frameworks for analyzing algorithm fairness.

These tools measure fairness metrics, compare group outcomes, and identify performance gaps.

Engineers can integrate these libraries directly into machine learning workflows.

As a result, fairness evaluation becomes part of the model development process.
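Fairness libraries typically report per-group metrics that a team must then act on. The plain-Python sketch below shows one hypothetical way to wire such a result into a workflow: a check that raises an error, and so fails a training or CI step, when group selection rates differ by more than `max_gap`. The function names and the 0.1 default are illustrative assumptions, not any library's API.

```python
class FairnessCheckError(Exception):
    """Raised when a model fails the fairness gate."""


def selection_rates(y_pred, groups):
    """Fraction of positive (1) predictions for each group."""
    totals, positives = {}, {}
    for pred, group in zip(y_pred, groups):
        totals[group] = totals.get(group, 0) + 1
        positives[group] = positives.get(group, 0) + pred
    return {group: positives[group] / totals[group] for group in totals}


def fairness_gate(y_pred, groups, max_gap=0.1):
    """Return the selection-rate gap, or raise if it exceeds max_gap so
    that the surrounding pipeline step fails."""
    rates = selection_rates(y_pred, groups)
    gap = max(rates.values()) - min(rates.values())
    if gap > max_gap:
        raise FairnessCheckError(
            f"selection-rate gap {gap:.2f} exceeds limit {max_gap}")
    return gap


# Example: balanced predictions pass the gate.
print(fairness_gate([1, 0, 1, 0], ["A", "A", "B", "B"]))  # 0.0
```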

Data Visualization Platforms

Visualization tools help teams understand dataset patterns.

Charts and graphs reveal imbalances across demographic categories.

Visual analysis often makes bias easier to identify during early development stages.

These tools support continuous AI bias detection during dataset preparation.

Model Monitoring Systems

AI systems often evolve after deployment.

Therefore, organizations must monitor models continuously.

Monitoring platforms track prediction outcomes and detect changes in behavior.

When bias appears over time, teams can respond quickly.
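A monitoring job can recompute a fairness metric over a sliding window of recent predictions. The sketch below is a hypothetical `SelectionRateMonitor` class that keeps the last `window` predictions per group and reports the selection-rate gap when it exceeds `max_gap`. The window size, threshold, and minimum sample count are placeholder values that a real deployment would need to tune.

```python
from collections import deque


class SelectionRateMonitor:
    """Keep the most recent `window` predictions per group and report the
    selection-rate gap between groups when it exceeds `max_gap`."""

    def __init__(self, window=100, max_gap=0.1, min_samples=20):
        self.window = window
        self.max_gap = max_gap
        self.min_samples = min_samples
        self.history = {}  # group -> deque of recent 0/1 predictions

    def record(self, group, prediction):
        buf = self.history.setdefault(group, deque(maxlen=self.window))
        buf.append(prediction)  # oldest prediction drops out when full

    def check(self):
        """Return the current gap if it exceeds max_gap, otherwise None."""
        rates = [sum(buf) / len(buf) for buf in self.history.values()
                 if len(buf) >= self.min_samples]
        if len(rates) < 2:
            return None  # not enough data to compare groups
        gap = max(rates) - min(rates)
        return gap if gap > self.max_gap else None


monitor = SelectionRateMonitor(window=10, max_gap=0.2, min_samples=5)
for _ in range(10):
    monitor.record("A", 1)
    monitor.record("B", 0)
print(monitor.check())  # 1.0
```

The `min_samples` guard avoids alerting on groups with only a handful of recent predictions, where rates are noisy.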

Automated Risk Assessment Tools

Some platforms automatically evaluate AI systems for ethical risks.

These tools analyze datasets, model outputs, and performance metrics.

Automated assessments support proactive AI bias detection and governance.

Strategies to Reduce Bias in AI Models

Detecting bias is only the first step. Organizations must also implement strategies to address fairness issues.

Several approaches help reduce bias while maintaining model performance.

Improve Dataset Diversity

Expanding dataset diversity often reduces algorithm bias.

Including data from multiple demographic groups improves representation.

Consequently, models learn patterns that reflect broader populations.
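Collecting new, more representative data is the preferred fix. When that is not immediately possible, a common stopgap is random oversampling, which duplicates existing rows from smaller groups until group sizes match. The sketch below illustrates that swapped-in technique; it assumes dict records with a group field, and the field name and toy data are hypothetical.

```python
import random
from collections import Counter


def oversample_groups(rows, group_key, seed=0):
    """Randomly duplicate rows from smaller groups until every group has as
    many rows as the largest group."""
    rng = random.Random(seed)  # fixed seed for reproducibility
    by_group = {}
    for row in rows:
        by_group.setdefault(row[group_key], []).append(row)
    target = max(len(members) for members in by_group.values())
    balanced = []
    for members in by_group.values():
        extra = [rng.choice(members) for _ in range(target - len(members))]
        balanced.extend(members + extra)
    return balanced


rows = [{"group": "A", "x": i} for i in range(4)] + [{"group": "B", "x": 9}]
balanced = oversample_groups(rows, "group")
print(Counter(row["group"] for row in balanced))  # Counter({'A': 4, 'B': 4})
```

Oversampling adds no new information, since it only repeats existing rows, and it can encourage the model to overfit to those duplicates. It complements better data collection rather than replacing it.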

Adjust Training Techniques

Machine learning training techniques can reduce unfair outcomes.

For example, reweighting methods assign larger weights to training examples from underrepresented groups, or from underrepresented combinations of group and outcome, so that those examples have more influence on what the model learns.
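One well-known scheme, the reweighing method of Kamiran and Calders, gives each example the weight `P(group) * P(label) / P(group, label)`, so that group membership and label look statistically independent in the weighted data. The sketch below computes these weights in plain Python from toy groups and labels invented for illustration.

```python
from collections import Counter


def reweighing_weights(groups, labels):
    """Per-example weights P(group) * P(label) / P(group, label), estimated
    from frequencies in the training data."""
    n = len(labels)
    group_counts = Counter(groups)
    label_counts = Counter(labels)
    joint_counts = Counter(zip(groups, labels))
    return [
        (group_counts[g] / n) * (label_counts[y] / n) / (joint_counts[(g, y)] / n)
        for g, y in zip(groups, labels)
    ]


groups = ["A", "A", "A", "B", "B", "B"]
labels = [1, 1, 0, 1, 0, 0]
weights = reweighing_weights(groups, labels)
print([round(w, 2) for w in weights])  # [0.75, 0.75, 1.5, 1.5, 0.75, 0.75]
```

After weighting, both toy groups have the same weighted positive rate (0.5). The weights can then be passed to any training routine that supports per-example weights; for example, many scikit-learn estimators accept a `sample_weight` argument in `fit`.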

Use Fairness Constraints

Some algorithms allow developers to include fairness constraints during training.

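These constraints require the model to keep a chosen fairness measure, such as the gap in selection rates between groups, within a specified bound while it optimizes for accuracy.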

Although trade-offs may occur, balanced models often produce better long-term outcomes.
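A closely related way to express this trade-off is to add a fairness penalty to the training loss instead of a hard bound. The objective below is a sketch of that idea for a binary classifier, not the only formulation: f_θ(x) is the model's predicted probability, ℓ is a loss such as cross-entropy, A is the sensitive attribute with groups a and b, and λ ≥ 0 is a weight chosen by the team.

```latex
\min_{\theta}\;
\frac{1}{n}\sum_{i=1}^{n} \ell\big(f_\theta(x_i),\, y_i\big)
\;+\;
\lambda \left|\, \mathbb{E}\big[f_\theta(X) \mid A = a\big]
- \mathbb{E}\big[f_\theta(X) \mid A = b\big] \,\right|
```

In practice each expectation is estimated by the average predicted score of that group in the training set. Setting λ = 0 recovers ordinary training, while larger values push the two groups' average scores closer together at some cost in accuracy. Methods that enforce a hard constraint rather than a penalty also exist, such as the reductions approach implemented in Fairlearn.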

Implement Human Oversight

Human review remains essential for responsible AI systems.

Experts can analyze algorithm decisions and identify potential bias.

Combining human judgment with automated AI bias detection creates stronger governance frameworks.
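To make human review cover every group, reviewers can draw a stratified sample of decisions rather than a uniform one, which would mostly contain majority-group cases. The sketch below takes up to `per_group` decisions from each group; the record format, field names, and sample size are hypothetical.

```python
import random


def audit_sample(decisions, group_key, per_group=2, seed=0):
    """Draw up to `per_group` decisions from each group for human review,
    so that small groups are represented in the review queue."""
    rng = random.Random(seed)  # fixed seed keeps the sample reproducible
    by_group = {}
    for decision in decisions:
        by_group.setdefault(decision[group_key], []).append(decision)
    sample = []
    for members in by_group.values():
        sample.extend(rng.sample(members, min(per_group, len(members))))
    return sample


decisions = [{"id": i, "group": "A", "approved": i % 2} for i in range(10)]
decisions.append({"id": 10, "group": "B", "approved": 0})
for decision in audit_sample(decisions, "group"):
    print(decision)
```

A uniform sample of three decisions from this data would contain a group B case less than a third of the time; the stratified sample always includes it.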

Future Trends in Bias Detection Technology

Artificial intelligence continues to evolve rapidly. Consequently, new methods for AI bias detection are emerging.

One important trend involves explainable AI.

These systems provide transparency into how algorithms make decisions. As a result, engineers can identify biased reasoning patterns more easily.

Another development includes regulatory frameworks for responsible AI.

Governments increasingly require organizations to audit and monitor automated decision systems.

Additionally, automated fairness monitoring tools are becoming more sophisticated.

These systems analyze model performance continuously and alert teams to fairness risks.

Collaborative governance models also play an increasing role.

Organizations combine expertise from data scientists, ethicists, and policymakers to oversee AI development.

These multidisciplinary approaches strengthen fairness and accountability.

Conclusion

Artificial intelligence offers enormous benefits for organizations and society. However, ensuring fairness remains essential for responsible innovation.

By implementing AI bias detection, organizations can identify and correct unfair algorithm behavior both before and after deployment.

Structured evaluation methods, fairness metrics, and monitoring tools help engineers analyze machine learning systems carefully.

Moreover, improving dataset diversity and incorporating human oversight further strengthens ethical AI practices.

Organizations that prioritize fairness not only protect users but also build trust in artificial intelligence technologies.

As AI continues to expand across industries, effective bias detection will remain a cornerstone of responsible machine learning development.

FAQ

1. What causes unfair outcomes in machine learning models?

Unfair outcomes often result from imbalanced training data, biased features, or design choices during model development.

2. How can organizations evaluate fairness in AI systems?

Teams often use statistical fairness metrics, dataset analysis, and performance comparisons across demographic groups.

3. Are there tools that help evaluate algorithm fairness?

Yes. Many open-source frameworks and AI governance platforms help developers analyze datasets and model predictions for fairness concerns.

4. Can bias appear after AI systems are deployed?

Yes. Changes in real-world data can introduce new biases. Continuous monitoring helps detect these issues over time.

5. Why is fairness important in automated decision systems?

Fairness helps ensure that algorithms treat individuals equitably and helps prevent discrimination in important decisions such as hiring, finance, or healthcare.