Artificial intelligence increasingly shapes decisions in business, healthcare, finance, and public services. Because algorithms influence critical outcomes, organizations must ensure their systems operate responsibly. Therefore, AI algorithm transparency has become one of the most important principles in modern artificial intelligence development.

Transparent algorithms allow developers, regulators, and users to understand how automated systems make decisions. Without this clarity, AI systems may produce results that are difficult to explain or verify.

Furthermore, transparency helps organizations identify potential risks before they affect users. When engineers understand how models operate, they can detect errors, improve performance, and reduce unintended consequences.

In addition, open and understandable systems build trust among customers and stakeholders.

As artificial intelligence continues to expand across industries, AI algorithm transparency will remain a central requirement for ethical and reliable technology.

Understanding Transparency in Artificial Intelligence

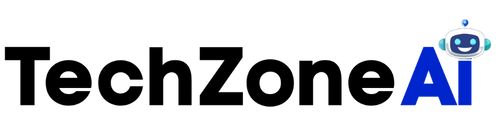

Transparency in AI refers to the ability to understand how algorithms process data and produce results. Instead of functioning as mysterious black boxes, transparent systems provide insight into their internal logic.

Through effective AI algorithm transparency, organizations can trace how inputs lead to specific outputs.
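To make the idea of tracing inputs to outputs concrete, here is a minimal sketch for the simplest case: a linear scoring model, where each feature's contribution is its weight times its value. The feature names, weights, and bias are hypothetical values chosen for illustration. More complex models need dedicated explanation methods rather than this direct decomposition.

```python
# Hypothetical linear scoring model. The feature names, weights, and bias are
# illustrative assumptions, not values from any real system.
WEIGHTS = {"income": 0.4, "debt_ratio": -1.2, "years_employed": 0.15}
BIAS = -0.5


def explain_linear_score(features: dict) -> tuple:
    """Return (score, contributions), where each contribution is weight * value.

    For a linear model the score is exactly BIAS plus the sum of the
    contributions, so every output can be traced back to its inputs.
    """
    contributions = {name: w * features[name] for name, w in WEIGHTS.items()}
    score = BIAS + sum(contributions.values())
    return score, contributions


applicant = {"income": 3.0, "debt_ratio": 0.5, "years_employed": 4.0}
score, parts = explain_linear_score(applicant)
print(f"score = {score:.2f}")
# List features from largest to smallest absolute effect on the score.
for name, value in sorted(parts.items(), key=lambda kv: abs(kv[1]), reverse=True):
    print(f"  {name:>15}: {value:+.2f}")
```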

This visibility allows developers to evaluate whether models behave fairly and consistently. Moreover, transparency improves communication between technical teams and non-technical stakeholders.

For example, healthcare professionals using diagnostic AI tools must understand how models reach medical conclusions. Similarly, financial institutions must explain automated lending decisions.

Consequently, transparency helps keep AI systems accountable to the people who use them.

Additionally, open algorithmic processes support better collaboration across teams. Engineers, analysts, and decision-makers can work together to refine models and improve outcomes.

Because modern AI systems often involve complex data pipelines, maintaining transparency throughout development is essential.

Why Transparency Builds Trust in AI Systems

Trust plays a crucial role in the adoption of artificial intelligence technologies. When users understand how AI works, they feel more confident relying on automated decisions.

Therefore, many organizations prioritize AI algorithm transparency to strengthen user confidence.

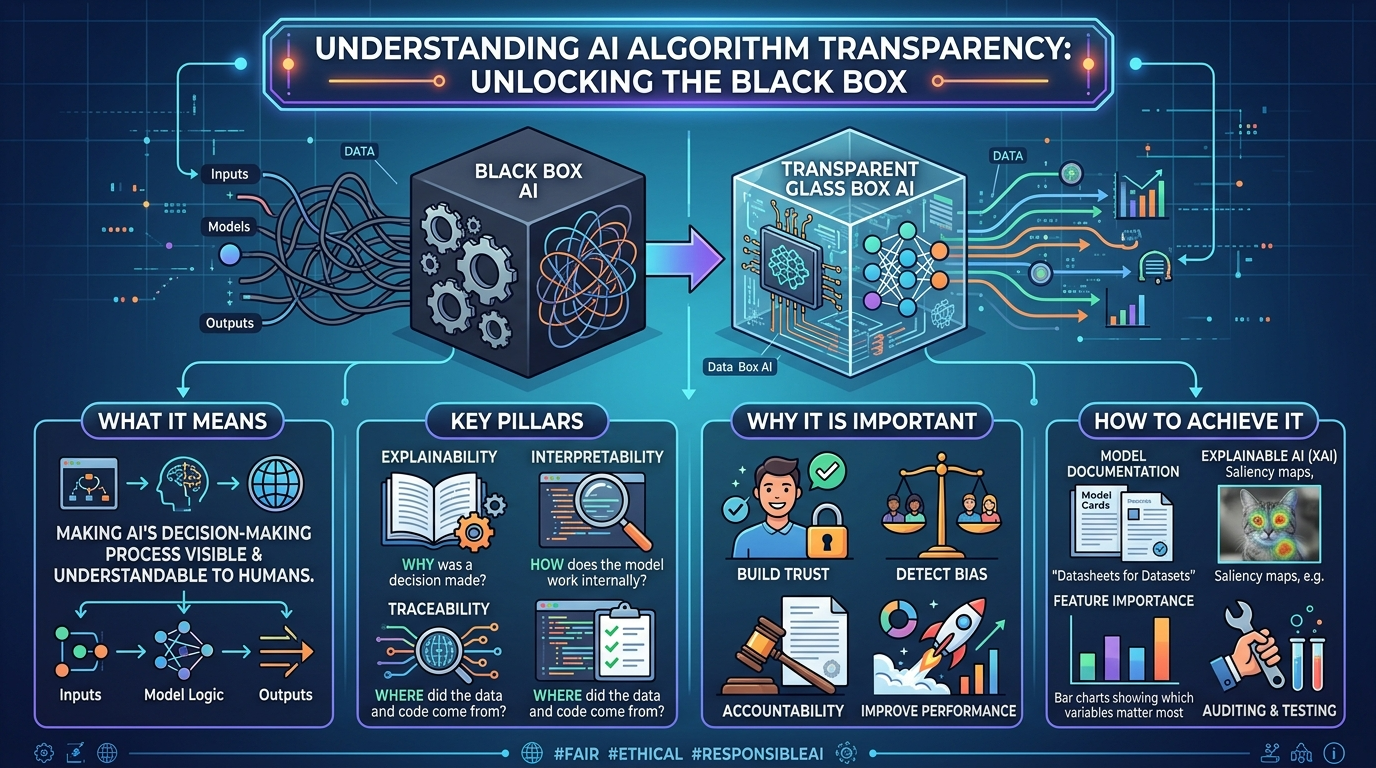

First, transparency enables accountability. If a system produces unexpected results, engineers can investigate the underlying processes.

Second, openness encourages responsible development practices. Developers must document model design, training data sources, and evaluation methods.

Third, transparency supports regulatory compliance. Many governments require organizations to explain automated decisions that affect individuals.

Finally, clear explanations help users understand how AI systems impact them.

As a result, transparent AI systems foster stronger relationships between technology providers and the public.

Key Challenges to Achieving Transparent AI

Although transparency offers many benefits, implementing it can be difficult. Modern machine learning models often involve complex architectures and large datasets.

These complexities create challenges for achieving effective AI algorithm transparency.

Complex Machine Learning Models

Deep learning systems can contain millions or even billions of parameters. Because of this complexity, interpreting model behavior can be challenging.

Engineers may struggle to explain why certain predictions occur.

Nevertheless, interpretability tools, such as feature importance analysis, can help analyze model outputs and identify decision patterns.

Limited Documentation

Some AI projects lack sufficient documentation during development.

Without clear records of training data sources or model changes, explaining system behavior becomes difficult.

Organizations must maintain thorough documentation to support AI algorithm transparency.
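One lightweight way to keep clear records of training data and model changes is to store a cryptographic fingerprint of the exact training set alongside each model version. The sketch below uses Python's standard `hashlib` and `json` modules and assumes records are JSON-serializable dictionaries; the `changelog_entry` helper and its field names are hypothetical.

```python
import hashlib
import json
from datetime import date


def dataset_fingerprint(rows: list) -> str:
    """SHA-256 digest of the rows, each serialized as key-sorted JSON.

    Sorting keys makes the digest independent of dictionary key order, but
    row order still matters: reordering rows changes the fingerprint.
    """
    digest = hashlib.sha256()
    for row in rows:
        digest.update(json.dumps(row, sort_keys=True).encode("utf-8"))
        digest.update(b"\n")  # newline between rows, as in a JSON Lines file
    return digest.hexdigest()


def changelog_entry(model_version: str, rows: list, note: str) -> dict:
    """Hypothetical change-log record linking a model version to its data."""
    return {
        "model_version": model_version,
        "data_sha256": dataset_fingerprint(rows),
        "date": date.today().isoformat(),
        "note": note,
    }


training_rows = [{"income": 3.0, "approved": True}, {"income": 1.5, "approved": False}]
print(changelog_entry("1.1.0", training_rows, "Retrained on refreshed data."))
```

Storing such entries in version control gives reviewers a verifiable link between each deployed model and the data it was trained on.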

Proprietary Algorithms

Many companies treat AI systems as proprietary technology.

While protecting intellectual property is important, excessive secrecy can limit transparency.

Balancing business interests with responsible AI practices remains an ongoing challenge.

Data Privacy Constraints

In certain cases, organizations cannot reveal specific data used to train models due to privacy regulations.

Therefore, developers must design transparency strategies that protect sensitive information while explaining algorithm behavior.
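A common way to describe model behavior without exposing individual records is to publish only aggregate statistics and to suppress any group too small to report safely. The sketch below applies that idea to approval rates. The minimum cell size of 10 is an assumed threshold, since real disclosure policies vary, and small-cell suppression on its own is not a complete privacy guarantee.

```python
from collections import Counter
from typing import Dict, List, Optional, Tuple

MIN_CELL_SIZE = 10  # assumed threshold; actual disclosure rules vary


def approval_rates(
    records: List[Tuple[str, bool]], min_cell: int = MIN_CELL_SIZE
) -> Dict[str, Optional[float]]:
    """Approval rate per group, or None when a group has fewer than min_cell records."""
    totals = Counter(group for group, _ in records)
    approvals = Counter(group for group, approved in records if approved)
    return {
        group: (approvals[group] / n if n >= min_cell else None)
        for group, n in totals.items()
    }


records = [("north", True)] * 8 + [("north", False)] * 2 + [("south", True)] * 3
print(approval_rates(records))  # {'north': 0.8, 'south': None}
```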

Methods for Improving AI Transparency

Organizations can implement several techniques to improve AI algorithm transparency within their systems.

These strategies help developers explain and evaluate machine learning models effectively.

Explainable AI Techniques

Explainable AI focuses on interpreting how algorithms produce predictions.

These methods analyze model behavior and highlight the factors influencing outcomes.

For example, feature importance analysis identifies which variables contribute most to predictions.

Such techniques help engineers understand model logic and support transparency goals.
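Feature importance can be estimated in several ways. The sketch below uses permutation importance, one model-agnostic option: shuffle one feature's values, re-score the model, and treat the average drop in accuracy as that feature's importance. It is written in plain Python for clarity and assumes a "model" is any function mapping a row to a predicted label. In practice, scikit-learn provides `sklearn.inspection.permutation_importance` for fitted estimators.

```python
import random


def accuracy(model, X, y):
    """Share of rows where the model's prediction matches the label."""
    return sum(model(row) == label for row, label in zip(X, y)) / len(y)


def permutation_importance(model, X, y, n_repeats=5, seed=0):
    """Mean drop in accuracy when each feature column is randomly shuffled."""
    rng = random.Random(seed)
    baseline = accuracy(model, X, y)
    importances = []
    for j in range(len(X[0])):
        drops = []
        for _ in range(n_repeats):
            column = [row[j] for row in X]
            rng.shuffle(column)
            # Copy each row, replacing only feature j with its shuffled value.
            X_perm = [list(row[:j]) + [value] + list(row[j + 1:]) for row, value in zip(X, column)]
            drops.append(baseline - accuracy(model, X_perm, y))
        importances.append(sum(drops) / n_repeats)
    return importances


# Toy model that only looks at feature 0, so feature 1 should score zero.
def model(row):
    return int(row[0] > 0.5)


X = [[i / 20, (i * 7 % 20) / 20] for i in range(20)]
y = [model(row) for row in X]
importances = permutation_importance(model, X, y)
print(importances)
```

Because the toy model ignores feature 1, shuffling it never changes a prediction, so its importance is exactly zero; shuffling feature 0 breaks the model's only signal and lowers accuracy.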

Model Documentation

Comprehensive documentation improves transparency across the AI lifecycle.

Developers should record training datasets, algorithm choices, evaluation metrics, and system limitations.

Clear documentation strengthens AI algorithm transparency by providing stakeholders with accessible information.

Additionally, documentation helps teams maintain consistent standards when updating models.
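The documentation items listed above can be captured in a structured record, in the spirit of a model card, so that gaps are easy to detect before release. The `ModelCard` class, its field names, and the example values below are a hypothetical sketch, not a standard schema.

```python
from dataclasses import dataclass, field, fields
from typing import Dict, List


@dataclass
class ModelCard:
    """Hypothetical record of training data, algorithm, metrics, and limitations."""

    model_name: str
    version: str
    training_data: str = ""  # source and collection period of training data
    algorithm: str = ""      # model family and key design choices
    evaluation_metrics: Dict[str, float] = field(default_factory=dict)
    limitations: List[str] = field(default_factory=list)

    def missing_fields(self) -> List[str]:
        """Names of fields that are still empty."""
        return [f.name for f in fields(self) if not getattr(self, f.name)]


card = ModelCard(
    model_name="credit-risk",
    version="1.2.0",
    training_data="loan_applications_v3 (hypothetical dataset name)",
    algorithm="Gradient-boosted decision trees",
)
print(card.missing_fields())  # ['evaluation_metrics', 'limitations']
```

A release checklist could then refuse to publish a model while `missing_fields()` returns anything.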

Algorithm Audits

Independent audits provide external evaluation of AI systems.

Auditors review datasets, model design, and prediction outcomes.

Through these reviews, organizations identify potential risks and verify fairness.

Regular auditing supports reliable AI algorithm transparency practices.
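As one example of the outcome review an auditor might perform, the sketch below compares error rates across groups and reports the largest gap. The group labels, data, and the 0.1 tolerance are illustrative assumptions; a real audit would examine several metrics, such as false positive and false negative rates, and would account for sample size.

```python
from collections import defaultdict

GAP_TOLERANCE = 0.1  # assumed tolerance for the largest acceptable gap


def error_rate_by_group(groups, y_true, y_pred):
    """Fraction of incorrect predictions within each group."""
    totals = defaultdict(int)
    errors = defaultdict(int)
    for group, actual, predicted in zip(groups, y_true, y_pred):
        totals[group] += 1
        errors[group] += int(actual != predicted)
    return {group: errors[group] / totals[group] for group in totals}


groups = ["a", "a", "a", "a", "b", "b", "b", "b"]
y_true = [1, 0, 1, 0, 1, 0, 1, 0]
y_pred = [1, 0, 1, 0, 0, 0, 1, 1]

rates = error_rate_by_group(groups, y_true, y_pred)
gap = max(rates.values()) - min(rates.values())
print(rates, f"gap={gap:.2f}", "FLAG" if gap > GAP_TOLERANCE else "ok")
```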

Visualization Tools

Data visualization tools help explain algorithm behavior through charts and graphs.

These visualizations reveal patterns in model predictions and highlight potential biases.

As a result, engineers can communicate complex AI processes more clearly to stakeholders.
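Charting libraries are the usual choice, but even a plain-text bar chart can make a model's behavior easier to discuss. This dependency-free sketch renders any mapping of labels to non-negative values, such as feature importance scores; the function name and formatting are illustrative choices.

```python
def text_bar_chart(values: dict, width: int = 40) -> str:
    """Render label -> non-negative value pairs as a sorted plain-text bar chart."""
    top = max(values.values())
    label_width = max(len(label) for label in values)
    lines = []
    for label, value in sorted(values.items(), key=lambda kv: kv[1], reverse=True):
        # Scale each bar relative to the largest value; avoid dividing by zero.
        bar = "#" * round(width * value / top) if top > 0 else ""
        lines.append(f"{label.ljust(label_width)} | {bar} {value:.2f}")
    return "\n".join(lines)


print(text_bar_chart({"income": 0.30, "debt_ratio": 0.60, "years_employed": 0.15}, width=20))
```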

Benefits of Transparent AI Systems

Organizations that prioritize AI algorithm transparency gain several advantages.

These benefits extend beyond ethical considerations and contribute to stronger business performance.

Improved Model Reliability

Transparent models are easier to test and refine.

Engineers can identify weaknesses, correct errors, and optimize performance.

Consequently, transparent AI systems tend to become more reliable and accurate over time.

Stronger Regulatory Compliance

Governments increasingly regulate artificial intelligence technologies.

Transparency helps organizations demonstrate compliance with emerging AI governance frameworks.

Clear documentation and explainable systems simplify regulatory reporting requirements.

Better Risk Management

Transparent systems allow organizations to detect risks earlier.

For example, engineers can identify potential bias, data errors, or security vulnerabilities.

Proactive monitoring improves overall system stability.

Greater User Confidence

Users feel more comfortable adopting AI technologies when they understand how decisions are made.

By prioritizing AI algorithm transparency, organizations create technology that people trust.

This trust encourages broader adoption of artificial intelligence across industries.

Transparency in Different AI Applications

Artificial intelligence affects many industries. Consequently, AI algorithm transparency plays a crucial role in multiple sectors.

Healthcare

Medical AI systems assist doctors in diagnosing diseases and recommending treatments.

Because healthcare decisions directly affect patient safety, transparency is essential.

Clinicians must understand how algorithms analyze medical images or patient data.

Clear explanations support responsible medical decision-making.

Finance

Financial institutions use AI for credit scoring, fraud detection, and risk analysis.

Transparency makes it possible to check whether automated decisions treat customers fairly.

Furthermore, regulators often require financial organizations to explain algorithm-driven outcomes.
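For lending decisions, one common way to explain an outcome is to list the factors that pulled the applicant's score down the most, often called reason codes. The sketch below does this for a hypothetical linear model by comparing each feature with a reference applicant. The weights, reference values, and feature names are all assumptions for illustration, not a regulatory method.

```python
# Hypothetical linear credit model and reference applicant; all values are
# illustrative assumptions.
WEIGHTS = {"income": 0.4, "debt_ratio": -1.2, "late_payments": -0.8}
REFERENCE = {"income": 3.0, "debt_ratio": 0.3, "late_payments": 0.0}


def reason_codes(applicant: dict, top_n: int = 2) -> list:
    """Features that lowered the score most relative to the reference applicant."""
    contributions = {
        name: weight * (applicant[name] - REFERENCE[name])
        for name, weight in WEIGHTS.items()
    }
    # Keep only negative contributions and sort from most to least harmful.
    negatives = sorted((c, name) for name, c in contributions.items() if c < 0)
    return [name for _, name in negatives[:top_n]]


applicant = {"income": 2.0, "debt_ratio": 0.6, "late_payments": 2.0}
print(reason_codes(applicant))  # ['late_payments', 'income']
```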

Human Resources

Companies increasingly rely on AI for hiring and candidate screening.

Transparent hiring systems help organizations avoid discriminatory practices.

Employers can also explain recruitment decisions more effectively.
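One simple check for hiring tools is the four-fifths rule from US employment guidance: if a group's selection rate is less than 80% of the highest group's rate, that is generally treated as a sign of possible adverse impact worth investigating. The sketch below computes these ratios. The group names and counts are invented for illustration, and the rule is a screening heuristic, not a legal determination.

```python
FOUR_FIFTHS = 0.8


def adverse_impact_ratios(outcomes: dict) -> dict:
    """Map group -> (selected, applicants) to each group's rate / highest rate."""
    rates = {group: selected / applicants for group, (selected, applicants) in outcomes.items()}
    highest = max(rates.values())
    return {group: rate / highest for group, rate in rates.items()}


outcomes = {"group_a": (30, 100), "group_b": (18, 100)}  # hypothetical counts
ratios = adverse_impact_ratios(outcomes)
flagged = [group for group, ratio in ratios.items() if ratio < FOUR_FIFTHS]
print(ratios, flagged)
```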

Public Services

Governments use AI to allocate resources, manage infrastructure, and analyze public data.

Transparent systems support accountability when automated decisions affect citizens.

Therefore, transparency remains a cornerstone of responsible public sector AI.

Future Trends in Transparent AI Development

The field of artificial intelligence continues to evolve rapidly. As technologies advance, new methods will enhance AI algorithm transparency.

One important trend involves explainable deep learning models. Researchers are developing tools that reveal how neural networks process information.

Another development focuses on standardized AI governance frameworks.

International organizations and governments are establishing guidelines for responsible AI development.

Additionally, automated monitoring systems are becoming more sophisticated.

These platforms track model performance and detect anomalies in real time.
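Real-time monitoring systems often check whether the data a model sees in production has drifted away from the data it was trained on. The sketch below computes the population stability index (PSI), one widely used drift statistic: bin both samples, then sum (actual share minus expected share) times the log of their ratio. The cut points, the small floor used to avoid log(0), and the commonly cited 0.25 alert level are conventions assumed here, not fixed standards.

```python
import bisect
import math

PSI_ALERT = 0.25  # commonly cited rule of thumb for significant drift


def bin_proportions(values, cuts, eps=1e-6):
    """Share of values in each bin defined by sorted cut points (floored at eps)."""
    counts = [0] * (len(cuts) + 1)
    for v in values:
        counts[bisect.bisect_right(cuts, v)] += 1
    return [max(c / len(values), eps) for c in counts]


def population_stability_index(expected, actual, cuts):
    """PSI = sum over bins of (actual - expected) * ln(actual / expected)."""
    e = bin_proportions(expected, cuts)
    a = bin_proportions(actual, cuts)
    return sum((ai - ei) * math.log(ai / ei) for ai, ei in zip(a, e))


training = list(range(100))
production = [v + 50 for v in range(100)]  # simulated shift in the input
psi = population_stability_index(training, production, cuts=[25, 50, 75])
print(f"PSI = {psi:.2f}", "ALERT" if psi > PSI_ALERT else "stable")
```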

Open research initiatives also contribute to transparency improvements. Academic collaborations help develop new techniques for interpreting machine learning systems.

As these innovations progress, transparency is likely to become a standard expectation for AI systems worldwide.

Conclusion

Artificial intelligence offers powerful capabilities that transform industries and improve decision-making. However, responsible AI development requires transparency and accountability.

By prioritizing AI algorithm transparency, organizations can build systems that users understand and trust.

Transparent algorithms allow engineers to evaluate fairness, improve reliability, and detect potential risks. Additionally, open development practices support regulatory compliance and ethical governance.

Although achieving transparency may require additional effort, the benefits are significant.

Organizations that embrace transparent AI practices create technology that is not only powerful but also responsible and trustworthy.

As artificial intelligence continues to expand, transparency will remain essential for ensuring that automated systems serve society fairly and effectively.

FAQ

1. What does transparency mean in artificial intelligence systems?

Transparency refers to the ability to understand how AI models process data and generate predictions.

2. Why is explainability important for automated decision systems?

Explainability allows developers and users to understand how models reach conclusions, which helps build trust and accountability.

3. How can companies make AI systems easier to understand?

Organizations often use explainable AI tools, model documentation, and algorithm audits to clarify how systems operate.

4. Are transparent AI systems more reliable?

Generally, yes. Systems that provide clear insights into their behavior are easier to test, monitor, and improve, although transparency alone does not guarantee accuracy.

5. Do governments regulate automated decision technologies?

Many governments are developing regulations that require organizations to explain and monitor AI systems used in critical decisions.