Artificial intelligence is transforming how organizations make decisions. Businesses now rely on algorithms to analyze data, automate processes, and predict outcomes. However, as AI systems influence critical choices, companies must recognize the dangers involved. Understanding AI decision-making risks has become essential for organizations that want to deploy artificial intelligence responsibly.

AI-driven systems can deliver remarkable efficiency. Yet they can also introduce challenges such as bias, data inaccuracies, and lack of transparency. If organizations fail to address these issues, automated decisions may produce harmful consequences.

For this reason, businesses must develop strategies to evaluate and control AI decision-making risks.

Effective risk management allows companies to benefit from artificial intelligence while protecting customers, employees, and stakeholders.

Through proper governance, transparency, and testing, organizations can build trustworthy AI systems that support better decision-making outcomes.

Why AI Decision Systems Create New Risks

Artificial intelligence systems operate differently from traditional software. Instead of following rules that a programmer wrote explicitly, machine learning models learn patterns from large datasets.

This ability enables powerful automation. However, it also introduces complex AI decision-making risks, because the learned behavior is only as sound as the data behind it.

One common issue involves biased training data. If datasets reflect historical inequalities, AI systems may replicate those patterns.

For example, a hiring algorithm trained on past hiring decisions that favored certain groups may learn to rank similar candidates higher, even though no one intended that outcome.

Another risk involves model opacity. Many advanced machine learning models function as “black boxes.” Their decision processes may be difficult to interpret.

Consequently, organizations may struggle to explain why a particular decision was made.

Data quality also plays a critical role.

Incomplete or inaccurate datasets can lead to unreliable predictions.

Therefore, managing AI decision-making risks requires careful oversight of both data and algorithms.

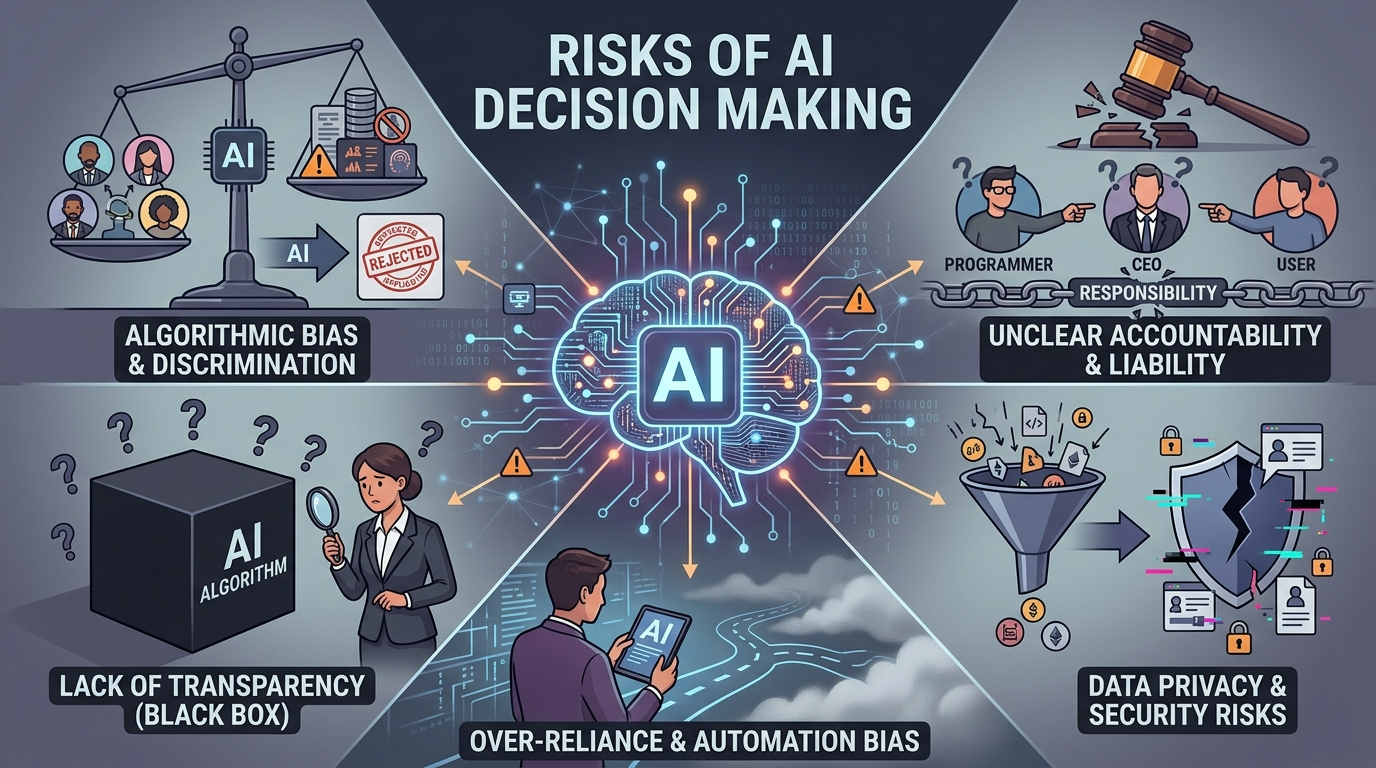

Common Types of AI Decision Risks

Organizations implementing artificial intelligence must recognize several key categories of AI decision-making risks.

Understanding these categories helps businesses design effective mitigation strategies.

Algorithmic Bias

Algorithmic bias occurs when AI systems produce unfair outcomes.

Biased datasets or flawed model training processes often cause these issues.

Organizations must regularly audit models to detect discriminatory patterns.

Reducing bias remains a major priority in addressing AI decision-making risks.
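One simple way to start such an audit is to compare how often each group receives a positive decision. The sketch below is illustrative only: the group labels, the sample data, and the function names are hypothetical, and the ratio it computes is just one of several fairness measures an organization might choose.

```python
from collections import defaultdict


def selection_rates(decisions):
    """Return the share of positive decisions for each group.

    `decisions` is an iterable of (group, approved) pairs, where
    `approved` is True for a positive outcome.
    """
    totals = defaultdict(int)
    positives = defaultdict(int)
    for group, approved in decisions:
        totals[group] += 1
        if approved:
            positives[group] += 1
    return {group: positives[group] / totals[group] for group in totals}


def disparate_impact_ratio(rates):
    """Ratio of the lowest to the highest selection rate (1.0 means equal)."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # no group received a positive decision, so rates are equal
    return min(rates.values()) / highest


# Hypothetical audit sample: 10 decisions for group A, 10 for group B.
sample = (
    [("A", True)] * 6 + [("A", False)] * 4
    + [("B", True)] * 3 + [("B", False)] * 7
)

rates = selection_rates(sample)
ratio = disparate_impact_ratio(rates)
print(rates, ratio)
```

A ratio well below 1.0 does not prove discrimination on its own, but it signals that the model deserves closer review.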

Lack of Transparency

Many AI systems rely on complex neural networks that provide limited interpretability.

When organizations cannot explain algorithmic decisions, trust decreases.

Transparent design improves accountability and supports ethical AI deployment.

Data Privacy Violations

AI systems often require large amounts of personal data.

Improper data handling may violate privacy regulations.

Organizations must maintain strong data governance practices to minimize AI decision-making risks related to privacy.

Automation Overreliance

Excessive reliance on automated decisions can create operational vulnerabilities.

Human oversight remains essential for evaluating complex situations.

Combining automation with expert judgment can catch errors that either would miss alone.

Strategies for Managing AI Decision Risks

Organizations must develop comprehensive frameworks to control AI decision-making risks effectively.

Successful risk management combines technical safeguards with organizational governance.

Establish Responsible AI Governance

Companies should implement governance policies that guide AI development and deployment.

These policies define ethical standards, accountability structures, and review procedures.

Strong governance helps organizations monitor AI decision-making risks across all AI initiatives.

Implement Regular Model Auditing

Regular audits allow organizations to identify potential errors or bias in AI systems.

Audits evaluate model performance, fairness, and reliability.

Continuous monitoring helps detect when an automated system's performance starts to degrade, so that teams can respond before errors accumulate.
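As a rough illustration of continuous monitoring, the sketch below tracks accuracy over the most recent predictions and flags the model for review when it falls below a threshold. The class name, window size, and threshold are assumptions chosen for the example, not recommended values.

```python
from collections import deque


class AccuracyMonitor:
    """Track accuracy over a rolling window of recent predictions."""

    def __init__(self, window=100, min_accuracy=0.9):
        # deque with maxlen drops the oldest outcome automatically
        self.outcomes = deque(maxlen=window)
        self.min_accuracy = min_accuracy

    def record(self, predicted, actual):
        """Store whether a prediction matched the observed outcome."""
        self.outcomes.append(predicted == actual)

    def accuracy(self):
        """Return rolling accuracy, or None if nothing has been recorded."""
        if not self.outcomes:
            return None
        return sum(self.outcomes) / len(self.outcomes)

    def needs_review(self):
        """True when rolling accuracy has dropped below the threshold."""
        current = self.accuracy()
        return current is not None and current < self.min_accuracy


# Hypothetical stream of (predicted, actual) pairs.
monitor = AccuracyMonitor(window=5, min_accuracy=0.8)
for predicted, actual in [(1, 1), (0, 0), (1, 1), (1, 0), (0, 1)]:
    monitor.record(predicted, actual)

if monitor.needs_review():
    print(f"Rolling accuracy {monitor.accuracy():.2f} is below threshold")
```

In practice the same pattern can track fairness or data-drift measures instead of accuracy; the point is that the check runs continuously rather than once a year.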

Improve Data Quality Management

Data quality directly affects AI outcomes.

Organizations must validate datasets before training machine learning models.

High-quality data reduces prediction errors and improves reliability.

This approach helps minimize AI decision-making risks caused by inaccurate data.
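A validation step before training can be as simple as checking for missing values and implausible ranges. The following is a minimal sketch; the field names, the allowed range, and the sample records are hypothetical.

```python
def validate_records(records, required_fields, ranges):
    """Return a list of (record_index, problem) tuples.

    `required_fields` lists fields that must be present and not None.
    `ranges` maps a field name to an inclusive (low, high) pair.
    """
    problems = []
    for index, record in enumerate(records):
        for field in required_fields:
            if record.get(field) is None:
                problems.append((index, f"missing {field}"))
        for field, (low, high) in ranges.items():
            value = record.get(field)
            if value is not None and not (low <= value <= high):
                problems.append((index, f"{field} out of range: {value}"))
    return problems


# Hypothetical training records with one missing and one implausible value.
records = [
    {"age": 34, "income": 52000},
    {"age": None, "income": 48000},
    {"age": 212, "income": 61000},
]

problems = validate_records(
    records,
    required_fields=["age", "income"],
    ranges={"age": (0, 120)},
)
for index, problem in problems:
    print(f"record {index}: {problem}")
```

Records that fail validation can then be corrected or excluded before they influence the model.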

Ensure Human Oversight

Human oversight remains essential in AI-driven decision processes.

Experts can evaluate automated outputs and intervene when necessary.

Human-in-the-loop systems improve accountability and decision accuracy.
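One common way to build a human-in-the-loop process is to let the model decide only when it is confident and send everything else to a reviewer. The sketch below assumes the model returns a confidence score between 0 and 1; the `Decision` type, the `route` function, and the 0.85 threshold are illustrative choices.

```python
from dataclasses import dataclass


@dataclass
class Decision:
    suggestion: str           # what the model proposed
    confidence: float         # model confidence, assumed to lie in [0, 1]
    needs_human_review: bool  # True when a person must confirm the outcome


def route(suggestion, confidence, threshold=0.85):
    """Accept confident predictions; flag the rest for human review."""
    return Decision(
        suggestion=suggestion,
        confidence=confidence,
        needs_human_review=confidence < threshold,
    )


# Hypothetical model outputs for two loan applications.
automatic = route("approve", 0.95)
escalated = route("reject", 0.60)

for decision in (automatic, escalated):
    handler = "human reviewer" if decision.needs_human_review else "model"
    print(f"{decision.suggestion} ({decision.confidence:.2f}) -> {handler}")
```

The threshold becomes a governance setting: lowering it automates more decisions, while raising it sends more cases to experts.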

The Role of Transparency in Risk Management

Transparency plays a central role in controlling AI decision-making risks.

Organizations must understand how AI models generate predictions.

Explainable AI techniques help developers interpret model behavior.

These tools provide insights into how algorithms process input data.
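Permutation importance is one widely used explainability technique: shuffle one input feature at a time and measure how much the model's accuracy drops. The sketch below implements the idea from scratch for illustration; the toy model and data are hypothetical stand-ins for a real trained model.

```python
import random


def accuracy(model, rows, labels):
    """Share of rows where the model's prediction matches the label."""
    correct = sum(1 for row, label in zip(rows, labels) if model(row) == label)
    return correct / len(rows)


def permutation_importance(model, rows, labels, n_features, seed=0):
    """Accuracy drop observed when each feature column is shuffled."""
    rng = random.Random(seed)  # fixed seed keeps the example repeatable
    baseline = accuracy(model, rows, labels)
    importances = []
    for j in range(n_features):
        column = [row[j] for row in rows]
        rng.shuffle(column)
        # Rebuild each row with only feature j replaced by a shuffled value.
        shuffled_rows = [
            row[:j] + [value] + row[j + 1:]
            for row, value in zip(rows, column)
        ]
        importances.append(baseline - accuracy(model, shuffled_rows, labels))
    return importances


def toy_model(row):
    """Hypothetical model that looks only at the first feature."""
    return row[0] > 50


rows = [[30, 1], [80, 0], [55, 1], [20, 0], [90, 1], [45, 0]]
labels = [toy_model(row) for row in rows]

importances = permutation_importance(toy_model, rows, labels, n_features=2)
print(importances)
```

Because the toy model ignores the second feature, shuffling that column cannot change its accuracy, so its importance is zero. A feature the model depends on will typically show a positive drop.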

Transparent systems allow companies to identify potential issues earlier.

Additionally, transparency improves regulatory compliance.

A growing number of jurisdictions require organizations to explain automated decisions that significantly affect individuals.

By prioritizing transparency, businesses strengthen trust and reduce operational uncertainty.

Consequently, transparency strategies form a critical part of modern AI governance.

Regulatory Considerations for AI Risk Management

Governments around the world increasingly focus on regulating artificial intelligence technologies.

These regulatory initiatives aim to reduce AI decision-making risks and protect public interests.

For example, the European Union’s AI Act categorizes systems by risk level, ranging from minimal risk to unacceptable risk.

High-risk applications must meet strict transparency and documentation requirements.

Similarly, other regulatory frameworks emphasize fairness, accountability, and privacy.

Organizations deploying AI systems must remain aware of evolving regulations.

Compliance strategies should include regular risk assessments and documentation practices.

By aligning with emerging standards, businesses can manage AI decision-making risks more effectively.

Regulatory compliance also strengthens consumer confidence in AI technologies.

Building an Ethical AI Culture

Technology alone cannot eliminate AI decision-making risks.

Organizations must also cultivate a culture that prioritizes responsible AI development.

Leadership teams play an important role in establishing ethical standards.

Executives should encourage transparency, accountability, and continuous learning.

Training programs help employees understand the ethical implications of artificial intelligence.

Developers, analysts, and managers should learn how their decisions influence algorithmic outcomes.

Cross-disciplinary collaboration also strengthens ethical oversight.

Legal experts, data scientists, and business leaders must work together to evaluate AI systems.

When organizations embed ethics into their operations, they create stronger safeguards against potential risks.

Future Trends in AI Risk Governance

As artificial intelligence evolves, approaches to managing AI decision-making risks will continue to advance.

New technologies and regulatory frameworks will shape future governance strategies.

One emerging trend involves explainable AI research.

Researchers are developing tools that make complex machine learning models easier to interpret.

Another trend includes automated monitoring systems.

These systems track model performance continuously and alert organizations when anomalies occur.

Additionally, global cooperation on AI standards is increasing.

International organizations are working toward shared guidelines for responsible AI development.

These initiatives aim to harmonize approaches to managing AI decision-making risks across different regions.

Organizations that stay informed about these trends will remain better prepared for future regulatory and technological changes.

Conclusion

Artificial intelligence offers powerful opportunities for improving efficiency and decision-making across industries. However, automated systems also introduce new operational and ethical challenges.

By recognizing and addressing AI decision-making risks, organizations can deploy artificial intelligence responsibly and effectively.

Risk management strategies should include governance policies, transparent system design, and continuous monitoring.

Organizations must also prioritize high-quality data and human oversight to ensure reliable outcomes.

Additionally, regulatory compliance and ethical leadership strengthen long-term trust in AI technologies.

As artificial intelligence continues to shape modern business environments, responsible risk management will remain essential.

Companies that proactively address risks will unlock the full potential of AI while protecting stakeholders and maintaining public confidence.

FAQ

1. What are the biggest risks associated with AI-driven decisions?

Common challenges include algorithmic bias, lack of transparency, poor data quality, and excessive reliance on automation.

2. How can organizations reduce bias in AI systems?

Companies can audit datasets, test algorithms regularly, and implement fairness evaluation tools.

3. Why is transparency important in automated decision systems?

Transparency allows organizations to explain how algorithms generate outcomes and identify potential errors.

4. Do governments regulate AI decision systems?

Yes. Many countries are developing regulations that focus on accountability, fairness, and responsible AI use.

5. Should humans always oversee automated decisions?

In most cases, yes, particularly for high-stakes decisions. Human oversight helps validate AI predictions and supports ethical decision-making.