Artificial intelligence continues to reshape industries across the world. Organizations now use AI systems for healthcare diagnostics, financial analysis, manufacturing automation, and customer service. However, as AI adoption accelerates, concerns about fairness, accountability, and transparency have grown significantly. Because of these concerns, global AI ethics regulations are becoming essential for guiding the responsible development and deployment of artificial intelligence technologies.

Governments, international organizations, and regulatory bodies are establishing policies to ensure AI systems operate ethically and safely. These frameworks address issues such as bias, privacy protection, algorithmic transparency, and accountability.

For businesses, understanding global AI ethics regulations is now critical. Compliance not only reduces legal risk but also builds public trust in AI technologies.

Companies that follow ethical guidelines demonstrate responsible innovation while maintaining a competitive advantage.

As artificial intelligence continues to expand into new sectors, ethical governance will remain a cornerstone of sustainable AI development.

Why AI Ethics Regulations Are Increasing Worldwide

Artificial intelligence offers enormous benefits. Yet, poorly designed AI systems can also create serious risks. These risks include algorithmic bias, privacy violations, and lack of accountability.

Because of these concerns, governments worldwide are introducing global AI ethics regulations to protect individuals and organizations.

These policies aim to ensure that AI technologies respect human rights and operate transparently.

For example, regulators increasingly require companies to explain how automated systems make decisions.

Transparency allows individuals to understand how AI affects outcomes such as credit approvals or hiring decisions.

Furthermore, ethical frameworks encourage organizations to test systems for bias and fairness.

Through global AI ethics regulations, policymakers aim to encourage responsible innovation while preventing harmful outcomes.

Companies that understand these regulations can deploy AI technologies confidently and responsibly.

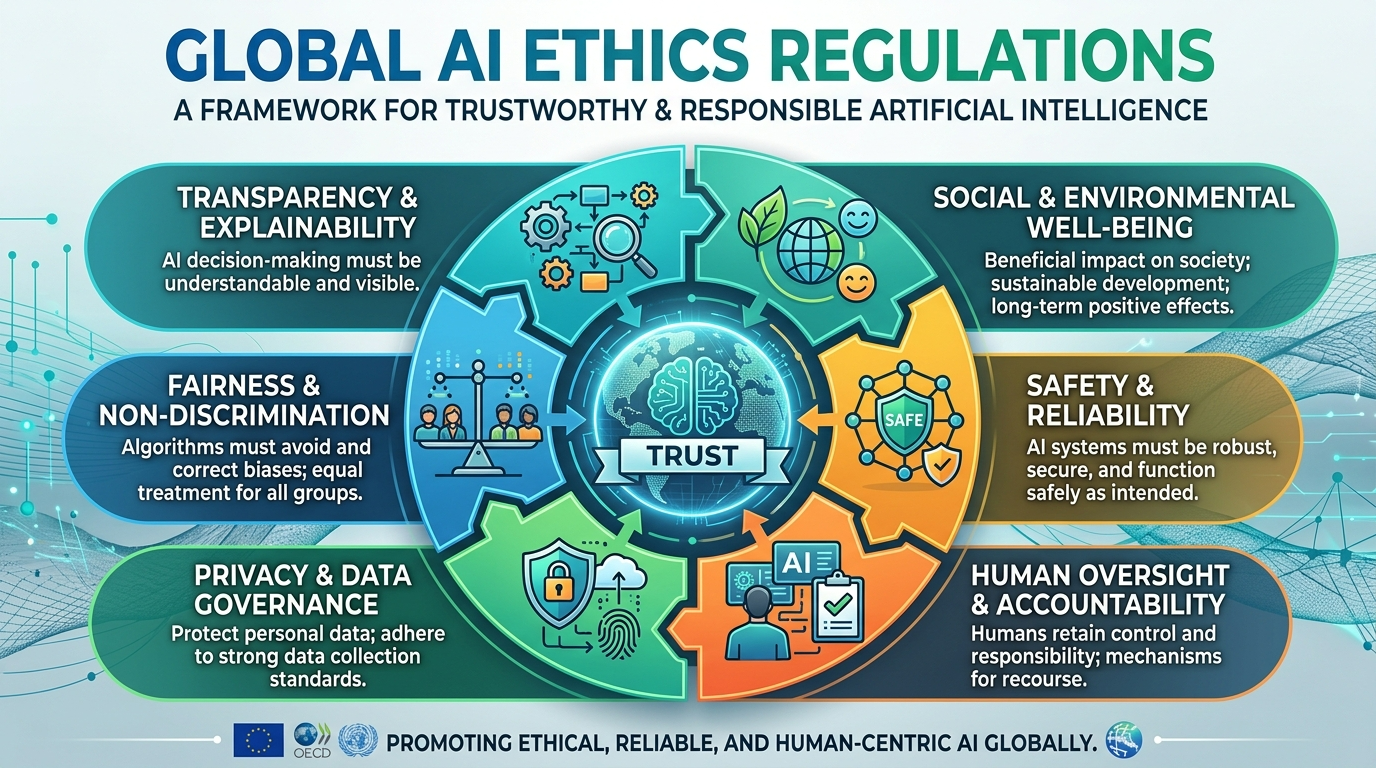

Key Principles Behind AI Ethics Policies

Although different regions have unique policies, most global AI ethics regulations follow similar ethical principles.

These principles guide how artificial intelligence systems should operate.

Transparency

AI systems should provide clear explanations for their decisions.

Transparency allows organizations to understand how algorithms process information.

Moreover, users should know when automated systems influence outcomes.

Transparent systems strengthen trust in AI technologies.
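To make "explaining a decision" more concrete, here is a minimal sketch for the simplest case: a linear scoring model, where each feature's contribution to the score is just its weight multiplied by its value. The class name `LinearScorer`, the feature names, the weights, and the threshold are all hypothetical and invented for illustration; they do not come from any real credit model or regulatory requirement.

```python
from dataclasses import dataclass


@dataclass
class LinearScorer:
    """Hypothetical linear scoring model: score = bias + sum(weight * value)."""
    weights: dict[str, float]
    bias: float
    threshold: float

    def score(self, applicant: dict[str, float]) -> float:
        return self.bias + sum(w * applicant[name] for name, w in self.weights.items())

    def decide(self, applicant: dict[str, float]) -> str:
        return "approve" if self.score(applicant) >= self.threshold else "decline"

    def explain(self, applicant: dict[str, float]) -> list[tuple[str, float]]:
        """Each feature's contribution (weight * value), largest absolute effect first."""
        contributions = [(name, w * applicant[name]) for name, w in self.weights.items()]
        return sorted(contributions, key=lambda item: abs(item[1]), reverse=True)


if __name__ == "__main__":
    # Invented weights and applicant data, for illustration only.
    model = LinearScorer(
        weights={"income_thousands": 0.05, "debt_ratio": -3.0, "late_payments": -0.5},
        bias=0.0,
        threshold=1.0,
    )
    applicant = {"income_thousands": 40.0, "debt_ratio": 0.5, "late_payments": 1.0}
    print(model.decide(applicant))
    for feature, contribution in model.explain(applicant):
        print(f"{feature}: {contribution:+.2f}")
```

With this invented applicant the model declines, and the explanation shows that income pushed the score up (+2.00) while the debt ratio (-1.50) and the late payment (-0.50) pulled it down. Non-linear models do not decompose this neatly, which is why attribution methods such as SHAP or LIME are often used for them instead.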

Accountability

Organizations must remain accountable for the actions of their AI systems.

Developers, operators, and companies share responsibility for system performance.

Accountability ensures that errors or harmful outcomes are properly investigated.

This principle forms a core component of global AI ethics regulations.
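Investigating an error is much easier when every automated decision leaves a trace. The sketch below shows one possible approach, assuming a simple append-only JSON Lines file; the function names `log_decision` and `find_decisions` and the record fields are hypothetical and not a format any regulation prescribes.

```python
import hashlib
import json
from datetime import datetime, timezone


def log_decision(path: str, model_version: str, inputs: dict, output: str, operator: str) -> dict:
    """Append one automated decision to a JSON Lines audit log (hypothetical schema)."""
    record = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "model_version": model_version,
        # Hash of the canonicalized inputs: lets investigators match this entry to
        # separately stored input records without copying raw data into the log.
        "input_sha256": hashlib.sha256(
            json.dumps(inputs, sort_keys=True).encode("utf-8")
        ).hexdigest(),
        "output": output,
        "responsible_operator": operator,
    }
    # Append mode adds to the file instead of overwriting earlier entries.
    with open(path, "a", encoding="utf-8") as log_file:
        log_file.write(json.dumps(record) + "\n")
    return record


def find_decisions(path: str, model_version: str) -> list[dict]:
    """Return every logged decision made by one model version, e.g. after a bug report."""
    with open(path, encoding="utf-8") as log_file:
        records = [json.loads(line) for line in log_file if line.strip()]
    return [r for r in records if r["model_version"] == model_version]
```

Recording the model version and a responsible operator with each decision makes it possible to trace a harmful outcome back to a specific release and owner. In production, such a log would typically live in access-controlled, tamper-resistant storage rather than a local file.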

Fairness and Non-Discrimination

AI systems must treat individuals fairly.

Algorithms should not produce biased outcomes based on race, gender, or socioeconomic factors.

Testing and auditing processes help identify potential discrimination.

Ethical regulations emphasize fairness across all AI applications.

Privacy Protection

Artificial intelligence often relies on large datasets.

Regulations require organizations to protect personal information carefully.

Privacy safeguards ensure responsible data collection and usage.
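Two common safeguards are data minimization (keeping only the fields a model needs) and pseudonymization (replacing direct identifiers with values that cannot be linked back without a separately held secret). The following is a minimal sketch of both, assuming keyed HMAC-SHA256 as the pseudonymization method; the helper names `pseudonymize` and `minimize_record` and the sample fields are hypothetical.

```python
import hashlib
import hmac
import secrets


def pseudonymize(identifier: str, secret_key: bytes) -> str:
    """Replace a direct identifier with a keyed HMAC-SHA256 pseudonym.

    The same identifier and key always give the same pseudonym, so records can
    still be joined; without the key, guessed identifiers cannot simply be
    hashed and compared.
    """
    return hmac.new(secret_key, identifier.encode("utf-8"), hashlib.sha256).hexdigest()


def minimize_record(record: dict, allowed_fields: set[str], id_field: str, secret_key: bytes) -> dict:
    """Keep only the fields the model needs and pseudonymize the identifier."""
    reduced = {k: v for k, v in record.items() if k in allowed_fields}
    reduced[id_field] = pseudonymize(record[id_field], secret_key)
    return reduced


if __name__ == "__main__":
    # In practice the key would come from a secrets manager, not be generated per run.
    key = secrets.token_bytes(32)
    customer = {"email": "jane@example.com", "phone": "555-0100", "age": 34, "purchases": 12}
    print(minimize_record(customer, {"age", "purchases"}, "email", key))
```

Pseudonymization lowers risk but does not anonymize data: under the EU's GDPR, for example, pseudonymized data is still personal data, and the key must be stored separately and protected.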

Major AI Ethics Regulations Around the World

Several major frameworks currently shape global AI ethics regulations.

These policies guide how companies design, deploy, and manage AI systems.

European Union AI Act

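The European Union has adopted one of the most comprehensive AI regulatory frameworks, the AI Act, which entered into force in August 2024 with obligations phasing in over the following years.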

The EU AI Act categorizes AI systems by risk level: unacceptable risk (prohibited practices), high risk, limited risk, and minimal risk.

High-risk systems require strict compliance measures, including transparency and documentation requirements.

This regulation plays a major role in shaping global AI ethics regulations.
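This risk-based structure lends itself to an internal triage step before a system is built. The sketch below assumes an organization keeps its own mapping from use-case labels to the Act's four tiers; the labels, the mapping, and the `triage` function are hypothetical illustrations that follow commonly cited examples (social scoring is prohibited, CV screening and credit scoring are listed as high risk, chatbots mainly carry transparency duties, spam filters are minimal risk). Actual classification depends on the legal text and should involve legal review.

```python
from enum import Enum


class RiskTier(Enum):
    UNACCEPTABLE = "unacceptable"  # prohibited practices
    HIGH = "high"                  # strict compliance and documentation obligations
    LIMITED = "limited"            # mainly transparency obligations
    MINIMAL = "minimal"            # few or no additional requirements


# Hypothetical internal mapping from use-case labels to tiers.
USE_CASE_TIERS = {
    "social_scoring": RiskTier.UNACCEPTABLE,
    "cv_screening": RiskTier.HIGH,
    "credit_scoring": RiskTier.HIGH,
    "customer_chatbot": RiskTier.LIMITED,
    "spam_filter": RiskTier.MINIMAL,
}


def triage(use_case: str) -> RiskTier:
    """Return a provisional tier; unknown use cases default to HIGH so they get reviewed."""
    return USE_CASE_TIERS.get(use_case, RiskTier.HIGH)


if __name__ == "__main__":
    for use_case in ["credit_scoring", "customer_chatbot", "new_recommender"]:
        print(use_case, "->", triage(use_case).value)
```

Defaulting unknown use cases to the high-risk tier is a deliberately cautious choice: it forces a review instead of silently treating a new application as low risk.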

OECD AI Principles

The Organisation for Economic Co-operation and Development (OECD) adopted its AI Principles in 2019, and many countries have since endorsed them.

These guidelines promote responsible innovation and human-centered AI development.

The OECD framework influences policies across numerous governments.

United States AI Governance Initiatives

The United States currently emphasizes voluntary guidelines and risk management frameworks.

For example, the National Institute of Standards and Technology (NIST) published the AI Risk Management Framework (AI RMF 1.0) in January 2023.

These initiatives contribute to broader international discussions about AI ethics regulation.

China’s AI Governance Framework

China has introduced policies emphasizing ethical AI development and algorithmic transparency, including rules for recommendation algorithms and generative AI services.

Chinese regulations focus heavily on content moderation and social stability.

These frameworks continue to shape the evolving global AI regulatory landscape.

How Businesses Can Prepare for AI Compliance

Organizations adopting artificial intelligence must prepare for evolving global AI ethics regulations.

Compliance strategies require proactive planning and governance.

Develop Internal AI Governance Policies

Companies should establish internal guidelines for responsible AI development.

These policies define standards for data use, fairness testing, and model transparency.

Strong governance programs help organizations align with global AI ethics regulations.

Implement Bias Detection and Testing

Algorithmic bias remains one of the most significant ethical concerns in AI systems.

Organizations should implement testing frameworks that detect unfair outcomes.

Regular auditing helps identify risks before they impact real-world decisions.
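A simple starting point for such testing is to compare selection rates across groups. The sketch below computes the disparate impact ratio (the lowest group's positive-outcome rate divided by the highest) and flags results below 0.8, a threshold borrowed from the US "four-fifths" rule of thumb used in employment contexts. The function names and sample data are hypothetical, and this is only one metric among many: passing it does not prove a system is fair, and the appropriate metric depends on context and jurisdiction.

```python
from collections import defaultdict


def selection_rates(outcomes: list[int], groups: list[str]) -> dict[str, float]:
    """Share of positive outcomes (1) within each group."""
    positives: dict[str, int] = defaultdict(int)
    totals: dict[str, int] = defaultdict(int)
    for outcome, group in zip(outcomes, groups):
        totals[group] += 1
        positives[group] += outcome
    return {g: positives[g] / totals[g] for g in totals}


def disparate_impact_ratio(outcomes: list[int], groups: list[str]) -> float:
    """Lowest group selection rate divided by the highest (1.0 means equal rates)."""
    rates = selection_rates(outcomes, groups)
    highest = max(rates.values())
    return min(rates.values()) / highest if highest > 0 else 1.0


def flag_for_review(outcomes: list[int], groups: list[str], threshold: float = 0.8) -> bool:
    """Flag when the ratio falls below the threshold (0.8 echoes the four-fifths heuristic)."""
    return disparate_impact_ratio(outcomes, groups) < threshold


if __name__ == "__main__":
    # Invented outcomes (1 = approved) for two hypothetical groups.
    outcomes = [1, 1, 1, 0, 1, 1, 0, 0, 0, 1]
    groups = ["A"] * 5 + ["B"] * 5
    print(selection_rates(outcomes, groups))
    print(disparate_impact_ratio(outcomes, groups))
    print(flag_for_review(outcomes, groups))
```

In this invented sample, group A is approved 80% of the time and group B 40%, giving a ratio of 0.5, which is flagged for review.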

Maintain Transparent Documentation

Regulatory frameworks often require organizations to document AI development processes.

Companies should maintain clear records describing datasets, model design, and evaluation methods.

Transparent documentation supports compliance with emerging regulations.
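One lightweight way to keep such records consistent is to store them as structured data alongside the model. The sketch below uses a hypothetical `ModelRecord` class, loosely modeled on the "model card" practice, with fields for the datasets, model design, and evaluation methods mentioned above; the field names and the completeness check are assumptions, not a schema required by any regulation.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class ModelRecord:
    """Hypothetical documentation record for one model version."""
    model_name: str
    version: str
    intended_use: str
    training_datasets: list[str]
    model_design: str
    evaluation_methods: list[str]
    evaluation_results: dict[str, float] = field(default_factory=dict)
    known_limitations: list[str] = field(default_factory=list)

    def missing_fields(self) -> list[str]:
        """Names of required fields left empty, usable as a pre-release check."""
        required = ["intended_use", "training_datasets", "model_design", "evaluation_methods"]
        return [name for name in required if not getattr(self, name)]

    def to_json(self) -> str:
        """Serialize the record so it can be versioned next to the model artifact."""
        return json.dumps(asdict(self), indent=2)


if __name__ == "__main__":
    record = ModelRecord(
        model_name="example-loan-scorer",  # hypothetical model
        version="1.0.0",
        intended_use="Pre-screening of consumer loan applications with human review",
        training_datasets=["internal_applications (hypothetical)"],
        model_design="Logistic regression on tabular features",
        evaluation_methods=[],
    )
    print(record.missing_fields())
    print(record.to_json())
```

Running a check like `missing_fields()` in a release pipeline can block deployment until the documentation is complete.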

Train Teams on Responsible AI Development

Employee training programs help teams understand ethical AI principles.

Developers, analysts, and managers should learn how regulations affect system design.

Training initiatives strengthen alignment with global AI ethics regulations.

Challenges in Implementing AI Ethics Policies

Although ethical frameworks provide valuable guidance, organizations often face challenges implementing global AI ethics regulations.

One challenge involves regulatory fragmentation.

Different countries may adopt different, and sometimes conflicting, requirements.

Companies operating internationally must navigate complex compliance requirements.

Another challenge involves technical complexity.

AI systems can be difficult to interpret.

Ensuring transparency requires advanced tools and expertise.

Additionally, ethical AI development requires multidisciplinary collaboration.

Engineers, legal experts, and policy specialists must work together.

Despite these challenges, organizations that prioritize ethical AI governance gain long-term advantages.

Proactive compliance strengthens both operational resilience and public trust.

The Future of AI Regulation

As artificial intelligence continues to evolve, policymakers will likely expand global AI ethics regulations further.

Several trends suggest how these policies may develop.

First, governments may introduce stricter oversight for high-risk AI applications.

Systems used in healthcare, finance, and law enforcement will likely face stronger regulation.

Second, regulators may require more transparency regarding algorithmic decision-making.

Organizations may need to explain how AI models generate predictions.

Third, international cooperation will become increasingly important.

Countries may collaborate to develop shared ethical standards.

This cooperation would help harmonize global AI ethics regulations across jurisdictions.

Finally, technological innovation will influence regulatory frameworks.

As AI capabilities expand, policymakers must continually adapt ethical guidelines.

Organizations that monitor these developments will be better prepared for future compliance requirements.

Conclusion

Artificial intelligence offers transformative potential across industries. However, responsible development remains essential for maintaining trust and protecting society.

Through global AI ethics regulations, governments and international organizations aim to ensure that AI technologies operate transparently, fairly, and responsibly.

These frameworks address issues such as bias, accountability, privacy, and transparency.

For businesses, understanding ethical regulations is no longer optional. Compliance strategies must become part of every AI deployment plan.

Organizations that prioritize ethical governance gain both regulatory compliance and public trust.

Moreover, responsible AI development fosters innovation while minimizing risks.

As artificial intelligence continues to shape the global economy, ethical regulation will remain a central pillar of sustainable technology development.

Companies that align their strategies with evolving regulations will lead the next generation of responsible AI innovation.

FAQ

1. Why are governments introducing AI ethics regulations?

Governments aim to ensure that artificial intelligence systems operate fairly, transparently, and safely.

2. Which region currently leads in AI regulation?

The European Union has introduced one of the most comprehensive frameworks through its AI Act.

3. How can companies prepare for AI governance requirements?

Organizations should implement internal policies, transparency practices, and bias testing frameworks.

4. Do AI regulations apply to all industries?

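Largely, yes, because many frameworks apply across sectors. However, obligations usually scale with risk: high-risk sectors such as healthcare and finance often face stricter oversight, while many low-risk applications face few specific requirements.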

5. Will AI regulations continue evolving?

Yes. As AI technologies advance, policymakers will continue refining ethical frameworks and compliance standards.